A penetration test creates value only when the report changes engineering behavior. Scans, screenshots, and raw findings are not the deliverable. The deliverable is a clear decision document that helps teams fix risk quickly and verify that risk is actually reduced.

Strong reporting is a skill separate from testing. You can discover critical issues and still fail the engagement if impact is vague, evidence is weak, or remediation is not usable by developers.

How to write a pentest report

Use this framework to produce reports that are technically sound, business-relevant, and remediation-ready.

1) Why the report is the real deliverable

- It translates testing into prioritized security action

- It aligns engineering, security, and business stakeholders

- It documents risk posture at a specific point in time

- It becomes evidence for compliance and governance reviews

- It creates the baseline for retest and security improvement cycles

A good pentest without a clear report becomes expensive noise.

2) Write for multiple audiences in one document

Different readers care about different decisions. The same finding must be readable at multiple levels.

| Audience | Main Question | What They Need |

|---|---|---|

| Executive Leadership | “What business risk exists and what is the priority?” | Risk themes, severity distribution, timelines, ownership |

| Technical Owner / Team Lead | “What system is affected and what should we fix first?” | Asset mapping, practical remediation plan, sequencing |

| Developers / Engineers | “What exactly failed and how do we correct it?” | Repro context, evidence clarity, implementation guidance |

| Compliance / Risk Team | “Is risk documented and tracked with accountability?” | Scope, method, severity rationale, retest status, references |

A single report should support all of these decisions without duplicating content excessively.

3) Full report structure that works in real projects

Keep structure consistent across engagements so reviewers can navigate quickly.

Recommended report sections

- Cover page

- Scope and rules of engagement

- Methodology summary

- Executive summary

- Risk overview and severity distribution

- Detailed findings

- Affected assets and service mapping

- Evidence appendix

- CVSS scoring and rationale

- Business impact mapping

- Remediation plan and ownership

- Retest status/results

- Appendix (references, exclusions, assumptions)

Section purpose table

| Section | Purpose | Common Mistake |

|---|---|---|

| Scope | Defines legal and technical boundaries | Vague target listing or missing exclusions |

| Methodology | Explains how testing was done | Tool list without process clarity |

| Executive Summary | Business-level risk snapshot | Too technical or too generic |

| Findings | Actionable technical risk details | Copy-pasted scanner output |

| Remediation | Fix plan with owners and sequence | “Apply best practices” style vagueness |

| Retest | Confirms whether risk still exists | Missing or delayed validation results |

4) Finding template you can reuse every time

Each finding should be structured identically to improve readability and triage speed.

Practical finding format

- Finding ID and title (behavior-focused)

- Affected asset, endpoint, or component

- Severity and CVSS vector/score

- Technical description (what was observed)

- Preconditions and tested role/context

- Evidence summary (safe, concise, reproducible)

- Business impact statement

- Remediation guidance (developer-ready)

- References (OWASP, MITRE, standards as relevant)

- Owner and SLA target

- Retest status and date

Example skeleton table

| Field | Example Format |

|---|---|

| Title | “Cross-Tenant Access Allowed on Invoice Detail Endpoint” |

| Asset | api.billing.example – GET /v2/invoices/{id} |

| Severity | High (CVSS score + vector documented) |

| Evidence | Redacted request/response pair and role comparison |

| Impact | Exposure of confidential invoice metadata to other tenants |

| Remediation | Enforce tenant ownership checks at API service layer |

| Retest | Pending / Passed / Failed with date |
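One way to keep every finding structurally identical is to hold it in a typed record before rendering the report. The sketch below is a minimal illustration, not a prescribed schema: the class name `Finding`, its field names, and the `missing_fields` helper are assumptions chosen to mirror the template above, and the CVSS vector and score in the example are placeholder values, not the result of a real assessment.

```python
from dataclasses import dataclass, field, fields
from datetime import date
from typing import List, Optional


@dataclass
class Finding:
    """One finding, with a field for each item in the template above."""
    finding_id: str
    title: str
    asset: str
    severity: str
    cvss_vector: str
    cvss_score: float
    description: str
    preconditions: str
    evidence_summary: str
    business_impact: str
    remediation: str
    references: List[str] = field(default_factory=list)
    owner: str = ""
    sla_target: Optional[date] = None
    retest_status: str = "Pending"  # Pending / Passed / Failed
    retest_date: Optional[date] = None

    def missing_fields(self) -> List[str]:
        """Names of fields that are still empty."""
        missing = []
        for f in fields(self):
            # A retest date is only expected once the retest has happened.
            if f.name == "retest_date" and self.retest_status == "Pending":
                continue
            if getattr(self, f.name) in ("", None, []):
                missing.append(f.name)
        return missing


# Placeholder values for illustration only.
finding = Finding(
    finding_id="FND-01",
    title="Cross-Tenant Access Allowed on Invoice Detail Endpoint",
    asset="api.billing.example GET /v2/invoices/{id}",
    severity="High",
    cvss_vector="CVSS:3.1/AV:N/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:N",
    cvss_score=8.1,
    description="Invoice detail is returned for IDs owned by another tenant.",
    preconditions="Authenticated standard user in tenant A.",
    evidence_summary="Redacted request/response pair and role comparison.",
    business_impact="Exposure of confidential invoice metadata.",
    remediation="Enforce tenant ownership checks at the API service layer.",
    references=["OWASP API Security Top 10: Broken Object Level Authorization"],
)
print(finding.missing_fields())  # ['owner', 'sla_target']
```

Running a check like `missing_fields()` before delivery makes gaps such as an unassigned owner visible while they are still cheap to fix.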

5) Evidence quality standards for pentest reports

Evidence must prove the finding while minimizing risk and data exposure.

Evidence types to include

- Screenshots with meaningful context and redaction

- Request/response snippets with timestamps

- Log excerpts correlated to tested action

- Affected endpoint/path and environment details

- Reproduction summary at a safe, high level

Evidence quality table

| Evidence Type | Strong Evidence | Weak Evidence |

|---|---|---|

| Screenshot | Focused, redacted, includes relevant identifiers | Full-screen noise with sensitive data |

| Request/Response | Includes method, endpoint, role, and outcome | Partial payload without context |

| Timeline | Ordered events with UTC timestamps | Unordered notes with no time reference |

| Reproduction Summary | Steps and conditions described safely | “Issue reproduced” with no detail |

| Logs | Correlated event IDs and related system context | Generic log line without linkage |
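The timeline row above asks for ordered events with UTC timestamps. A small helper can normalize capture times before they go into the report. This is a sketch under assumptions: the function names `to_utc_iso` and `build_timeline` are hypothetical, and the events are invented for illustration.

```python
from datetime import datetime, timedelta, timezone


def to_utc_iso(ts: datetime) -> str:
    """Format a timezone-aware timestamp as ISO 8601 in UTC."""
    if ts.tzinfo is None:
        # A naive timestamp cannot be converted reliably, so reject it.
        raise ValueError("naive timestamp: record the timezone at capture time")
    return ts.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def build_timeline(events):
    """Sort (timestamp, description) pairs and render one line per event."""
    ordered = sorted(events, key=lambda event: event[0])
    return [f"{to_utc_iso(ts)}  {description}" for ts, description in ordered]


# Invented events, recorded in two different timezones.
events = [
    (datetime(2026, 4, 18, 10, 5, tzinfo=timezone(timedelta(hours=2))),
     "Cross-tenant invoice request returned 200"),
    (datetime(2026, 4, 18, 8, 0, tzinfo=timezone.utc),
     "Logged in as standard user (tenant A)"),
]
for line in build_timeline(events):
    print(line)
```

Rejecting naive timestamps is a deliberate choice here: guessing a timezone after the fact is a common source of timelines that cannot be correlated with server logs.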

Redaction rules to enforce

- Remove customer PII and secrets

- Mask tokens, API keys, session identifiers

- Keep enough context for technical validation

- Preserve original unredacted evidence securely for authorized internal review only
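The masking rules above can be partly automated before evidence is pasted into a report. The following is a minimal sketch, assuming a few common token formats; the patterns and the `redact` function are hypothetical and will need extending for the headers, cookies, and PII types in a given environment. Automated masking does not replace a manual review of each artifact.

```python
import re

# Hypothetical patterns; extend them for the formats seen in your environment.
REDACTION_PATTERNS = [
    # "Authorization: Bearer <token>" headers
    (re.compile(r"(?i)(authorization:\s*bearer\s+)\S+"), r"\1[REDACTED_TOKEN]"),
    # Cookie-style session identifiers such as "session=..." or "sessionid=..."
    (re.compile(r"(?i)\b(session(?:id)?=)[^;\s]+"), r"\1[REDACTED_SESSION]"),
    # API keys sent as a header or as a query parameter
    (re.compile(r"(?i)\b(x-api-key:\s*|api_key=)[^&\s]+"), r"\1[REDACTED_KEY]"),
    # Email addresses, a common form of customer PII
    (re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"), "[REDACTED_EMAIL]"),
]


def redact(text: str) -> str:
    """Apply every pattern in turn, keeping the surrounding context intact."""
    for pattern, replacement in REDACTION_PATTERNS:
        text = pattern.sub(replacement, text)
    return text


raw = (
    "GET /v2/invoices/1042 HTTP/1.1\n"
    "Authorization: Bearer eyJhbGciOi.abc.def\n"
    "Cookie: session=9f8e7d; theme=dark\n"
    "X-Api-Key: k-12345\n"
    '{"contact": "jane.doe@example.com"}'
)
print(redact(raw))
```

The method, path, and header names survive, so an engineer can still validate the request, while the secret values do not.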

6) CVSS in pentest reporting: use it correctly

CVSS improves consistency when used transparently.

Practical CVSS guidance

- Always include the vector string, with its CVSS version prefix, alongside the score

- Keep base score technical and reproducible

- Separate business priority discussion from CVSS numeric value

- Document assumptions for uncertain metrics

CVSS interpretation notes

| Element | Reporting Use |

|---|---|

| Base Score | Standardized technical severity baseline |

| Vector | Explains scoring logic and reproducibility |

| Context Overlay | Adds business/operational urgency outside base score |

| Retest Status | Determines if scored risk is still active |

Do not inflate severity to force prioritization. Explain business impact clearly instead.
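Keeping the base score reproducible is easier when reviewers can recompute it from the vector string. The sketch below assumes CVSS v3.1 and implements only the base metric group, using the metric weights and the Roundup rule from the FIRST specification. The function names are hypothetical, and a report should still cite the official calculator as the reference.

```python
# CVSS v3.1 base metric weights.
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},
    "AC": {"L": 0.77, "H": 0.44},
    "UI": {"N": 0.85, "R": 0.62},
    "C": {"H": 0.56, "L": 0.22, "N": 0.0},
    "I": {"H": 0.56, "L": 0.22, "N": 0.0},
    "A": {"H": 0.56, "L": 0.22, "N": 0.0},
}
# Privileges Required is weighted differently when Scope is changed.
PR_WEIGHTS = {
    "U": {"N": 0.85, "L": 0.62, "H": 0.27},
    "C": {"N": 0.85, "L": 0.68, "H": 0.5},
}


def roundup(value: float) -> float:
    """Round up to one decimal place, following the v3.1 Roundup definition."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (scaled // 10000 + 1) / 10.0


def base_score(vector: str) -> float:
    """Compute the base score from a 'CVSS:3.1/AV:.../...' vector string."""
    prefix, *parts = vector.split("/")
    if prefix != "CVSS:3.1":
        raise ValueError("expected a CVSS:3.1 vector string")
    m = dict(part.split(":") for part in parts)
    scope = m["S"]
    iss = 1 - (
        (1 - WEIGHTS["C"][m["C"]])
        * (1 - WEIGHTS["I"][m["I"]])
        * (1 - WEIGHTS["A"][m["A"]])
    )
    if scope == "U":
        impact = 6.42 * iss
    else:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    exploitability = (
        8.22
        * WEIGHTS["AV"][m["AV"]]
        * WEIGHTS["AC"][m["AC"]]
        * PR_WEIGHTS[scope][m["PR"]]
        * WEIGHTS["UI"][m["UI"]]
    )
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if scope == "C":
        total *= 1.08
    return roundup(min(total, 10))


def severity(score: float) -> str:
    """Map a score to the v3.1 qualitative severity rating."""
    if score == 0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


score = base_score("CVSS:3.1/AV:N/AC:L/PR:L/UI:N/S:U/C:H/I:N/A:N")
print(score, severity(score))  # 6.5 Medium
```

Note how a read-only issue reachable by a low-privilege user lands at Medium on the base score alone. If the business context makes it more urgent, that argument belongs in the impact statement, not in an adjusted vector.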

7) Weak vs strong report writing

| Weak Reporting Pattern | Strong Reporting Pattern |

|---|---|

| “Broken access control found.” | “Standard user can access admin-only billing export endpoint due to missing server-side role check.” |

| “Critical vulnerability in API.” | “High-severity API authorization gap allows cross-tenant record access under authenticated low-privilege context.” |

| “Fix authentication issue.” | “Invalidate active session tokens after password reset and enforce MFA check on account recovery endpoint.” |

| “Potential data breach risk.” | “Issue can expose customer order metadata in production because the endpoint returns records by ID without checking tenant ownership.” |

| “Patched by dev team.” | “Remediation deployed on 2026-04-18; retest confirms unauthorized role request now returns access denied.” |

Strong reporting is specific, reproducible, and tied to action.

8) Writing remediation developers can implement

Remediation should describe what to change and where to enforce it.

Remediation writing pattern

- State desired security control behavior

- Specify affected system layer (API gateway, service logic, auth middleware, etc.)

- Include sequencing if multiple fixes are needed

- Add validation criteria for retest

Remediation quality checklist

- Is there a clear owner team?

- Is the scope of change defined?

- Is the control location identified?

- Is the retest condition explicit?

- Is the timeline realistic against the SLA?

Example remediation style

Bad: “Harden API authorization.”

Better: “Implement server-side tenant ownership verification in invoice retrieval service before data fetch; deny access when tenant ID in token does not match record ownership. Add integration test coverage for cross-tenant access attempts.”
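Developer-ready remediation often lands faster when it is accompanied by a short illustration of the control. The sketch below shows the shape of the ownership check described in the "Better" example. Everything in it is hypothetical: the `get_invoice` function, the `owner_index` lookup, and the in-memory stores stand in for whatever service and data layer the real system uses.

```python
class AccessDenied(Exception):
    """Raised when the caller's tenant does not own the requested record."""


def get_invoice(invoice_id, token_claims, owner_index, invoice_store):
    """Return an invoice only if the tenant in the token owns it.

    owner_index maps invoice ID to owning tenant ID and is consulted
    before the record itself is fetched.
    """
    caller_tenant = token_claims.get("tenant_id")
    owner = owner_index.get(invoice_id)
    # Unknown IDs and foreign records get the same answer, so the response
    # does not reveal whether another tenant's invoice exists.
    if caller_tenant is None or owner != caller_tenant:
        raise AccessDenied("invoice not accessible for this tenant")
    return invoice_store[invoice_id]


# Hypothetical data: invoice 1042 belongs to tenant-b.
owner_index = {1041: "tenant-a", 1042: "tenant-b"}
invoice_store = {1041: {"total": 120}, 1042: {"total": 990}}

# Same-tenant access succeeds.
print(get_invoice(1041, {"tenant_id": "tenant-a"}, owner_index, invoice_store))

# Cross-tenant access is denied, which is also the retest condition.
try:
    get_invoice(1042, {"tenant_id": "tenant-a"}, owner_index, invoice_store)
except AccessDenied as exc:
    print("denied:", exc)
```

The cross-tenant attempt at the end doubles as the integration test the remediation text asks for, and as the explicit pass condition for the retest.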

9) Referencing OWASP and MITRE without overloading the report

References should improve clarity, not clutter findings.

Practical reference strategy

- Use OWASP categories for web/API weakness context

- Use MITRE ATT&CK references where behavior maps clearly to attack techniques

- Keep references concise and relevant to the specific finding

- Avoid reference dumping without explanation

Reference mapping table

| Reference Type | Best Use |

|---|---|

| OWASP Top 10 / API Top 10 | Application and API weakness classification |

| MITRE ATT&CK | Behavioral context for detection and response teams |

| Internal Standards | Policy and control accountability alignment |

| Compliance Controls | Audit and governance traceability |

10) Common reporting mistakes that reduce impact

- Vague impact statements without business context

- Findings dominated by scanner text rather than analyst reasoning

- No prioritization or owner assignment

- Missing retest commitments and status tracking

- Evidence that cannot be reproduced by engineering teams

- Overly long narratives with no action summary

- Severity inflation to force urgency

Fast guardrails

- Every finding must answer: what, where, why it matters, how to fix, who owns it

- Every severity rating must include a CVSS vector and rationale

- Every critical/high finding must have an explicit retest plan
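These guardrails are mechanical enough to check automatically before QA review. The sketch below assumes findings are stored as dictionaries; the key names (`description`, `asset`, `business_impact`, `remediation`, `owner`, `cvss_vector`, `cvss_rationale`, `retest_plan`) and the function `guardrail_violations` are assumptions for illustration, not a standard schema.

```python
# Each guardrail question mapped to the (hypothetical) key that answers it.
REQUIRED_ANSWERS = {
    "what": "description",
    "where": "asset",
    "why it matters": "business_impact",
    "how to fix": "remediation",
    "who owns it": "owner",
}


def guardrail_violations(finding: dict) -> list:
    """Return a list of human-readable guardrail failures for one finding."""
    problems = []
    for question, key in REQUIRED_ANSWERS.items():
        if not str(finding.get(key) or "").strip():
            problems.append(f"missing '{question}' ({key})")
    if not finding.get("cvss_vector") or not finding.get("cvss_rationale"):
        problems.append("severity lacks CVSS vector or rationale")
    level = str(finding.get("severity") or "").lower()
    if level in ("critical", "high") and not finding.get("retest_plan"):
        problems.append("critical/high finding has no retest plan")
    return problems


draft = {
    "description": "Standard user can call the admin-only billing export.",
    "asset": "billing export endpoint",
    "business_impact": "",
    "remediation": "Add a server-side role check.",
    "owner": None,
    "severity": "High",
    "cvss_vector": "CVSS:3.1/AV:N/AC:L/PR:L/UI:N/S:U/C:H/I:N/A:N",
}
for problem in guardrail_violations(draft):
    print(problem)
```

A check like this only proves that a field is filled in, not that it is well written, so it complements peer review rather than replacing it.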

11) Reusable pentest report checklist

Use this before final delivery.

| Checklist Item | Done |

|---|---|

| Scope and exclusions are explicit and approved | ☐ |

| Methodology reflects actual testing performed | ☐ |

| Executive summary highlights top business risks clearly | ☐ |

| Findings use consistent structure and IDs | ☐ |

| Evidence is redacted, timestamped, and reproducible | ☐ |

| CVSS score + vector documented for each finding | ☐ |

| Remediation steps are specific and owner-aligned | ☐ |

| References (OWASP/MITRE/policy) are relevant and concise | ☐ |

| Retest status and dates are included or scheduled | ☐ |

| Final QA review completed for clarity and consistency | ☐ |

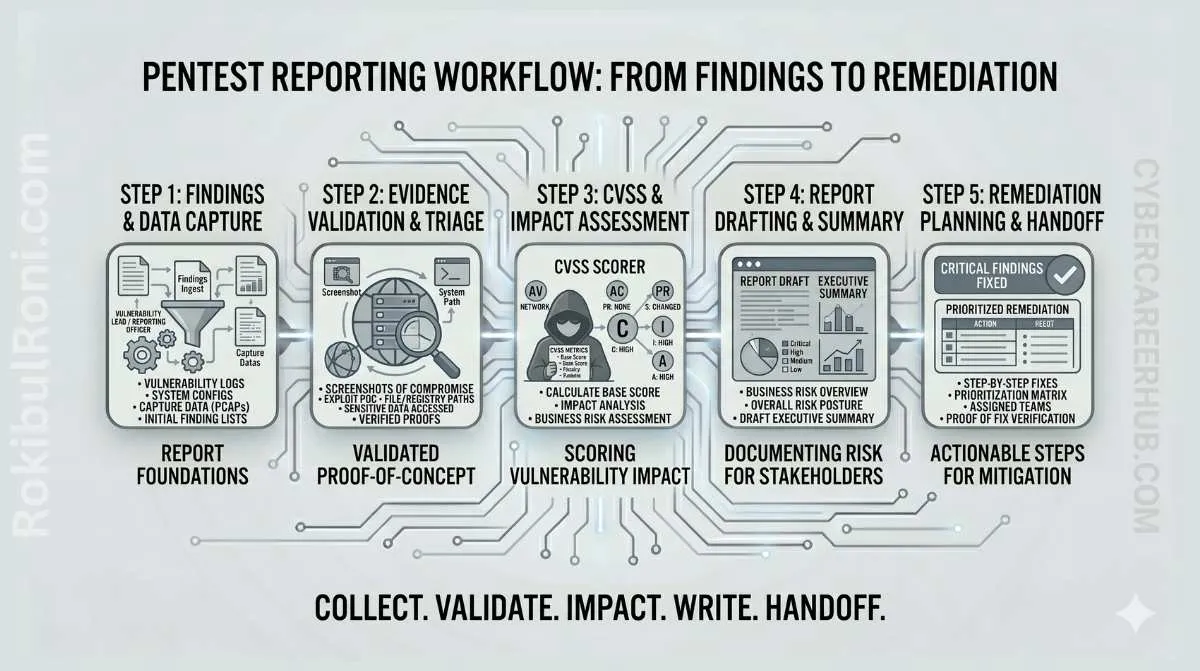

12) Practical report delivery workflow

Delivery sequence

- Internal QA review (technical accuracy + writing quality)

- Stakeholder pre-brief (high-risk themes and remediation priorities)

- Report release with version tracking

- Remediation kickoff with owner matrix

- Retest scheduling and closure workflow

Delivery artifact table

| Artifact | Audience | Purpose |

|---|---|---|

| Executive risk summary | Leadership | Decision support and prioritization |

| Technical findings report | Engineering/security | Detailed remediation execution |

| Remediation tracker | Team leads | Ownership and status visibility |

| Retest addendum | All stakeholders | Verify risk reduction and closure |

The best pentest report is not the longest one. It is the one that gets fixed quickly, retested clearly, and used to improve security decisions in the next cycle.

Reporting operations worksheet

| Workstream | Owner | First Action | Validation Signal |

|---|---|---|---|

| Template governance | Report lead | Enforce one finding format across engagements | Reduced report inconsistency |

| Evidence QA | Technical reviewer | Validate reproducibility before delivery | Fewer remediation clarification loops |

| Remediation alignment | Engineering liaison | Map findings to owner/team and control layer | Faster remediation start times |

| Retest closure | Security QA | Track closure states with proof references | More defensible closeout decisions |

Weekly reporting checklist

- Review open findings lacking clear owner/SLA

- Validate CVSS vectors for new high-impact issues

- Ensure business impact language remains specific and evidence-backed

- Confirm retest schedules for critical findings

Delivery and handoff pack

| Artifact | Minimum Content | Consumer |

|---|---|---|

| Executive summary brief | Top risks, impacted business functions, priorities | Leadership stakeholders |

| Technical remediation pack | Detailed findings and implementation actions | Engineering teams |

| Retest tracker | Status, date, evidence, residual risk notes | Security governance |

| Lessons register | Common root causes and recurring control gaps | Security program owners |

Quality checks

- Can each finding be acted on without follow-up clarification?

- Are remediation actions specific, scoped, and testable?

- Are closure states tied to clear retest evidence?

90-day pentest reporting maturity cadence

Days 1–30

- Standardize templates and QA criteria across report types

- Baseline report quality metrics (rework, closure delays, evidence gaps)

- Improve executive summary consistency

Days 31–60

- Tighten remediation language and owner mapping

- Reduce weak findings through peer review calibration

- Improve retest package completeness

Days 61–90

- Audit report quality against closure outcomes

- Publish recurring root-cause trend insights

- Update reporting playbook for next engagement cycle

| KPI | Why It Matters |

|---|---|

| Findings requiring rewrite | Indicates clarity and structure quality |

| Remediation kickoff lead time | Shows report usability for engineering |

| Retest-backed closure ratio | Measures true risk reduction validation |

| Recurring finding category trend | Reveals systemic control issues |
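Two of these KPIs can be computed directly from a findings tracker export. The sketch below is one possible reading of them, and the definitions are assumptions: the closure ratio is taken as closed findings with a passed retest divided by all closed findings, and a category counts as recurring when it appears at least twice. The record keys and function names are hypothetical.

```python
from collections import Counter


def retest_backed_closure_ratio(findings):
    """Share of closed findings whose closure is backed by a passed retest."""
    closed = [f for f in findings if f["status"] == "closed"]
    if not closed:
        return None  # Nothing closed yet, so the ratio is undefined.
    backed = [f for f in closed if f.get("retest_result") == "passed"]
    return len(backed) / len(closed)


def recurring_categories(findings, min_count=2):
    """Categories that appear at least min_count times across findings."""
    counts = Counter(f["category"] for f in findings)
    return {category: n for category, n in counts.items() if n >= min_count}


# Invented tracker rows for illustration.
tracker = [
    {"id": "FND-01", "status": "closed", "retest_result": "passed", "category": "access control"},
    {"id": "FND-02", "status": "closed", "retest_result": None, "category": "access control"},
    {"id": "FND-03", "status": "open", "retest_result": None, "category": "session management"},
    {"id": "FND-04", "status": "closed", "retest_result": "passed", "category": "access control"},
]
print(retest_backed_closure_ratio(tracker))
print(recurring_categories(tracker))
```

In this invented data, one finding was closed without a retest, which is exactly the kind of closure the ratio is meant to surface.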

Reporting maturity increases when technical accuracy, communication quality, and remediation closure are managed as one continuous process.

Report quality assurance (QA) and acceptance criteria

Professional reports are consistent and reviewable. A simple QA pass catches most issues that frustrate engineering and leadership.

Finding acceptance checklist

- Title reflects the core issue (not the symptom).

- Clear scope context: component, environment, role.

- Evidence proves impact with minimal sensitive data exposure.

- CVSS scoring is consistent with the described impact and assumptions.

- Remediation guidance is specific, testable, and prioritized.

- Retest criteria define “fixed” in one sentence.

Evidence map template

| Finding ID | Evidence artifacts | Where stored |

|---|---|---|

| FND-01 | request/response, screenshots, logs | 02-evidence/FND-01/ |

| FND-02 | configuration excerpts, scan output | 02-evidence/FND-02/ |
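The evidence map can be generated from the evidence inventory instead of being typed by hand, which keeps storage paths consistent. This is a minimal sketch: the `evidence_map` function is hypothetical, and the `02-evidence/<finding ID>/` layout is simply the convention used in the template above.

```python
def evidence_map(artifacts, root="02-evidence"):
    """Render an evidence map table from {finding_id: [artifact kinds]}."""
    lines = ["| Finding ID | Evidence artifacts | Where stored |", "|---|---|---|"]
    for finding_id in sorted(artifacts):
        kinds = artifacts[finding_id]
        if not kinds:
            # A finding without evidence should block delivery, not ship silently.
            raise ValueError(f"{finding_id} has no evidence artifacts")
        lines.append(f"| {finding_id} | {', '.join(kinds)} | {root}/{finding_id}/ |")
    return "\n".join(lines)


inventory = {
    "FND-02": ["configuration excerpts", "scan output"],
    "FND-01": ["request/response", "screenshots", "logs"],
}
print(evidence_map(inventory))
```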

Executive summary standards

- 3–5 top risks, each with business impact and a remediation theme.

- One sentence on scope and limitations.

- A short “what improved” section if this is a retest.

Delivery and closure discipline

- Provide a remediation brief to engineering (priorities + quick wins).

- Track remediation owners and due dates.

- Retest and close findings with before/after evidence.

This is what keeps pentest reporting professional: consistent acceptance criteria, strong evidence handling, and predictable closure.