API endpoints now carry login, billing, account recovery, document upload, workflow approvals, and integration logic that used to sit behind web pages. When the controls around those endpoints are weak, API issues become business issues quickly: unauthorized data access, broken transaction controls, noisy outages, and compliance findings that engineering teams must fix under pressure.

This guide is a practical field checklist for authorized testing only. It focuses on method, evidence, communication, and remediation direction so testing improves security posture instead of generating one-off scanner output.

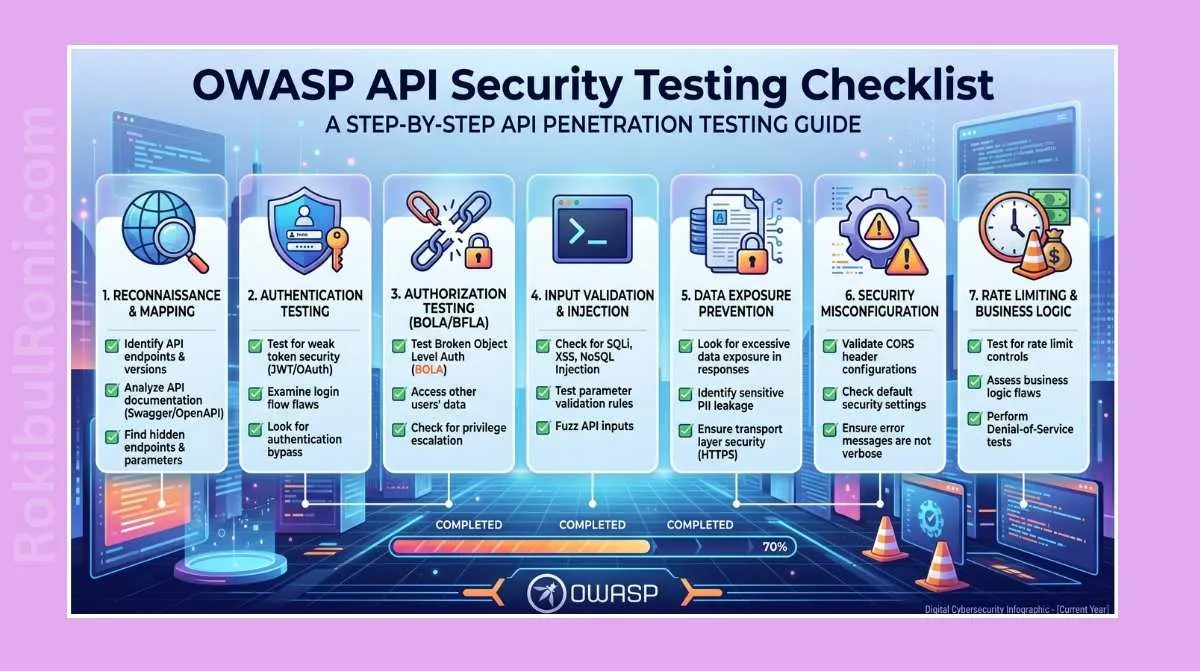

API Pentesting Checklist

Use this sequence for scoped internal assessments, client-approved engagements, and lab simulations.

1) Scope definition before touching traffic

If scope is unclear, everything that follows is noisy. Confirm scope in writing and keep it visible during testing.

Scope items to lock down

- In-scope base URLs and API gateways

- Environment boundaries (staging, pre-prod, production)

- Allowed HTTP methods and excluded routes

- Auth models in use (session, JWT, OAuth, API key, mTLS)

- User roles available for testing

- Rate-limit and traffic ceilings

- Business-critical flows (payments, password reset, approvals, account changes)

- Third-party dependencies and ownership boundaries

Scope clarification table

| Scope Area | What to Confirm | Why It Matters |

|---|---|---|

| API Surface | Base paths, versions, hostnames | Prevents out-of-scope scanning and duplicate effort |

| Identity Context | Test users for each role | Enables proper authorization validation |

| Data Rules | Non-production data use, redaction constraints | Avoids accidental exposure of sensitive records |

| Test Windows | Allowed time and maintenance windows | Reduces operational risk and alert fatigue |

| Traffic Limits | Requests per minute, burst limits | Prevents service degradation during testing |

| Escalation Path | Security contact and on-call owner | Speeds response if behavior looks suspicious |
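One way to keep the written scope "visible during testing" is to encode it and check every request against it before it is sent. The sketch below is illustrative only: the `Scope` class, the `is_in_scope` helper, and the example hostnames are hypothetical names, not part of any tool mentioned in this guide, and the real values must come from the signed scope document.

```python
import posixpath
from dataclasses import dataclass
from urllib.parse import urlsplit


@dataclass(frozen=True)
class Scope:
    # Placeholder values; copy the real ones from the approved scope document.
    base_urls: tuple
    allowed_methods: frozenset = frozenset({"GET", "POST"})
    excluded_prefixes: tuple = ()


def is_in_scope(scope: Scope, method: str, url: str) -> bool:
    """Return True only if the method and URL fall inside the written scope."""
    if method.upper() not in scope.allowed_methods:
        return False
    target = urlsplit(url)
    # Normalize the path so "/api/v2/../admin" cannot slip past a prefix check.
    path = posixpath.normpath(target.path or "/")
    for base in scope.base_urls:
        b = urlsplit(base)
        same_origin = (target.scheme, target.hostname, target.port) == (
            b.scheme, b.hostname, b.port)
        base_path = b.path.rstrip("/")
        under_base = path == base_path or path.startswith(base_path + "/")
        if same_origin and under_base:
            # Excluded routes win over an otherwise matching base URL.
            return not any(path.startswith(p) for p in scope.excluded_prefixes)
    return False
```

A helper like this can sit in front of custom scripts so that an out-of-scope host, method, or excluded route fails closed instead of generating traffic.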

2) Pre-test readiness checklist

A good API assessment starts with operational readiness, not tooling.

Mandatory pre-test controls

- Written authorization letter and approved rules of engagement

- Named technical and business contacts

- Test accounts for every required role

- API docs (OpenAPI/Swagger or equivalent)

- Known sample requests/responses

- Logging and monitoring contact for correlation

- Rollback and incident communication plan

- Confirmed backup and recovery state for the target environment

- Known excluded systems and partner APIs

Quick go/no-go gate

| Check | Status | Owner |

|---|---|---|

| Authorization and legal approval in place | ☐ | Security lead |

| Test identities created and validated | ☐ | IAM/app owner |

| Scope reviewed with engineering | ☐ | Project manager |

| Monitoring team informed | ☐ | SOC lead |

| Rollback/contact plan confirmed | ☐ | Ops lead |

If any check is missing, pause and resolve it before testing. Missing authorization in particular is a hard stop, not one item to weigh against the others.

3) OWASP API Security Top 10 mapping (2023)

Use this map to avoid blind spots. Treat it as planning coverage, not a copy-paste report section.

| OWASP API Risk | What to Evaluate in Practice | Example Evidence |

|---|---|---|

| API1 Broken Object Level Authorization | Access controls on object IDs across users/tenants | Request/response pair showing improper object exposure in authorized test context |

| API2 Broken Authentication | Session/token handling, credential lifecycle, token invalidation | Auth flow notes, token lifetime observations, logout behavior evidence |

| API3 Broken Object Property Level Authorization | Overexposed fields, hidden properties, response filtering | Comparison of role-based responses showing excess sensitive fields |

| API4 Unrestricted Resource Consumption | Rate limits, payload bounds, pagination controls | Controlled burst test logs and resulting API behavior |

| API5 Broken Function Level Authorization | Privileged endpoint access by low-privilege roles | Role matrix with endpoint/method access outcomes |

| API6 Unrestricted Access to Sensitive Business Flows | Abuse potential in high-impact workflows | Business-flow review notes with approval-step validation |

| API7 Server Side Request Forgery (SSRF) | Server-side URL fetch behavior and allow/deny controls | Validation notes from approved test URLs and server responses |

| API8 Security Misconfiguration | Verbose errors, weak headers, default configs | Error sample and security header baseline |

| API9 Improper Inventory Management | Shadow/legacy versions, undocumented endpoints | API inventory mismatch report (docs vs observed routes) |

| API10 Unsafe Consumption of APIs | Third-party API trust and response validation | Integration flow notes and failure-handling checks |
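To use the map as planning coverage, it can help to check a test plan against the ten categories before execution starts. The following is a minimal sketch; the `planned_tests` structure and the `uncovered_risks` name are assumptions made for illustration.

```python
# Short identifiers for the OWASP API Security Top 10 (2023) categories.
OWASP_API_2023 = tuple(f"API{n}" for n in range(1, 11))


def uncovered_risks(planned_tests: dict) -> list:
    """Return the categories that have no planned test case yet.

    planned_tests maps a category id (e.g. "API1") to a list of test names.
    """
    return [risk for risk in OWASP_API_2023 if not planned_tests.get(risk)]
```

An empty list for a category counts as uncovered, which makes placeholder entries in a plan visible instead of hiding them.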

4) Core testing checklist by control area

Keep this section as your working matrix during execution.

Authentication

- Verify token issuance and revocation behavior

- Confirm expired/invalid token handling is consistent

- Check password reset and account recovery flow protections

- Validate MFA-related API behavior where applicable
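Where the API issues JWTs, token lifetime can be read from the token itself and recorded as evidence. The sketch below assumes a standard three-segment JWT carrying `iat` and `exp` claims; it decodes the payload without verifying the signature, so it is suitable for observation only, never for trust decisions. The function names are hypothetical.

```python
import base64
import json


def jwt_claims(token: str) -> dict:
    """Decode the payload segment of a JWT WITHOUT verifying its signature."""
    payload = token.split(".")[1]
    # JWT segments are base64url without padding; restore it before decoding.
    padded = payload + "=" * (-len(payload) % 4)
    return json.loads(base64.urlsafe_b64decode(padded))


def token_lifetime_seconds(token: str):
    """Return exp - iat in seconds, or None if either claim is absent."""
    claims = jwt_claims(token)
    if "exp" not in claims or "iat" not in claims:
        return None
    return int(claims["exp"] - claims["iat"])
```

Opaque session tokens will not parse this way; for those, lifetime has to be observed by replaying the token over time within the approved traffic limits.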

Authorization (object-level and function-level)

- Compare access results across role accounts for same endpoints

- Validate tenant isolation in multi-tenant APIs

- Check state-changing endpoints for privilege boundaries

- Confirm admin routes are not reachable by standard roles
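Comparing results across role accounts is easier when the expected outcome is written down first and the observed responses are checked against it. This sketch assumes observations were already collected with an approved tool; the `Observation` type, the `authorization_gaps` name, and the "2xx means access granted" rule are simplifying assumptions that may not fit every API.

```python
from typing import NamedTuple


class Observation(NamedTuple):
    role: str
    method: str
    path: str
    status: int


def authorization_gaps(expected: dict, observations: list) -> list:
    """Return observations where a role succeeded but was expected to be denied.

    expected maps (role, method, path) -> True if access should be allowed.
    """
    gaps = []
    for obs in observations:
        allowed = expected.get((obs.role, obs.method, obs.path))
        if allowed is None:
            continue  # No documented expectation; review these manually.
        succeeded = 200 <= obs.status < 300
        if succeeded and not allowed:
            gaps.append(obs)
    return gaps
```

Each returned observation is a candidate finding, not a confirmed one: a 200 response with an empty or filtered body still needs manual review.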

Input validation and data handling

- Validate schema enforcement for required/optional fields

- Test type handling, boundary handling, and parser resilience

- Confirm server-side validation does not rely on client hints

- Review rejection behavior for malformed payloads

Mass assignment and property controls

- Identify writable vs read-only properties

- Check whether sensitive fields can be modified unexpectedly

- Validate strict allowlist handling on update endpoints
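The property checks above can be supported by diffing an object snapshot taken before and after an update request. A minimal sketch, assuming both snapshots are flat JSON objects already fetched under the test account; `unexpected_changes` and the field names in the usage note are hypothetical.

```python
def unexpected_changes(before: dict, after: dict, writable: set) -> dict:
    """Return fields that changed even though they are not in the writable set.

    The result maps field name -> (old value, new value).
    """
    changed = {}
    for key in before.keys() | after.keys():
        old, new = before.get(key), after.get(key)
        if old != new and key not in writable:
            changed[key] = (old, new)
    return changed
```

For example, if an update that is only supposed to change `name` also flips `role` from `"user"` to `"admin"`, the function returns `{"role": ("user", "admin")}`, which maps directly to the before/after evidence a mass assignment finding needs.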

Rate limiting and resource controls

- Validate per-user, per-IP, and per-token limits where designed

- Check lockout/backoff behavior on sensitive endpoints

- Confirm large payload and pagination safeguards
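Rate-limit evidence is more useful when the timed request log is reduced to a single observation: how many requests were accepted before throttling began. The sketch below assumes the log is a list of `(elapsed_seconds, status_code)` pairs captured during an approved, bounded burst, and that the API signals throttling with HTTP 429; both are assumptions to confirm against the target's design.

```python
from typing import Optional


def requests_before_throttle(log: list) -> Optional[int]:
    """Return how many requests were sent before the first 429 response.

    Returns None if no 429 appears in the log, meaning no limit was observed
    within the tested volume (which is not proof that no limit exists).
    """
    for index, (_elapsed, status) in enumerate(log):
        if status == 429:
            return index
    return None
```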

Sensitive data exposure and error handling

- Inspect responses for unnecessary PII, secrets, internal paths

- Verify stack traces and debug details are suppressed

- Confirm consistent error envelopes for failed requests
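A quick pattern pass over captured response bodies can flag candidates for manual review. The patterns below are illustrative and deliberately small; they are assumptions about what leakage might look like, not a complete detection set, and every hit still needs a human to confirm it.

```python
import re

# Illustrative patterns only; extend per technology stack and data classification.
LEAK_PATTERNS = {
    "python_traceback": re.compile(r"Traceback \(most recent call last\)"),
    "java_stack_frame": re.compile(r"\bat [\w.$]+\([\w$]+\.java:\d+\)"),
    "unix_path": re.compile(r"(?:/var|/home|/usr|/etc)/[\w./-]+"),
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
}


def leak_indicators(body: str) -> list:
    """Return the sorted names of all patterns that match the response body."""
    return sorted(name for name, pattern in LEAK_PATTERNS.items()
                  if pattern.search(body))
```

Running this across an error corpus also shows whether error envelopes are consistent: endpoints that return a clean envelope produce no indicators, while outliers stand out.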

File upload, CORS, headers, and logging

- Validate file type, size, and storage restrictions

- Review CORS policy against allowed origins and methods

- Check headers relevant to API hardening (context-dependent)

- Confirm security events are logged with enough context for triage
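The CORS review can be partly mechanized by sending a request with a chosen `Origin` header and inspecting the response headers. The sketch below only evaluates headers that were already captured; `cors_findings` and the finding strings are hypothetical, and whether a wildcard origin is acceptable depends on the API's design.

```python
def cors_findings(sent_origin: str, response_headers: dict,
                  allowed_origins: set) -> list:
    """Flag common CORS weaknesses in one captured response."""
    headers = {k.lower(): v for k, v in response_headers.items()}
    acao = headers.get("access-control-allow-origin")
    creds = headers.get("access-control-allow-credentials", "").lower() == "true"
    findings = []
    if acao is None:
        return findings  # No CORS headers returned for this origin.
    if acao == "*" and creds:
        # Browsers refuse this combination, but it signals a careless policy.
        findings.append("wildcard origin combined with credentials")
    elif acao == "*":
        findings.append("wildcard origin")
    elif acao == sent_origin and sent_origin not in allowed_origins:
        suffix = " with credentials" if creds else ""
        findings.append("untrusted origin reflected" + suffix)
    return findings
```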

5) Tool stack and workflow pairing

Use tools as validation instruments, not as a substitute for thinking.

| Tool | Best Use in API Assessments | Operator Note |

|---|---|---|

| Burp Suite | Intercepting, replaying, comparing role-based request behavior | Keep project scope strict and label evidence as you go |

| OWASP ZAP | Baseline checks and additional passive analysis | Use as supplementary coverage, validate findings manually |

| Postman | Auth flow testing, environment-based request collections | Maintain separate collections per role/environment |

| Browser DevTools | Capturing frontend-to-API behavior and token flow context | Useful for tracing undocumented API calls |

| Nmap (authorized support services) | Verifying exposed API-adjacent services in scope | Restrict to approved hosts and ports only |

| ffuf (scoped discovery) | Controlled discovery of likely endpoints/routes | Use only against explicitly approved base paths |

| Python scripts (custom validation) | Repeatable checks for schema/role/response consistency | Version-control scripts and attach output snapshots |

Practical workflow order

1. Build endpoint inventory from docs + observed traffic.

2. Build role matrix and prepare request baselines.

3. Validate authentication and session behavior.

4. Validate authorization across object/function/property levels.

5. Validate input handling, resource controls, and error behavior.

6. Validate logging visibility with SOC/engineering contacts.

7. Consolidate evidence and map each issue to remediation.
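The first workflow step, building the inventory from docs plus observed traffic, amounts to a set comparison once paths are normalized. A minimal sketch follows, assuming documented routes come from an OpenAPI file and observed routes from proxy history, both already reduced to `(method, path)` pairs; the normalization rule (numeric, UUID-length hex, and `{param}` segments collapse to `{id}`) is a simplifying assumption.

```python
import re


def normalize(path: str) -> str:
    """Collapse ID-like segments so /orders/42 matches /orders/{orderId}."""
    segments = []
    for seg in path.strip("/").split("/"):
        is_id = (seg.isdigit()
                 or re.fullmatch(r"[0-9a-fA-F-]{36}", seg)
                 or re.fullmatch(r"\{[^}]+\}", seg))
        segments.append("{id}" if is_id else seg)
    return "/" + "/".join(segments)


def inventory_diff(documented: list, observed: list) -> dict:
    """Compare documented and observed (method, path) pairs."""
    doc = {(m.upper(), normalize(p)) for m, p in documented}
    obs = {(m.upper(), normalize(p)) for m, p in observed}
    return {
        "undocumented": sorted(obs - doc),  # seen in traffic, missing from docs
        "unobserved": sorted(doc - obs),    # documented, never seen in traffic
    }
```

The `undocumented` list is the starting point for legacy-version and shadow-endpoint review; the `unobserved` list shows where test coverage has not yet reached.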

6) Evidence-first review table

Use this table during execution so report writing becomes easier later.

| Test Area | What to Review | Evidence to Capture | Remediation Direction |

|---|---|---|---|

| Authentication | Token lifecycle, session invalidation, MFA-relevant APIs | Auth request sequence, status codes, lifecycle timeline | Harden token policies, improve invalidation and session controls |

| Authorization | Cross-role/tenant access to objects and actions | Role comparison matrix, request/response diffs | Enforce server-side RBAC/ABAC checks per endpoint |

| Object Access Control | ID-based data access boundaries | Object access attempts across role/test accounts | Add object ownership and tenant checks at service layer |

| Function Access Control | Privileged operation protections | Endpoint-method-role access table | Restrict privileged routes and validate role claims |

| Input Validation | Type/bounds/schema handling | Rejected payload samples and error consistency notes | Centralize validation and strict schema enforcement |

| Mass Assignment | Unexpected writable fields | Before/after object snapshots per update request | Implement allowlist-based property binding |

| Rate Limiting | Burst behavior and abuse resistance | Timed request logs, threshold behavior screenshots | Apply adaptive limits and cooldown policies |

| Sensitive Data Exposure | Response minimization and data leakage | Sanitized responses showing overexposed fields | Minimize response fields and enforce data classification |

| Error Handling | Verbose diagnostics, stack traces | Error corpus with endpoint correlation | Standardize safe error responses and internal logging |

| File Upload | Type/size/content/storage controls | Upload behavior records and rejection evidence | Add strict validation and isolated storage policy |

| CORS and Headers | Origin/method policy and header posture | Header snapshots and CORS behavior notes | Narrow origin policy and enforce secure defaults |

| Logging and Monitoring | Security event visibility and traceability | Event IDs, timestamps, correlation screenshots | Improve audit fields, alert quality, and retention alignment |

7) Findings that engineering and leadership can act on

A technically correct finding that lacks business framing is usually ignored. Standardize a finding format so each issue is actionable.

Finding template (practical)

- Finding title (specific and behavior-based)

- Severity and CVSS score/vector

- Affected endpoint(s) and method(s)

- Preconditions and tested role(s)

- Proof summary (safe, concise, non-destructive)

- Business impact in plain language

- Remediation guidance with ownership hints

- Retest status and date

CVSS usage notes for API findings

- Keep CVSS technical; do not mix business priority directly into base scoring.

- Add contextual business impact separately (data sensitivity, public exposure, critical workflow impact).

- If uncertainty exists, state assumptions explicitly in the finding.

Example finding structure

| Field | Example (Format Only) |

|---|---|

| Title | “Order Detail Endpoint Allows Cross-Tenant Data Access” |

| Severity | High (CVSS: 8.1, vector documented) |

| Endpoint | GET /api/v2/orders/{orderId} |

| Affected Roles | Standard authenticated user |

| Proof Summary | Controlled test account accessed records outside assigned tenant context |

| Business Impact | Potential exposure of customer order metadata and confidentiality risk |

| Remediation | Enforce tenant ownership checks at API service layer before data fetch |

| Retest Status | Pending remediation validation |
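The finding template can be enforced in tooling so that incomplete findings are caught before they reach a report. The sketch below is one possible shape: the `Finding` class and its field names are hypothetical, and the severity mapping assumes CVSS v3.x qualitative ratings (the guide does not state which CVSS version is in use).

```python
from dataclasses import dataclass


def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


@dataclass
class Finding:
    title: str
    cvss_score: float
    cvss_vector: str
    endpoint: str
    affected_roles: str
    proof_summary: str
    business_impact: str
    remediation: str
    retest_status: str = "Pending remediation validation"

    @property
    def severity(self) -> str:
        return cvss_severity(self.cvss_score)

    def missing_fields(self) -> list:
        """Return the names of template fields left empty."""
        return [name for name, value in vars(self).items()
                if value in ("", None)]
```

Severity is derived from the score instead of being typed by hand, which keeps the technical rating separate from the plain-language business impact field.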

8) Common mistakes that weaken API pentesting

- Treating API testing as only automated scanning

- Testing one role and assuming authorization is covered

- Skipping business-flow abuse checks on high-impact endpoints

- Ignoring undocumented or legacy API versions

- Capturing poor evidence that cannot support remediation

- Reporting vague impact without affected endpoints and owners

- Running noisy tests without coordinating with monitoring teams

- Mixing out-of-scope assets into final reports

9) Turning one assessment into a repeatable security program

A strong API testing practice is cyclical: inventory, test, remediate, retest, and improve detection.

Program cadence (practical)

| Phase | Outcome | Suggested Frequency |

|---|---|---|

| API Inventory Review | Updated endpoint/version ownership map | Monthly |

| Risk-Based Testing Sprint | Focused tests on high-impact flows | Quarterly or per major release |

| Remediation Review | Verified fix progress by owner | Every two weeks during active remediation |

| Retest Window | Validation of fixed findings | After remediation milestones |

| Detection Feedback Loop | New monitoring and alert improvements | After each assessment cycle |

Operational metrics worth tracking

- Findings by severity and control area

- Mean time to remediate API findings

- Retest pass rate

- Recurring issue categories by team/service

- Coverage percentage of critical business APIs
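Two of these metrics, mean time to remediate and retest pass rate, can be computed directly from a remediation tracker export. A minimal sketch, assuming each record is a dict with hypothetical keys `opened` and `fixed` (dates, `fixed` may be `None`) and `retest_passed` (`True`, `False`, or `None` if not yet retested):

```python
from statistics import mean


def mttr_days(records: list):
    """Mean days from opened to fixed, over remediated findings only."""
    durations = [(r["fixed"] - r["opened"]).days
                 for r in records if r.get("fixed")]
    return mean(durations) if durations else None


def retest_pass_rate(records: list):
    """Share of retested findings whose fix passed, as a value from 0 to 1."""
    retested = [r for r in records if r.get("retest_passed") is not None]
    if not retested:
        return None
    return sum(1 for r in retested if r["retest_passed"]) / len(retested)
```

Both functions return `None` when there is nothing to measure, which avoids reporting a misleading zero in a cycle where no findings have been fixed or retested yet.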

The best API assessments leave behind more than a report: cleaner authorization design, better logging, stronger release gates, and security teams that can prove risk reduction over time.

Operational worksheet for implementation teams

Use this worksheet to convert the guidance above into repeatable execution tasks across security, engineering, and operations.

| Workstream | Owner | First Action | Validation Signal |

|---|---|---|---|

| Scope governance | Security lead | Publish and review scoped asset list | No out-of-scope test activity in evidence logs |

| Identity/role coverage | IAM + app owner | Build role matrix for all critical endpoints | Role-based test results captured per endpoint |

| Evidence quality | Pentest lead | Standardize evidence naming/timestamp format | Every finding has reproducible artifact links |

| Remediation workflow | Engineering manager | Assign owners and due dates per finding | Remediation tracker updated weekly |

| Retest discipline | Security QA owner | Schedule retest windows before closure | Retest status present for all high-risk findings |

| Detection feedback | SOC lead | Map findings to alert/use-case updates | New/updated detections after each assessment cycle |

Implementation checklist

- Define a shared test calendar with engineering and SOC visibility

- Store request/response evidence in a centralized structured repository

- Enforce finding templates so all reports have consistent fields

- Track recurring weaknesses by service/team, not only by single issue

- Add a closure gate requiring retest evidence for critical findings

Artifact and handoff pack standard

Assessments become more valuable when handoff artifacts are predictable and complete.

| Artifact | Minimum Content | Consumer |

|---|---|---|

| Scope pack | Approved targets, exclusions, time windows, contacts | Security + engineering |

| Test log | Endpoint, role, objective, timestamp, outcome | Pentest + audit stakeholders |

| Finding package | CVSS, business impact, remediation, owner, SLA | Engineering + leadership |

| Retest package | Before/after evidence and status change notes | Security governance |

| Detection notes | SIEM/logging improvement opportunities | SOC/detection engineering |

Handoff quality checks

- Can an engineer reproduce each issue from documented evidence?

- Is business impact written in plain language for non-technical readers?

- Are remediation actions specific enough to implement without rework?

- Are closure decisions tied to verifiable retest results?

90-day execution cadence

Days 1–30

- Standardize scope intake, evidence format, and finding templates

- Run one full risk-based API assessment on critical business flows

- Build a remediation tracker with owner/SLA fields

Days 31–60

- Complete remediation reviews for highest-risk findings

- Execute retest cycle and update closure states

- Feed observed gaps into API secure-development checklists

Days 61–90

- Repeat scoped assessment on changed services/releases

- Compare metrics (severity distribution, MTTR, retest pass rate)

- Publish program-level lessons and next-quarter priorities

| Program Metric | Why It Matters |

|---|---|

| High-risk finding recurrence rate | Shows whether controls are becoming durable |

| Mean time to remediate | Indicates operational remediation efficiency |

| Retest pass percentage | Validates fix quality, not only deployment speed |

| Detection improvement count | Confirms assessments strengthen defense outcomes |

Teams that run API testing as a quarterly operating rhythm, not a one-time deliverable, are better placed to achieve faster remediation, better release quality, and stronger cross-team security trust, and the metrics above let them show it.