The goal of a penetration test is to reduce risk, not create it. Strong validation proves a finding is real and helps engineers fix it, but reckless validation can cause outages, data corruption, or legal incidents.

This guide covers how to validate findings safely and ethically during authorized assessments. It is for controlled testing only and must not be applied to live systems without explicit, written approval.

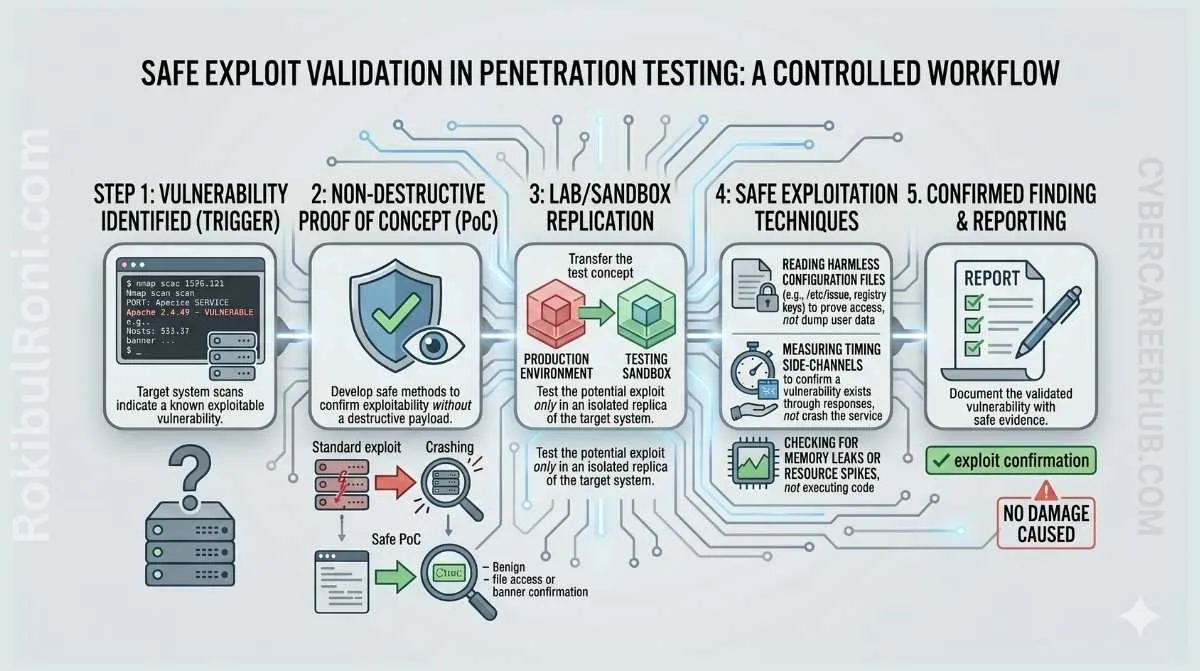

Exploit validation without causing damage

Use this workflow to produce credible evidence while protecting client systems and data.

1) Why validation matters

- False-positive reduction: confirms whether a scanner alert is a real, exploitable issue or just noise.

- Business impact clarity: shows what can actually happen, not just what a tool claims.

- Remediation confidence: gives engineering teams clear, reproducible proof to build and test fixes against.

Validation turns a theoretical issue into an actionable engineering task.

2) The principle of minimum necessary proof

The best proof is the least invasive evidence that still confirms the finding. The goal is to demonstrate that the risk exists without causing the harm it describes.

Proof hierarchy (safest to riskiest)

- Configuration proof: a setting, policy, or line of code that is insecure.

- Read-only evidence: retrieving a harmless system identifier or test record.

- Controlled state change: creating or modifying a test record owned by the assessment team.

- Harmless callback: triggering a connection to a trusted, isolated lab system.

- Log correlation: performing an action and confirming it appears in target system logs.

Avoid any action that modifies shared data, affects other users, or disrupts service availability unless explicitly approved for a specific, time-boxed test.
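
One way to apply this hierarchy is to pick the lowest-ranked proof type that both confirms the finding and is covered by written approval. The sketch below is a minimal illustration, not a standard tool: the `ProofLevel` names and the `least_invasive` helper are hypothetical, and the numeric ranks simply mirror the order of the list above.

```python
from enum import IntEnum


class ProofLevel(IntEnum):
    """Proof types ranked in the order of the list above (lower = preferred).

    The names and ranks are illustrative assumptions, not a standard.
    """
    CONFIGURATION_PROOF = 1
    READ_ONLY_EVIDENCE = 2
    CONTROLLED_STATE_CHANGE = 3
    HARMLESS_CALLBACK = 4
    LOG_CORRELATION = 5


def least_invasive(sufficient, approved):
    """Return the lowest-ranked level that is both sufficient and approved.

    Returns None when nothing qualifies, which means: stop and ask.
    """
    candidates = set(sufficient) & set(approved)
    return min(candidates) if candidates else None


choice = least_invasive(
    sufficient={ProofLevel.READ_ONLY_EVIDENCE, ProofLevel.CONTROLLED_STATE_CHANGE},
    approved={ProofLevel.CONFIGURATION_PROOF, ProofLevel.READ_ONLY_EVIDENCE},
)
print(choice.name if choice else "stop: request written approval")
```

Returning `None` instead of falling back to a riskier level keeps the decision with the approver rather than the tester.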

3) Rules of engagement and client approval

Safe validation is impossible without clear, written rules.

Mandatory pre-validation checks

- Is the target system explicitly in scope?

- Is the proposed validation method approved in the rules of engagement?

- Is the test window confirmed with the system owner?

- Is there a rollback and communication plan if something goes wrong?

- Has the client approved the specific data or system interaction?

If any answer is “no” or “unclear,” stop and get written clarification before proceeding. Verbal approval is not enough.
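
The checks above can be treated as a hard gate. The following is a minimal sketch under the assumption that each answer is recorded as "yes", "no", or "unclear"; the check names and the `pre_validation_gate` function are hypothetical labels for the five questions, not part of any framework.

```python
# Hypothetical short names for the five pre-validation questions above.
PRE_VALIDATION_CHECKS = (
    "target_in_scope",
    "method_approved_in_roe",
    "test_window_confirmed",
    "rollback_and_comms_plan",
    "client_approved_interaction",
)


def pre_validation_gate(answers):
    """Return the list of checks that block validation.

    `answers` maps a check name to "yes", "no", or "unclear".
    A missing answer is treated as "unclear". An empty list means proceed.
    """
    return [
        check
        for check in PRE_VALIDATION_CHECKS
        if answers.get(check, "unclear") != "yes"
    ]


blockers = pre_validation_gate({
    "target_in_scope": "yes",
    "method_approved_in_roe": "yes",
    "test_window_confirmed": "unclear",
    "rollback_and_comms_plan": "yes",
    # "client_approved_interaction" was never answered, so it blocks too.
})
print(blockers or "proceed")
```

Treating a missing answer the same as "unclear" matches the rule that anything short of a written "yes" stops the test.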

4) Safe validation patterns for common findings

| Finding Type | Safe Validation Pattern | Risky Action to Avoid |
|---|---|---|
| Information Disclosure | Retrieve a system hostname, version number, or test file. | Retrieving user data, configuration secrets, or application code. |
| Broken Access Control | Use a low-privilege test account to view a test record owned by a high-privilege test account. | Accessing, modifying, or deleting real user or application data. |
| SQL Injection (Read) | Extract the database version, user, or current database name. | Dumping table names, user credentials, or customer records. |
| Command Injection | Use whoami, id, or hostname to confirm execution context. | Running rm, reboot, or any command that modifies the file system or state. |
| Cross-Site Scripting (XSS) | Use a non-persistent alert() or prompt() in your own test browser session. | Storing XSS payloads that affect other users; defacing pages. |
| Server-Side Request Forgery (SSRF) | Make the target request a harmless URL hosted on a dedicated lab server you control. | Targeting internal production services, cloud metadata endpoints, or sensitive admin interfaces. |
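
For the command injection row, a small guardrail can check a proposed proof command before it goes anywhere near a payload. This is a sketch under stated assumptions: the allowlist contains only the three commands named in the table, arguments are rejected outright (for example, `hostname` with an argument can change the host name on some systems), and `is_safe_proof_command` is a hypothetical helper name.

```python
import shlex

# Only the read-only commands named in the table above.
READ_ONLY_PROOF_COMMANDS = frozenset({"whoami", "id", "hostname"})

# Characters that allow chaining, substitution, or redirection in a shell.
SHELL_METACHARACTERS = set(";|&`$<>\n\\")


def is_safe_proof_command(command):
    """Accept only a bare allowlisted command with no arguments or chaining."""
    if any(ch in SHELL_METACHARACTERS for ch in command):
        return False
    try:
        tokens = shlex.split(command)
    except ValueError:  # unbalanced quotes and similar parse errors
        return False
    return len(tokens) == 1 and tokens[0] in READ_ONLY_PROOF_COMMANDS


for proposed in ("whoami", "id; rm -rf /tmp/x", "hostname newname"):
    print(f"{proposed!r}: {'ok' if is_safe_proof_command(proposed) else 'rejected'}")
```

The check is deliberately strict: anything it cannot positively recognize as harmless is rejected and goes back to the approval process.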

5) When not to exploit

Knowing when to stop is as important as knowing how to proceed.

Do not exploit if:

- The system is a fragile production environment.

- The scope or authorization is unclear.

- The target contains sensitive health, financial, or personal data.

- It is a safety-critical or industrial control system.

- The asset is owned by a third party.

- You lack a specific, written approval for that exact test.

In these cases, report the finding based on configuration review, code analysis, or log evidence, and document the validation limits.

6) Decision table for validation and retesting

Use this table to plan evidence collection and subsequent retest methods.

| Finding Type | Safe Evidence | Risky Evidence to Avoid | Retest Method |
|---|---|---|---|
| Web/API | | | |
| Broken Object Level Authorization | Request/response diffs between roles | Accessing real cross-tenant data | Re-run role-based tests against fixed endpoint |
| Insecure Direct Object Reference | Accessing test records with predictable IDs | Cycling through IDs to find real user data | Verify server-side authorization check is now present |
| Cross-Site Scripting (Reflected) | Screenshot of alert() in tester’s browser | Storing payloads or affecting other users | Re-submit payload and confirm it is encoded/blocked |
| Infrastructure | | | |
| Missing Patches | Nessus/Nmap version scan output | Running a public exploit module | Re-scan and confirm patch is applied and version updated |
| Weak TLS Configuration | SSL Labs/testssl.sh report | Downgrade attacks against live traffic | Re-run configuration scan and verify score/protocol |
| Cloud | | | |
| Overly Permissive IAM Role | IAM policy document JSON | Using the role to access out-of-scope data | Re-analyze the policy and confirm permissions are reduced |
| Public S3 Bucket | Listing bucket contents (if approved) | Reading/writing sensitive files | Re-check bucket ACL/policy and confirm it is private |
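
The "request/response diffs between roles" evidence in the first row can be produced offline from responses you have already recorded for two test accounts. The sketch below assumes the response bodies were saved as text; `role_diff` and the sample bodies are hypothetical. It uses Python's standard `difflib.unified_diff` and sends no traffic.

```python
import difflib


def role_diff(low_priv_response, high_priv_response):
    """Return unified-diff lines between two recorded response bodies.

    An empty list means the low-privilege test account received exactly
    what the high-privilege test account received.
    """
    return list(
        difflib.unified_diff(
            high_priv_response.splitlines(),
            low_priv_response.splitlines(),
            fromfile="high_privilege_test_account",
            tofile="low_privilege_test_account",
            lineterm="",
        )
    )


# Hypothetical recorded bodies for the same seeded test record.
high = '{"id": 1001, "owner": "test-admin", "note": "seeded test record"}'
low_denied = '{"error": "forbidden"}'

print("\n".join(role_diff(low_denied, high)))
```

If the diff is empty for a record the low-privilege account should not be able to read, that suggests the authorization check is missing. After remediation, running the same comparison should show the denial response instead.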

7) How defensive experience improves validation

A background in incident response, forensics, or SOC analysis makes for a safer offensive tester.

- SIEM/Log-friendly tests: You know what a good detection signal looks like and can perform actions that are easy for the blue team to trace and validate.

- Evidence preservation: You understand chain of custody and how to document artifacts in a forensically sound manner.

- Clear remediation: You can provide remediation advice that aligns with how defensive tools and processes actually work.

8) Common mistakes in exploit validation

- Chasing impact too far: causing a denial of service or data integrity issue just to get a “critical” finding.

- Touching real customer data: turning a theoretical risk into a real data breach.

- Ignoring rate limits: triggering blocks or causing performance degradation.

- Failing to document approval: proceeding with a risky test based on a verbal “OK.”

- Using public exploit code without review: running unvetted code that may have unintended side effects.

9) Safe validation checklist for every finding

Before attempting to validate any exploit, run through this final gate.

| Check | Status |
|---|---|
| Is this system and method explicitly in scope and approved in writing? | ☐ |
| Have I selected the minimum necessary proof to confirm the finding? | ☐ |
| Am I using a test account and test data only? | ☐ |
| Have I confirmed the test window with the system owner? | ☐ |
| Is there a documented contact and rollback plan? | ☐ |
| Have I reviewed my actions to ensure they do not affect other users or services? | ☐ |

If any box is unchecked, pause and seek clarification. The goal is to provide clear, actionable proof of risk, not to become a risk yourself.

10) Approval and evidence log template for high-risk validations

When teams run multiple findings in parallel, approvals and evidence can become fragmented. Use a single validation log so legal, technical, and reporting decisions stay traceable.

| Field | What to Record |
|---|---|
| Finding ID | Unique finding reference from report draft |
| Requested Validation Method | Exact method proposed (read-only, controlled change, log correlation, etc.) |
| Approval Owner | Name and role of approving authority |
| Approval Timestamp | Date/time approval was granted |
| Scope Confirmation | Explicit affected assets/endpoints/environments |
| Risk Notes | Known operational concerns before execution |
| Evidence Reference | Screenshot/log/request IDs linked to storage location |
| Outcome | Confirmed / Not Confirmed / Partial |
| Retest Required | Yes/No + owner |

This log becomes the bridge between technical validation and client-safe communication.
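
If you keep the log in a structured form, the template maps naturally onto a record type. The following is a minimal sketch, assuming one entry per validation and ISO 8601 UTC timestamps; the class name, field names, and sample values are hypothetical and follow the template fields above.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

ALLOWED_OUTCOMES = {"Confirmed", "Not Confirmed", "Partial"}


@dataclass
class ValidationLogEntry:
    finding_id: str
    requested_method: str
    approval_owner: str
    approval_timestamp: str  # ISO 8601, UTC
    scope_confirmation: str
    risk_notes: str
    evidence_reference: str
    outcome: str
    retest_required: bool
    retest_owner: str = ""

    def __post_init__(self):
        # Reject values the template does not allow.
        if self.outcome not in ALLOWED_OUTCOMES:
            raise ValueError(f"unknown outcome: {self.outcome!r}")
        if self.retest_required and not self.retest_owner:
            raise ValueError("retest_required needs a retest_owner")


# All values below are made-up sample data.
entry = ValidationLogEntry(
    finding_id="F-001",
    requested_method="read-only",
    approval_owner="J. Doe, system owner",
    approval_timestamp=datetime.now(timezone.utc).isoformat(),
    scope_confirmation="staging API, /orders endpoint",
    risk_notes="none known",
    evidence_reference="evidence/F-001/request-01.txt",
    outcome="Confirmed",
    retest_required=True,
    retest_owner="Security QA",
)
print(json.dumps(asdict(entry), indent=2))
```

Validating at construction time means an entry with a missing retest owner or an off-template outcome never reaches the shared log.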

11) Retest playbook after remediation

Retest quality is part of validation quality. A finding is not closed because a patch was deployed; it is closed when safe evidence shows the risky behavior is no longer reproducible.

Retest sequence

- Confirm remediation scope and implementation owner.

- Re-run the original safe validation pattern first.

- Verify the expected denial or control behavior under the same role and context.

- Check for side effects in logs and user workflow.

- Update finding status with timestamped retest evidence.

Retest status model

| Status | Meaning |
|---|---|
| Passed | Original risk behavior no longer reproducible |
| Partial | Some controls fixed, residual exposure remains |
| Failed | Original risk behavior still reproducible |
| Deferred | Retest blocked by change window/scope constraints |
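
The status model can be expressed as a small decision function so that every retest is classified the same way. This is a sketch under the assumption that the tester records three yes/no observations per retest; the parameter names and `classify_retest` are hypothetical.

```python
from enum import Enum


class RetestStatus(Enum):
    PASSED = "Passed"
    PARTIAL = "Partial"
    FAILED = "Failed"
    DEFERRED = "Deferred"


def classify_retest(retest_ran, original_reproducible, residual_exposure):
    """Map three retest observations onto the status model above."""
    if not retest_ran:
        return RetestStatus.DEFERRED  # blocked by change window or scope
    if original_reproducible:
        return RetestStatus.FAILED    # original behavior still reproducible
    if residual_exposure:
        return RetestStatus.PARTIAL   # some controls fixed, exposure remains
    return RetestStatus.PASSED


print(classify_retest(True, False, True).value)
```

Checking "deferred" first avoids recording a pass for a retest that never actually ran.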

Safe exploit validation is strongest when approval records, evidence quality, and retest outcomes are all documented as one continuous workflow.

Operational controls for safe validation teams

| Workstream | Owner | First Action | Validation Signal |
|---|---|---|---|
| Approval governance | Engagement lead | Standardize written validation approval format | All risky tests tied to explicit approval record |
| Safety boundaries | Technical lead | Define prohibited actions by environment class | Zero service disruption from validation tests |
| Evidence discipline | Tester + reviewer | Enforce minimum proof artifact checklist | Reproducible evidence in all major findings |
| Retest control | Security QA | Link retest outcomes to original proof IDs | Closure decisions are traceable and defensible |

Team guardrails

- Never escalate proof method without explicit updated approval

- Always prefer read-only or minimally invasive validation first

- Pause testing immediately when unexpected instability appears

- Log every high-risk action with timestamp and operator identity
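
The last guardrail is easy to automate. Below is a minimal sketch that builds one JSON log line per high-risk action and refuses to produce a record without an approval reference, which also supports the first guardrail. The function name, field names, and sample values are assumptions for illustration.

```python
import json
from datetime import datetime, timezone


def high_risk_action_record(operator, action, approval_id, now=None):
    """Build a JSON log line for a high-risk action.

    Raises ValueError if the operator or the approval reference is missing,
    so an unapproved action cannot be logged as if it were routine.
    """
    if not operator or not approval_id:
        raise ValueError("operator and approval_id are both required")
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return json.dumps(
        {
            "timestamp": timestamp,
            "operator": operator,
            "action": action,
            "approval_id": approval_id,
        },
        sort_keys=True,
    )


# Hypothetical sample values.
line = high_risk_action_record(
    operator="tester-01",
    action="controlled state change on test record",
    approval_id="APP-0042",
)
print(line)
```

Append each line to whatever append-only store your team already uses for evidence, so the record cannot be silently edited later.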

Client-facing validation communication template

| Communication Point | Recommended Content |
|---|---|
| Validation objective | What is being confirmed and why |
| Safety method | How impact is minimized |
| Operational risk note | What can go wrong and how it is mitigated |
| Evidence expectation | What artifacts will be captured |
| Stop condition | When testing is halted and escalated |

Why this helps

- Reduces misunderstanding about test intent

- Aligns legal, technical, and business stakeholders

- Prevents scope drift under incident-like pressure

90-day safe-validation improvement plan

Days 1–30

- Deploy standard approval + evidence log templates

- Run internal review on recent validation quality

- Define environment-based prohibited action matrix

Days 31–60

- Improve retest tracking and closure state consistency

- Conduct tabletop for high-risk validation decision paths

- Align validation artifacts with reporting templates

Days 61–90

- Audit approval-to-evidence traceability across engagements

- Publish recurring validation mistakes and control updates

- Measure reduction in validation-related operational risk events

| KPI | Why It Matters |
|---|---|
| Validations with complete approval records | Measures legal/ethical control maturity |
| Evidence reproducibility rate | Indicates reporting strength |
| Retest closure quality score | Confirms remediation validation quality |
| Operational incident count during tests | Tracks safety-first execution effectiveness |
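
Three of these KPIs can be computed directly from per-validation records. The sketch below assumes each record carries three boolean fields with the hypothetical names shown; the retest closure quality score is left out because the text does not define how it is scored.

```python
def validation_kpis(records):
    """Compute simple KPI values from per-validation records.

    Each record is a dict with boolean fields (names are assumptions):
    approval_recorded, reproduced_by_second_tester, caused_incident.
    """
    total = len(records)
    if total == 0:
        return {
            "approval_complete_rate": None,
            "reproducibility_rate": None,
            "incident_count": 0,
        }
    return {
        "approval_complete_rate": sum(r["approval_recorded"] for r in records) / total,
        "reproducibility_rate": sum(r["reproduced_by_second_tester"] for r in records) / total,
        "incident_count": sum(r["caused_incident"] for r in records),
    }


# Made-up sample data for illustration only.
sample = [
    {"approval_recorded": True, "reproduced_by_second_tester": True, "caused_incident": False},
    {"approval_recorded": True, "reproduced_by_second_tester": False, "caused_incident": False},
    {"approval_recorded": True, "reproduced_by_second_tester": True, "caused_incident": True},
    {"approval_recorded": False, "reproduced_by_second_tester": False, "caused_incident": False},
]
print(validation_kpis(sample))
```

Tracking the rates per engagement, rather than only in aggregate, makes it easier to see which teams or environments need attention first.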

Safe exploit validation matures fastest when teams operationalize approvals, evidence quality, and retest governance as one integrated practice.

Validation guardrails toolkit (for professional engagements)

If you want validation to be both credible and safe, treat it like a controlled change with explicit decision gates.

1) Approval checklist (minimum bar)

- Written authorization: scope + method + time window are documented.

- System owner present: a named contact is available during the test.

- Rollback ready: you know what “undo” looks like before any step.

- Data boundaries set: what you may access, store, and include in the report is specified.

- Stop conditions defined: when you will halt validation and escalate.

2) Evidence rubric (what “good” looks like)

| Evidence Element | Standard |
|---|---|
| Reproducibility | Another tester can reproduce from your notes in < 15 minutes |
| Minimal impact | Proof does not require destructive actions or cross-tenant access |
| Traceability | Artifact references map to exact request/response/log entries |
| Context | Includes the role/account used and the exact target component |
| Remediation utility | Clear engineering action is possible without extra back-and-forth |

3) Safe validation decision matrix

Use this as the “last check” before executing a validation step.

| Question | If the answer is “no”… |
|---|---|
| Can the proof be produced read-only? | Switch to configuration/code/log evidence instead |
| Can the action be isolated to a test account and test data? | Redesign validation or stop |
| Would this action be acceptable during peak hours? | Move to an approved window or stop |
| Can you explain the action to a system owner in one sentence? | You likely don’t have sufficient clarity |

| Can you explain the action to a system owner in one sentence? | You likely don’t have sufficient clarity |

4) Retest closure standard

- Retest the same path used to validate (same endpoint, same role boundaries).

- Capture “before/after” evidence with timestamps.

- Record the control that changed (authorization check, input handling, policy reduction).

- Close only when the issue is no longer exploitable and the fix does not break legitimate functionality.

This toolkit keeps exploit validation professional-grade: explicit approvals, minimal-impact proof, and defensible closure.