Burp Suite testing notes become valuable when they stay focused on repeatable process, clean evidence, and scope discipline. In real assessments, the difference between useful findings and noise usually comes from how the project is prepared before any request replay starts.

These notes are for authorized testing only: approved client engagements, internal assessments, and controlled lab environments.

Burp Suite Testing Notes

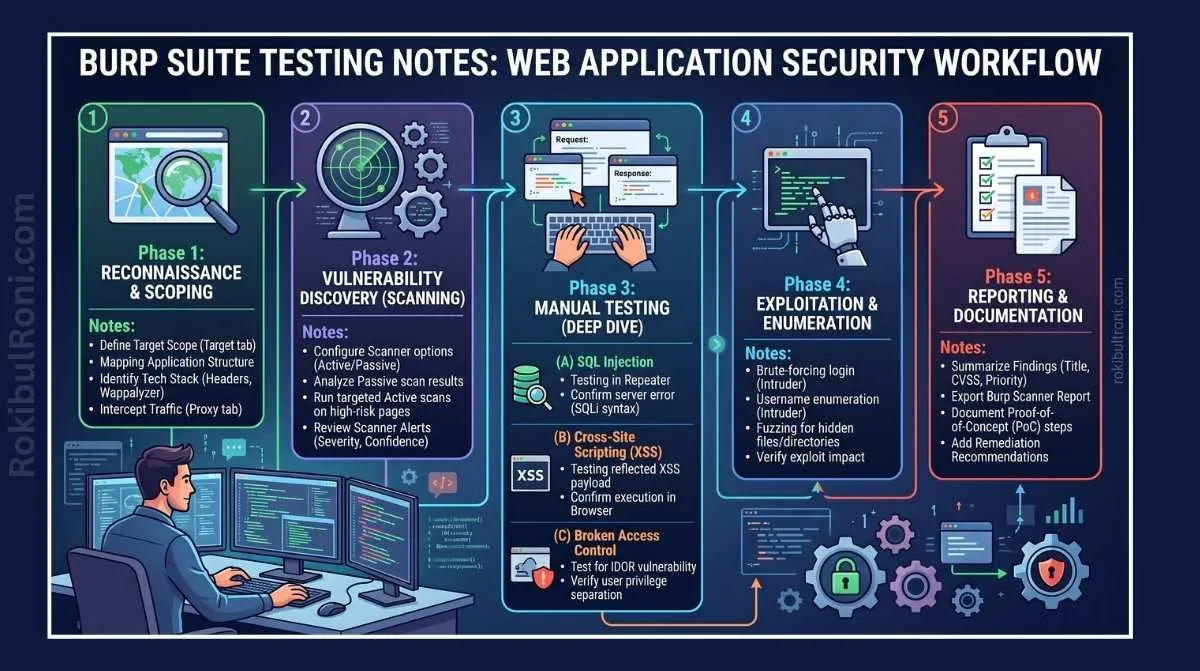

Use this as a practical workflow that keeps testing methodical and report-ready.

1) Project setup that prevents chaos later

A rushed setup leads to mixed scope traffic, weak notes, and findings that cannot be reproduced.

Clean setup checklist

- Dedicated browser profile for the engagement only

- Burp proxy configured and verified with test traffic

- Scope rules defined before crawling or interception

- Target map aligned to approved domains and paths

- Site map cleanup plan (label in-scope, out-of-scope, unknown)

- Notes workspace prepared (per endpoint and per role)

- Issue tracking format decided before active validation

Setup verification table

| Setup Area | What to Confirm | Evidence to Keep |
|---|---|---|
| Browser Profile | No cached credentials from unrelated sessions | Screenshot of clean profile configuration |
| Proxy Routing | Requests are consistently captured | Proxy history sample from known endpoint |
| Scope Rules | Include/exclude rules match authorization document | Scope rule export or screenshot |
| Target Tree Hygiene | In-scope assets clearly tagged | Annotated target tree snapshot |
| Note Structure | Endpoint, role, and test objective fields defined | Sample note template used by tester |
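As a cross-check outside Burp, URLs exported from proxy history can be labeled with the same three buckets used in the checklist (in-scope, out-of-scope, unknown). The sketch below is a minimal illustration: the hosts, paths, and the `(host, path prefix)` rule format are hypothetical and are not Burp's own scope configuration format.

```python
from urllib.parse import urlsplit

# Hypothetical rules as (host, path prefix) pairs; replace with the
# targets listed in the authorization document.
INCLUDE = [("app.example.test", "/"), ("api.example.test", "/v1/")]
EXCLUDE = [("app.example.test", "/admin/billing/")]


def _matches(url, rules):
    """True if the URL's host and path prefix match any rule."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    path = parts.path or "/"
    return any(host == h and path.startswith(p) for h, p in rules)


def classify(url):
    """Return 'in-scope', 'out-of-scope', or 'unknown' for a URL."""
    if _matches(url, EXCLUDE):  # exclusions win over inclusions
        return "out-of-scope"
    if _matches(url, INCLUDE):
        return "in-scope"
    return "unknown"
```

Anything that comes back as `unknown` is a prompt to check the authorization document before testing, not a default permission.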

2) Manual testing areas to cover in every web app assessment

Automation can help with coverage, but the high-value issues usually come from manual reasoning.

Authentication and session management

- Validate login, logout, and session invalidation behavior

- Check token/cookie handling consistency across app states

- Review account recovery and session renewal flows

- Confirm role transitions do not inherit stale privileges

Access control checks

- Compare the same action across low-privilege, mid-privilege, and privileged roles

- Validate server-side enforcement on sensitive routes

- Review object-level access boundaries in user-owned resources

- Check function-level restrictions for admin capabilities
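The role comparison above can be kept honest with a small table of recorded observations. This is a sketch under stated assumptions: the endpoints, roles, and the `allowed_by_design` flag are hypothetical values a tester would fill in by hand from Repeater results, and a 2xx status is only a signal to review the response manually, not proof of a finding.

```python
# Hypothetical observations: (method, path, role, status_code, allowed_by_design)
OBSERVATIONS = [
    ("POST", "/account/role-update", "admin", 200, True),
    ("POST", "/account/role-update", "user", 200, False),
    ("GET", "/orders/1001", "user", 200, True),
    ("GET", "/orders/1001", "guest", 403, False),
]


def flag_authz_gaps(observations):
    """Return (method, path, role) where a role that should be denied got a 2xx."""
    gaps = []
    for method, path, role, status, allowed in observations:
        if not allowed and 200 <= status < 300:
            gaps.append((method, path, role))
    return gaps
```

Each flagged tuple maps directly to the endpoint, role, and business action that a note should record.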

Input and business logic validation

- Observe input handling and response normalization behavior

- Check server-side validation consistency across similar endpoints

- Review workflow constraints (approval steps, state transitions)

- Validate API requests initiated by web UI behavior

File upload and error handling

- Verify type, size, and metadata handling on upload flows

- Confirm rejection behavior is predictable and non-verbose

- Check that error responses avoid stack traces/internal details

Keep each test linked to a specific endpoint, role, and business action. That one habit saves report-writing time later.

3) Safe usage notes for core Burp features

Use Burp tools as instruments for controlled validation, not for high-volume, untargeted request spraying.

| Burp Feature | Testing Objective | Evidence Captured |
|---|---|---|
| Proxy | Observe real application traffic and flow logic | Baseline request/response history and flow sequence notes |
| Repeater | Re-run and compare specific requests safely | Before/after request comparisons with role context |
| Intruder (high-level controlled use) | Validate request pattern handling under approved limits | Parameter variation results and response pattern summary |
| Decoder | Inspect and normalize encoded values for analysis | Decoding notes tied to request ID |
| Comparer | Identify meaningful differences between responses | Side-by-side diff snapshots |
| Logger / HTTP history | Maintain reproducible timeline of tested actions | Timestamped request IDs mapped to findings |
| Organizer (or note workflow equivalent) | Keep test objectives, evidence, and outcomes aligned | Structured issue notes per endpoint and control area |

Safe operating guardrails

- Stay inside approved scope and test windows

- Keep request rates conservative unless explicitly approved

- Avoid destructive interactions with production data

- Stop and notify contact points if instability appears
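For any scripted check run alongside Burp, the "conservative request rate" guardrail can be enforced in code instead of relying on discipline. The following is a minimal sketch of a fixed-interval throttle. The class name and the rate used in the usage note are illustrative assumptions, and the approved limit should come from the rules of engagement.

```python
import time


class Throttle:
    """Enforce a minimum delay between consecutive requests."""

    def __init__(self, max_per_second, clock=time.monotonic, sleep=time.sleep):
        if max_per_second <= 0:
            raise ValueError("max_per_second must be positive")
        self.min_interval = 1.0 / max_per_second
        self._clock = clock  # injectable so the logic can be tested without waiting
        self._sleep = sleep
        self._last = None

    def wait(self):
        """Block until the next request is allowed; return seconds slept."""
        now = self._clock()
        delay = 0.0
        if self._last is not None:
            delay = max(0.0, self.min_interval - (now - self._last))
            if delay > 0:
                self._sleep(delay)
        self._last = self._clock()
        return delay
```

Calling `Throttle(2).wait()` before each request would cap a script at roughly two requests per second.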

4) Evidence capture without damaging systems

Most report quality issues are evidence quality issues.

Evidence collection checklist

- Capture request and response pairs with timestamps

- Record user role and environment for every key test

- Redact secrets, personal data, and sensitive identifiers

- Keep screenshots readable but minimal (focus on proof)

- Tie each evidence item to a finding ID or note reference

- Keep raw and summarized evidence separate

Evidence quality table

| Evidence Type | Good Practice | Weak Practice |
|---|---|---|
| Request/Response Proof | Includes method, endpoint, role, timestamp, outcome | Missing role context or partial response only |
| Screenshots | Highlights relevant sections with redaction | Full-screen clutter with sensitive data exposed |
| Notes | States objective, action, result, and interpretation | Vague one-line comments without context |
| Timeline | Sequence of test steps is reproducible | Events are out of order and cannot be replayed |
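Redaction is easier to apply consistently when it is scripted before evidence is saved. The sketch below masks the values of a few sensitive headers in a raw HTTP message copied out of Burp. The header list is an assumption to extend per engagement, and secrets in bodies or URLs would still need separate handling.

```python
import re

# Header names treated as sensitive here are an assumption; extend per engagement.
SENSITIVE_HEADERS = ("authorization", "cookie", "set-cookie", "x-api-key")
_HEADER_RE = re.compile(
    r"^(%s):[^\r\n]*" % "|".join(re.escape(h) for h in SENSITIVE_HEADERS),
    re.IGNORECASE | re.MULTILINE,
)


def redact_headers(raw_http):
    """Replace the values of sensitive headers with a fixed marker."""
    return _HEADER_RE.sub(lambda m: f"{m.group(1)}: [REDACTED]", raw_http)
```

Keeping the unredacted original in the raw evidence folder and the redacted copy in the report folder preserves the raw/summarized separation from the checklist.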

5) Combining Burp with other tools

Burp is strongest when connected to other validation and context tools.

| Tool | Why Pair It with Burp | Practical Output |
|---|---|---|
| OWASP ZAP | Additional passive checks and alternate parser behavior | Secondary validation signals for manual review |
| Browser DevTools | Frontend logic and API call tracing | Better endpoint discovery and request context |
| Postman | Structured API collections across roles/environments | Cleaner role-based API verification sets |
| Nmap (authorized support scope) | Service-level context around target infrastructure | Confirmed exposed service baseline for test planning |

Workflow pairing order

- Use browser + Burp Proxy to map real user flows.

- Use Repeater for focused validation of observed requests.

- Use Postman for repeatable multi-role API checks.

- Use ZAP as supplementary signal, then verify manually.

- Use authorized Nmap outputs to refine exposure context.

6) Turning observations into CVSS-backed findings

A finding is useful only when it is technically clear and business-relevant.

Practical finding structure

- Title that describes behavior, not only vulnerability class

- Affected endpoint/path and request method

- Tested role(s) and prerequisite conditions

- Severity with CVSS vector and score

- Proof summary using non-destructive evidence

- Business impact in plain language

- Remediation direction with ownership suggestion

- Retest status and date

CVSS note discipline

- Keep base score technical and reproducible.

- Add business context separately (asset criticality, data class, exposure).

- If assumptions exist, state them clearly in the finding.
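To keep a base score reproducible, it helps to recompute it from the vector string rather than copying a number from a tool. The notes above do not name a CVSS version, so the sketch below assumes CVSS v3.1 and uses the base metric weights and rounding rule from the FIRST v3.1 specification. It handles base metrics only.

```python
import math

# CVSS v3.1 base metric weights (assumed version; see the FIRST specification).
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
PR_CHANGED = {"N": 0.85, "L": 0.68, "H": 0.5}


def roundup(value):
    """Round up to one decimal place using the v3.1 integer-based rule."""
    scaled = int(round(value * 100000))
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector):
    """Compute the base score for a 'CVSS:3.1/AV:.../...' vector string."""
    prefix, _, rest = vector.partition("/")
    if prefix != "CVSS:3.1":
        raise ValueError("only CVSS:3.1 vectors are handled in this sketch")
    m = dict(part.split(":", 1) for part in rest.split("/"))
    changed = m["S"] == "C"
    pr = (PR_CHANGED if changed else PR_UNCHANGED)[m["PR"]]
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    exploitability = 8.22 * AV[m["AV"]] * AC[m["AC"]] * pr * UI[m["UI"]]
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if changed:
        total *= 1.08
    return roundup(min(total, 10))
```

Storing the vector string next to the computed score in the finding lets a reviewer rerun the calculation and see exactly which metric choices drove the severity.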

Sample finding format table

| Field | Example Format |
|---|---|
| Title | "Privilege Check Missing on Account Management Action" |
| Endpoint | POST /account/role-update |
| Severity | Medium/High with documented vector |
| Proof Summary | Controlled role test shows unauthorized action path |
| Business Impact | Unauthorized workflow changes may affect account integrity |
| Remediation | Enforce server-side role check before state-changing logic |
| Retest | Pending / Passed / Failed with date |

7) Common mistakes in Burp-driven assessments

- Running noisy automated scans without first defining scope boundaries

- Keeping one mixed project file across multiple targets and environments

- Testing only one user role and assuming access control is complete

- Missing API requests because browser and Burp notes are not correlated

- Collecting screenshots without request/response IDs

- Writing findings directly from tool output without manual validation

- Ignoring rate limits and operational constraints during live testing

- Delaying note-taking until the end of the engagement

8) Field-ready checklist for each engagement day

| Checkpoint | What “Good” Looks Like | Done |
|---|---|---|
| Scope Integrity | Only authorized hosts/routes appear in current project | ☐ |
| Role Coverage | At least two roles tested on critical workflows | ☐ |
| Evidence Hygiene | Every major observation has request ID + timestamp | ☐ |
| Findings Drafting | Candidate findings include impact + remediation direction | ☐ |
| Coordination | Monitoring/contact channel updated for test window | ☐ |
| Retest Readiness | Fix validation plan documented before handoff | ☐ |

Burp delivers the most value when it supports disciplined thinking: clean scope control, controlled validation, clear evidence, and reporting that engineers can act on immediately.

9) Burp note taxonomy for faster reporting

One of the biggest quality upgrades in manual testing is a note system that mirrors report structure.

Practical note tags

- AUTH: authentication/session behavior observations

- AUTHZ: access control and role boundary findings

- INPUT: validation and parser behavior notes

- BL: business-logic flow issues

- API: API-specific request/response behavior

- EVID: evidence references ready for report inclusion

Note-to-report mapping table

| Note Tag | Report Section Target |
|---|---|
| AUTH / AUTHZ | Finding technical description + impact |
| INPUT / BL | Root-cause and remediation guidance |
| API | Affected endpoint matrix |
| EVID | Evidence appendix and retest section |

This keeps report drafting from becoming a separate, slow post-engagement task.
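If notes are kept as plain `TAG: text` lines, the mapping from tags to report sections can be applied mechanically. The sketch below assumes that one-line note format, which is an illustration and not a Burp feature, and groups notes under the section names from the mapping table.

```python
import re

# Tag-to-section mapping taken from the note-to-report table.
REPORT_SECTION = {
    "AUTH": "Finding technical description + impact",
    "AUTHZ": "Finding technical description + impact",
    "INPUT": "Root-cause and remediation guidance",
    "BL": "Root-cause and remediation guidance",
    "API": "Affected endpoint matrix",
    "EVID": "Evidence appendix and retest section",
}
_TAG_RE = re.compile(r"^\s*([A-Z]+):\s*(.+)$")


def group_notes(lines):
    """Group 'TAG: text' note lines by their target report section."""
    grouped = {}
    for line in lines:
        match = _TAG_RE.match(line)
        if not match or match.group(1) not in REPORT_SECTION:
            # Keep unrecognized lines visible instead of dropping them.
            grouped.setdefault("Untagged", []).append(line.strip())
            continue
        tag, text = match.groups()
        grouped.setdefault(REPORT_SECTION[tag], []).append(f"[{tag}] {text.strip()}")
    return grouped
```

A non-empty `Untagged` bucket at the end of the day is a quick indicator of notes that still need triage.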

10) Retest coordination checklist for Burp projects

Retest work is usually where teams lose evidence continuity. Keep original and retest artifacts linked.

| Retest Step | Required Artifact |
|---|---|
| Original finding reference | Finding ID + original request ID |
| Fix confirmation | Change owner and deployment window |
| Revalidation request | Same endpoint/method/role context |
| Outcome proof | Updated response behavior + timestamp |
| Closure note | Passed/partial/failed with rationale |

When Burp project structure, notes, and retest artifacts stay aligned, final reporting quality improves without extra tooling complexity.

Operational worksheet for Burp-driven engagements

| Workstream | Owner | First Action | Validation Signal |
|---|---|---|---|
| Scope hygiene | Tester lead | Lock include/exclude rules before active testing | No off-scope requests in final project history |
| Note structure | Engagement tester | Apply consistent tag taxonomy and IDs | Findings map cleanly to note references |
| Evidence quality | QA reviewer | Enforce request/response + timestamp requirements | Every high-risk issue has reproducible artifacts |
| Coordination | Project manager | Notify SOC/ops before high-volume tests | Reduced false incident escalations |
| Retest workflow | Security lead | Link fix tickets to Burp request IDs | Closure decisions backed by retest artifacts |

Daily execution checklist

- Confirm project scope before each testing block

- Record role context for every significant observation

- Label potential findings early, not only during report writing

- Capture clean evidence while testing, not later from memory

- Sync high-risk observations with owners the same day

Evidence and reporting handoff bundle

| Artifact | Minimum Content | Consumer |
|---|---|---|
| Burp project snapshot | Scoped history with labeled key requests | Pentest QA + engineering |
| Finding worksheet | Title, endpoint, role, impact, remediation notes | Report writers + technical owners |
| Retest set | Original and updated responses with status notes | Security governance |
| Lessons log | Repeated testing gaps and process improvements | Team lead + practice manager |

Handoff quality checks

- Can findings be reproduced from saved request IDs alone?

- Are remediation notes tied to concrete control points?

- Are retest outcomes linked to exact fix context?
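These checks can be partly automated if the finding worksheet is kept as structured data. The sketch below assumes each finding is a dictionary. The field names are hypothetical and are derived from the worksheet's minimum content plus a saved request ID.

```python
# Hypothetical field names based on the finding worksheet's minimum content.
REQUIRED_FIELDS = ("title", "endpoint", "role", "impact", "remediation", "request_id")


def missing_fields(finding):
    """Return the required fields that are absent or blank in a finding dict."""
    missing = []
    for field in REQUIRED_FIELDS:
        value = finding.get(field)
        if value is None or not str(value).strip():
            missing.append(field)
    return missing
```

An empty result does not prove a finding is reproducible, but a non-empty one shows the handoff is not ready.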

90-day Burp workflow improvement plan

Days 1–30

- Standardize project setup template and note taxonomy

- Run internal QA review on one full engagement dataset

- Fix recurring evidence quality issues

Days 31–60

- Add role-based testing coverage targets by app type

- Improve API capture correlation between browser and Burp

- Reduce report drafting time through stronger note mapping

Days 61–90

- Operationalize retest pack standards across all engagements

- Track issue recurrence categories and testing blind spots

- Publish playbook updates based on observed patterns

| Metric | Why It Matters |
|---|---|
| Reproducible findings ratio | Indicates report-ready evidence quality |
| Retest closure cycle time | Reflects end-to-end engagement efficiency |
| Scope violations per engagement | Measures testing discipline |
| Report rewrite requests | Signals communication and structure quality |

Disciplined Burp usage becomes a force multiplier when execution notes, evidence, and retest decisions follow one consistent operating model.

Burp engagement notes pack (professional evidence standards)

If you want your Burp work to translate cleanly into reports and retests, standardize your notes and artifacts.

Folder structure that scales

- 00-scope-roes/ (approved targets, constraints, test windows)

- 01-session-notes/ (daily log, hypotheses, decisions)

- 02-evidence/ (per-finding: requests, responses, screenshots)

- 03-retests/ (before/after proof, dates, versions)
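A folder layout like this is easy to standardize with a short setup script. The sketch below creates the four folders under an engagement root chosen by the tester. The function name is illustrative.

```python
from pathlib import Path

# Folder names taken from the structure listed above.
FOLDERS = ["00-scope-roes", "01-session-notes", "02-evidence", "03-retests"]


def create_engagement_tree(root):
    """Create the engagement folders under root; safe to re-run."""
    root = Path(root)
    created = []
    for name in FOLDERS:
        path = root / name
        path.mkdir(parents=True, exist_ok=True)  # no error if it already exists
        created.append(path)
    return created
```

Running it at project kickoff means every engagement starts with the same structure, which makes later QA review faster.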

Naming conventions

| Artifact | Example | Why |
|---|---|---|
| Request/response | FND-03_idor_getOrder.req.txt | Traceability in report and retest |
| Screenshot | FND-03_browser_proof.png | Immediate human verification |
| Export | 2026-02-05_project.burp | Reproducibility and handoff |
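Naming conventions hold up better when names are generated and validated rather than typed by hand. The sketch below builds and parses per-finding artifact names. The pattern is inferred from the two `FND-03_...` examples in the table, so the exact field layout (`label`, `detail`, extension) is an assumption.

```python
import re

# Pattern inferred from the table's examples; the field layout is an assumption.
_NAME_RE = re.compile(r"^FND-(\d{2,})_([A-Za-z0-9]+)_([A-Za-z0-9]+)\.([A-Za-z0-9.]+)$")


def artifact_name(finding_no, label, detail, ext):
    """Build a name such as 'FND-03_idor_getOrder.req.txt'."""
    name = f"FND-{finding_no:02d}_{label}_{detail}.{ext}"
    if not _NAME_RE.match(name):
        raise ValueError(f"invalid artifact name: {name}")
    return name


def finding_id(filename):
    """Return 'FND-NN' for a well-formed artifact name, else None."""
    match = _NAME_RE.match(filename)
    return f"FND-{match.group(1)}" if match else None
```

`finding_id` can be run over the evidence folder to list files that do not trace back to any finding.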

Evidence checklist per finding

- Exact URL/path and environment.

- Role/account used (test accounts only where possible).

- The minimal request that reproduces the issue.

- The response fields that prove impact (redacted if sensitive).

- “Expected vs observed” statement in one line.

Retest protocol

- Re-run the exact request used in proof.

- Capture before/after responses and include status codes.

- Note whether the fix introduces auth breaks or unexpected behavior.
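The protocol above can be captured as a small comparison between the original proof and the retest capture. This is a sketch with hypothetical names and a simplified decision rule: the `Capture` fields and the mapping to passed/partial/failed are assumptions, and a real closure decision still needs the tester's rationale.

```python
from dataclasses import dataclass


@dataclass
class Capture:
    request: str       # exact raw request text used as proof
    status: int        # HTTP status code observed
    body_marker: bool  # True if the response still contains the proof-of-impact field


def retest_outcome(original, retest):
    """Classify a retest as 'passed', 'partial', 'failed', or 'invalid'."""
    if original.request != retest.request:
        return "invalid"  # not the exact request used in the original proof
    if retest.body_marker:
        return "failed"   # the impact is still observable
    if 500 <= retest.status < 600:
        return "partial"  # impact gone, but the fix may have introduced an error
    return "passed"
```

Recording the returned label next to both status codes gives the closure note its before/after evidence in one place.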

This keeps Burp outputs report-ready: consistent artifacts, strong traceability, and clean retest closure.