Phishing detection is not only about model accuracy. In real SOC operations, analysts need to understand why an email was flagged so they can decide quickly, defend decisions, and improve detection quality over time.

Random Forest works well as a strong, interpretable baseline for this problem. It is often easier to explain than deeper black-box models, while still handling mixed feature types and non-linear patterns effectively.

Interpretable Random Forest for phishing detection

Use this framework to build explainable phishing detection that supports analysts instead of replacing them.

1) Why explainability matters in phishing detection

- SOC teams need evidence-backed triage decisions, not opaque scores

- Security operations must justify blocking or quarantining messages

- False positives affect business productivity and trust in tooling

- Analysts need feature-level context to improve playbooks and user awareness

- Explainable detections are easier to tune over time

High-performing but non-interpretable models often fail operationally when teams cannot act confidently on their output.

2) Why Random Forest is a practical baseline

Random Forest is a good fit for early-to-mid maturity phishing detection programs.

Practical strengths

- Handles structured, behavioral, and linguistic features together

- More robust to noisy feature sets than many single-model approaches

- Provides feature importance signals for analyst interpretation

- Supports fast iteration with controlled complexity

- Works well with Python + scikit-learn pipelines

Practical trade-offs

- Impurity-based feature importance can be biased by correlated variables and high-cardinality features

- Probability outputs may require calibration for operational thresholds

- Model behavior still needs monitoring for drift and campaign shifts

3) Feature families that matter in real email triage

Feature engineering quality usually matters more than model choice in phishing use cases.

Feature-family table

| Feature Family | Example Signal | Security Meaning | Analyst Use |
|---|---|---|---|
| Sender Behavior | Sudden volume spike from sender/domain | Potential compromised or spoofed sender workflow | Compare with historical sender baseline |
| Header Anomalies | Mismatch across envelope/header identity fields | Potential sender trust inconsistency | Validate sender authenticity indicators quickly |
| URL Patterns | High URL count, suspicious domain patterns, unusual redirects | Link-based phishing lure behavior | Prioritize URL detonation/sandbox checks |
| Urgency Language | “Immediate action,” “account suspended,” deadline pressure | Social pressure pattern in phishing lures | Support triage confidence for social-engineering intent |
| Brand Impersonation | Brand keywords with non-brand sender context | Likely impersonation attempt | Trigger brand abuse and takedown workflow |
| Reply-To Mismatch | Reply target differs from claimed sender identity | Potential response-hijack behavior | Escalate to spoofing and abuse review |
| Attachment Metadata | Unexpected executable/macro-like or unusual archive patterns | Potential malware delivery path | Trigger attachment sandboxing and endpoint watch |
| Lexical Features | Character-level anomalies, uncommon token distributions | Possible template-driven or obfuscated content | Compare with known campaign language fingerprints |
| Message Intent Signals | Credential request/payment update/account verification prompts | Business process abuse intent | Route to identity and finance-focused triage playbooks |

The goal is not to over-engineer features. The goal is to map features to analyst decisions.
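To make the mapping from raw message to feature families concrete, here is a minimal standard-library sketch that turns one email into a few of the signals in the table (URL patterns, urgency language, Reply-To mismatch, brand impersonation). The term lists, feature names, and the "brand named but missing from the sender domain" heuristic are hypothetical simplifications, not a production parser.

```python
import re
from email import message_from_string
from email.utils import parseaddr
from urllib.parse import urlsplit

# Hypothetical term lists; real deployments maintain these per language and per protected brand.
URGENCY_TERMS = ("immediate action", "account suspended", "within 24 hours", "verify now")
BRAND_TERMS = ("paypal", "microsoft", "docusign")
URL_RE = re.compile(r"https?://[^\s\"'<>]+", re.IGNORECASE)


def _domain(header_value):
    """Lower-cased domain of the address in a header, or '' if there is none."""
    _, addr = parseaddr(header_value)
    return addr.rpartition("@")[2].lower()


def _plain_text(msg):
    """Concatenate decoded text/plain parts (single-part messages default to text/plain)."""
    parts = msg.walk() if msg.is_multipart() else [msg]
    chunks = []
    for part in parts:
        payload = part.get_payload(decode=True)  # None for multipart containers
        if part.get_content_type() == "text/plain" and payload is not None:
            chunks.append(payload.decode(part.get_content_charset() or "utf-8", errors="replace"))
    return " ".join(chunks)


def extract_features(raw_email):
    msg = message_from_string(raw_email)
    display_name, _ = parseaddr(msg.get("From", ""))
    text = f"{msg.get('Subject', '')} {_plain_text(msg)}".lower()
    from_domain = _domain(msg.get("From", ""))
    reply_domain = _domain(msg.get("Reply-To", "")) or from_domain  # no Reply-To: no mismatch
    urls = URL_RE.findall(text)
    brands = [b for b in BRAND_TERMS if b in f"{display_name} {text}".lower()]
    return {
        "url_count": float(len(urls)),
        "distinct_url_hosts": float(len({urlsplit(u).hostname for u in urls})),
        "urgency_hits": float(sum(term in text for term in URGENCY_TERMS)),
        "reply_to_mismatch": float(reply_domain != from_domain),
        # Brand named in the display name or text, but absent from the sender's domain.
        "brand_impersonation": float(any(b not in from_domain for b in brands)),
    }


sample = (
    "From: PayPal Support <support@paypa1-secure.example>\n"
    "Reply-To: billing@other.example\n"
    "Subject: Immediate action required\n"
    "\n"
    "Your account suspended. Verify now at https://paypa1-secure.example/login\n"
)
print(extract_features(sample))
```

Each output key lines up with one row of the table, which keeps the later per-message explanations traceable to an observable artifact in the email.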

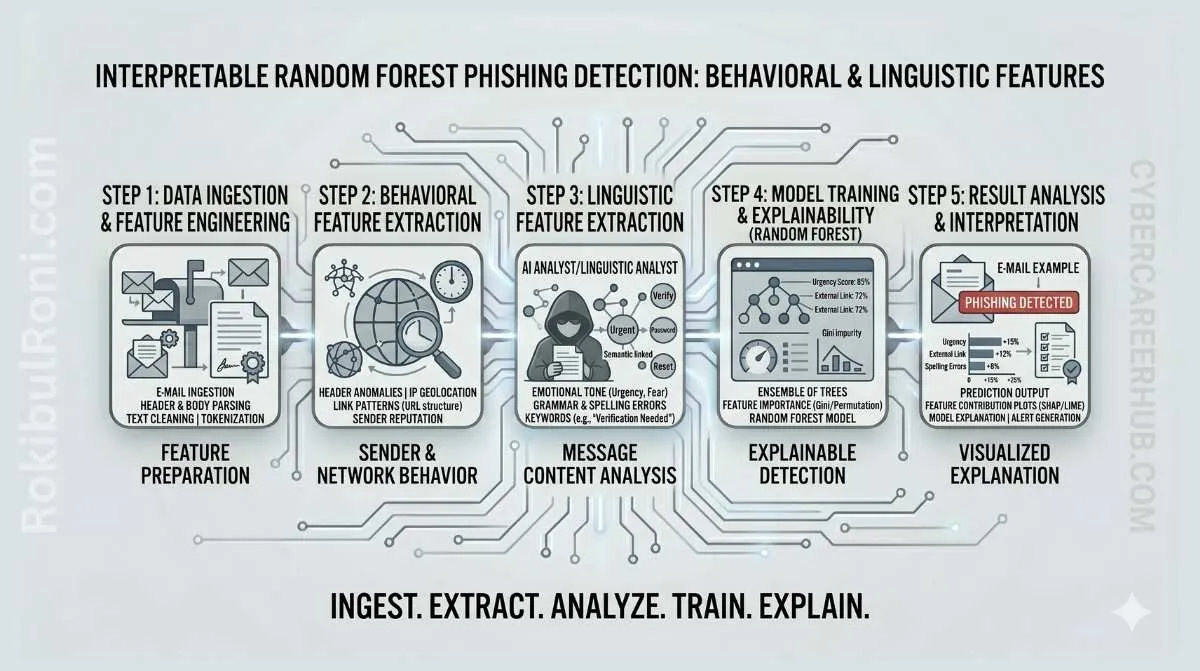

4) End-to-end workflow: data to explainable decisions

This workflow keeps model development aligned to SOC needs.

Step 1: Data preparation

- Collect labeled email samples from trusted sources

- Normalize fields (headers, body text, URLs, metadata)

- Remove or pseudonymize sensitive personal data where required

- Split data by time-aware strategy to reduce leakage risk
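A time-aware split can be as simple as a date cutoff. The sketch below is a minimal illustration with hypothetical records; the optional `gap` parameter (an assumption, not something the workflow above prescribes) drops messages just before the cutoff so near-duplicate messages from one campaign are less likely to appear on both sides.

```python
from datetime import datetime, timedelta


def time_split(records, cutoff, gap=timedelta(0)):
    """Train strictly before (cutoff - gap); evaluate on messages at or after cutoff."""
    ordered = sorted(records, key=lambda r: r["received_at"])
    train = [r for r in ordered if r["received_at"] < cutoff - gap]
    test = [r for r in ordered if r["received_at"] >= cutoff]
    return train, test


# Hypothetical labeled records (1 = phishing).
records = [
    {"id": "m1", "received_at": datetime(2024, 3, 1), "label": 0},
    {"id": "m2", "received_at": datetime(2024, 3, 20), "label": 1},
    {"id": "m3", "received_at": datetime(2024, 3, 29), "label": 1},  # inside the gap: dropped
    {"id": "m4", "received_at": datetime(2024, 4, 5), "label": 0},
]
train, test = time_split(records, cutoff=datetime(2024, 4, 1), gap=timedelta(days=7))
print([r["id"] for r in train], [r["id"] for r in test])  # ['m1', 'm2'] ['m4']
```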

Step 2: Feature engineering

- Build behavioral sender features (frequency, reputation context)

- Extract linguistic features (token patterns, urgency markers)

- Add structural email features (header consistency, link/attachment stats)

- Validate feature quality and missing-value behavior
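Behavioral sender features need the same leakage discipline as the split: each message should only "see" earlier traffic. A minimal standard-library sketch of a trailing per-domain volume feature, plus a missing-value report, is shown below; the field names (`sender_domain`, `sender_24h_volume`, `spf`, `dkim`) are hypothetical.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def add_trailing_sender_volume(messages, window=timedelta(hours=24)):
    """Add 'sender_24h_volume': earlier messages from the same sender domain inside the window.

    Only messages received before the current one are counted, so the feature
    never peeks into the future.
    """
    history = defaultdict(deque)  # sender_domain -> timestamps still inside the window
    out = []
    for msg in sorted(messages, key=lambda m: m["received_at"]):
        seen = history[msg["sender_domain"]]
        while seen and msg["received_at"] - seen[0] > window:
            seen.popleft()  # expire timestamps older than the window
        out.append({**msg, "sender_24h_volume": len(seen)})
        seen.append(msg["received_at"])
    return out


def missing_rates(rows, fields):
    """Share of rows where each field is missing (None), to review before training."""
    return {f: sum(row.get(f) is None for row in rows) / len(rows) for f in fields}


t0 = datetime(2024, 5, 1, 9, 0)
msgs = [
    {"sender_domain": "a.example", "received_at": t0},
    {"sender_domain": "a.example", "received_at": t0 + timedelta(hours=1)},
    {"sender_domain": "b.example", "received_at": t0 + timedelta(hours=2)},
    {"sender_domain": "a.example", "received_at": t0 + timedelta(hours=30)},
]
print([m["sender_24h_volume"] for m in add_trailing_sender_volume(msgs)])  # [0, 1, 0, 0]
print(missing_rates([{"spf": "pass", "dkim": None}, {"spf": None, "dkim": None}], ["spf", "dkim"]))
```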

Step 3: Model training and validation

- Train a Random Forest baseline with time-ordered cross-validation (training folds precede test folds)

- Evaluate with class-imbalance-aware metrics such as precision, recall, and PR-AUC

- Compare threshold choices for operational precision/recall trade-offs

- Log model settings and experiment metadata for reproducibility
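Step 3 can be sketched with scikit-learn. Since Step 1 recommends a time-aware split, this example uses `TimeSeriesSplit`, which assumes rows are already sorted by received time; the synthetic data from `make_classification` (about 10% positives), the hyperparameters, and the candidate thresholds are all assumptions for illustration. It requires scikit-learn.

```python
import json

from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import average_precision_score, precision_score, recall_score
from sklearn.model_selection import TimeSeriesSplit

# Synthetic stand-in for a time-ordered feature matrix, roughly 10% phishing.
X, y = make_classification(n_samples=2000, n_features=12, n_informative=6,
                           weights=[0.9, 0.1], random_state=7)

params = {"n_estimators": 200, "min_samples_leaf": 2, "class_weight": "balanced",
          "random_state": 7, "n_jobs": -1}
results = []
# Each fold trains on earlier rows and tests on the block of rows that follows them.
for fold, (train_idx, test_idx) in enumerate(TimeSeriesSplit(n_splits=4).split(X)):
    model = RandomForestClassifier(**params).fit(X[train_idx], y[train_idx])
    scores = model.predict_proba(X[test_idx])[:, 1]
    for threshold in (0.3, 0.5, 0.7):  # compare operating points, not just the 0.5 default
        preds = (scores >= threshold).astype(int)
        results.append({
            "fold": fold,
            "threshold": threshold,
            "precision": round(precision_score(y[test_idx], preds, zero_division=0), 3),
            "recall": round(recall_score(y[test_idx], preds, zero_division=0), 3),
            "pr_auc": round(average_precision_score(y[test_idx], scores), 3),
        })

# Log settings next to results so the run can be reproduced and compared later.
print(json.dumps({"model": "RandomForestClassifier", "params": params, "results": results}))
```

PR-AUC (`average_precision_score`) is threshold-free, so it is useful for comparing model versions, while the per-threshold precision and recall rows feed the operational threshold discussion.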

Step 4: Explainability and analyst interpretation

- Rank global feature importance for model transparency

- Generate per-message explanation summaries (top contributing signals)

- Map explanations to triage playbook actions
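For the per-message summaries, one simple option is an occlusion-style attribution: reset one feature at a time to a "typical benign" reference value and measure how much the phishing score drops. This is a swapped-in, deliberately simple technique, not SHAP; it ignores interactions, and when several signals are redundant it can understate each of them (tree-based SHAP from the separate `shap` package is a common alternative). The synthetic data and feature names are hypothetical; the sketch requires NumPy and scikit-learn.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

FEATURES = ["url_count", "urgency_hits", "reply_to_mismatch", "brand_impersonation", "sender_24h_volume"]

# Synthetic training data: the first four features carry signal, the last is noise.
rng = np.random.default_rng(0)
n = 600
y = rng.random(n) < 0.2
X = np.column_stack([
    rng.poisson(np.where(y, 4.0, 1.0)),
    rng.poisson(np.where(y, 2.0, 0.2)),
    rng.random(n) < np.where(y, 0.6, 0.05),
    rng.random(n) < np.where(y, 0.5, 0.02),
    rng.poisson(10.0, n),
]).astype(float)
model = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, y)

# Reference point: the feature-wise median of benign training messages.
reference = np.median(X[~y], axis=0)


def top_signals(model, x, reference, names, k=3):
    """Score drop when each feature alone is reset to the reference; top-k positive drops."""
    base = model.predict_proba(x.reshape(1, -1))[0, 1]
    variants = np.tile(x, (len(x), 1))
    idx = np.arange(len(x))
    variants[idx, idx] = reference  # row i = x with feature i replaced
    drops = base - model.predict_proba(variants)[:, 1]
    ranked = np.argsort(drops)[::-1][:k]
    return float(base), [(names[i], round(float(drops[i]), 3)) for i in ranked if drops[i] > 0]


message = np.array([5.0, 2.0, 1.0, 0.0, 10.0])
score, signals = top_signals(model, message, reference, FEATURES)
print(f"risk score {score:.2f}; top signals: {signals}")
```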

Step 5: Feedback loop and iteration

- Capture analyst overrides and false-positive reasons

- Retrain with drift-aware updates and campaign changes

- Re-evaluate thresholds by business function and risk appetite

5) Python tooling stack for research-to-operations

| Tool | Practical Role |
|---|---|
| Python | End-to-end pipeline scripting and integration |
| Pandas | Feature dataset prep, cleaning, and transformation |
| Scikit-learn | Random Forest training, validation, and baseline modeling |
| Jupyter | Exploratory analysis and explainability walkthroughs |
| Visualization libraries | Feature importance and confusion matrix interpretation |
| SOC integration layer (concept) | Model output handoff to triage queue/workflow |

Keep tooling simple and reproducible before adding advanced model orchestration layers.

6) Metrics that matter for phishing model operations

Accuracy alone is not sufficient for SOC deployment decisions.

| Metric | Why It Matters | Operational Interpretation |
|---|---|---|
| Precision | Measures false-positive pressure on analysts | Low precision increases alert fatigue and trust loss |
| Recall | Measures missed phishing risk | Low recall allows high-risk emails through |
| F1 Score | Balances precision/recall trade-off | Useful for baseline model comparison |
| False-Positive Rate | Direct analyst workload amplifier | Track by business unit and sender context |
| Confusion Matrix | Error pattern visibility | Helps tune thresholds and feature priorities |
| Analyst Acceptance Rate | Human trust and usability signal | Indicates whether explanations are actionable |
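The metrics in the table all fall out of confusion-matrix counts, and a small example shows why accuracy alone misleads on imbalanced mail. This is a minimal standard-library sketch; the counts are made up, and defining acceptance rate as "share of model-flagged messages that analysts confirmed" is one assumed reading of that metric.

```python
def ops_metrics(tp, fp, fn, tn):
    """Operational metrics from confusion-matrix counts (phishing = positive class)."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / (tp + fp + fn + tn),
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
    }


def acceptance_rate(flagged_reviews):
    """Share of model-flagged messages analysts confirmed (True = analyst agreed)."""
    return sum(flagged_reviews) / len(flagged_reviews) if flagged_reviews else 0.0


# A model that never flags anything on a 10%-phishing queue still scores 0.9 accuracy, 0.0 recall.
print(ops_metrics(tp=0, fp=0, fn=100, tn=900))
print(ops_metrics(tp=80, fp=20, fn=20, tn=880))
print(acceptance_rate([True, True, False, True]))  # 0.75
```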

Threshold governance guidance

- Start with a conservative threshold for high-risk inbox protection

- Tune thresholds by department sensitivity and tolerance for review load

- Review threshold outcomes weekly during initial deployment
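Department-specific thresholds can be derived from labeled validation scores by fixing a precision floor that reflects each team's tolerance for review load, then taking the lowest threshold that meets it (lower thresholds flag more mail, so that choice keeps recall as high as the floor allows). A minimal sketch; the department names, floors, and scores are hypothetical, and in practice each department would use its own validation slice.

```python
def pick_threshold(scored, min_precision):
    """Lowest score threshold whose precision meets the target, or None if none does.

    `scored` is a list of (model_score, label) pairs from recent labeled validation
    data, label 1 = phishing.
    """
    for t in sorted({score for score, _ in scored}):
        flagged = [label for score, label in scored if score >= t]  # never empty: t is a score
        if sum(flagged) / len(flagged) >= min_precision:
            return t
    return None


# Hypothetical precision floors: finance accepts more review load to catch more phishing.
PRECISION_FLOORS = {"finance": 0.55, "engineering": 0.7}
validation = [(0.95, 1), (0.9, 1), (0.8, 0), (0.7, 1), (0.6, 0), (0.5, 0), (0.4, 1), (0.3, 0)]
for dept, floor in PRECISION_FLOORS.items():
    print(dept, pick_threshold(validation, floor))  # finance 0.4, engineering 0.7
```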

7) Analyst-centered explainability design

Explanations should answer: “Why is this suspicious, and what should I do next?”

Practical explanation output format

| Field | Example Use |
|---|---|
| Risk Score | Prioritize triage queue ordering |
| Top 3 Contributing Signals | Explain model rationale quickly |
| Similar Historical Pattern | Provide campaign context hint |
| Confidence Band | Guide escalation urgency |
| Recommended Next Action | Link to triage playbook step |

Good explanation characteristics

- Short, consistent, and evidence-oriented

- Free of model jargon where possible

- Tied to observable message artifacts

- Mapped to concrete analyst actions
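The output format above can be produced from a score and per-feature contributions (for example, the score drops from an attribution step). In this minimal sketch the band cut-offs, analyst-facing wording, and playbook actions are hypothetical; the "Similar Historical Pattern" field is omitted because it needs a campaign lookup that is not shown here.

```python
from dataclasses import dataclass

# Hypothetical band cut-offs (checked top-down), plain-language signal text, and actions.
BANDS = [(0.9, "high"), (0.6, "medium"), (0.0, "low")]
ACTIONS = {
    "high": "Quarantine and open a phishing case",
    "medium": "Analyst review; detonate URLs and attachments",
    "low": "No action; keep for feedback sampling",
}
SIGNAL_TEXT = {
    "reply_to_mismatch": "Reply-To domain differs from the sender domain",
    "url_count": "Unusually many links for this sender",
    "urgency_hits": "Urgency phrases such as 'account suspended'",
    "brand_impersonation": "Brand named, but sender domain is not the brand's",
}


@dataclass
class Explanation:
    risk_score: float
    confidence_band: str
    top_signals: list
    recommended_action: str


def build_explanation(score, contributions, k=3):
    """Turn a score in [0, 1] plus per-feature contributions into an analyst-facing summary."""
    band = next(name for cutoff, name in BANDS if score >= cutoff)
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)[:k]
    # Keep only signals that pushed the score up, in plain language where available.
    signals = [SIGNAL_TEXT.get(name, name) for name, weight in ranked if weight > 0]
    return Explanation(round(score, 2), band, signals, ACTIONS[band])


print(build_explanation(0.93, {"reply_to_mismatch": 0.21, "url_count": 0.12,
                               "urgency_hits": 0.05, "sender_24h_volume": -0.01}))
```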

8) Converting research output into SOC workflow

Model quality matters, but operational integration determines impact.

Integration blueprint

- Deliver model output to existing case/queue system

- Attach explanation metadata with each detection

- Route high-confidence detections to faster containment path

- Collect analyst feedback tags (true phish, benign, needs review)

- Feed confirmed outcomes back into retraining pipeline
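The feedback tags translate directly into retraining labels, with "needs review" held out until an analyst decides. A minimal standard-library sketch; the record fields (`message_id`, `tag`, `model_flagged`, `reason`) are hypothetical.

```python
# "true phish" and "benign" become labels; "needs review" is deliberately left unlabeled.
TAG_TO_LABEL = {"true phish": 1, "benign": 0}


def feedback_to_training_rows(feedback):
    """Split analyst feedback into confirmed training rows and items still awaiting a decision."""
    rows, pending = [], []
    for item in feedback:
        label = TAG_TO_LABEL.get(item["tag"])
        if label is None:
            pending.append(item["message_id"])
            continue
        rows.append({
            "message_id": item["message_id"],
            "label": label,
            # An override is any case where the analyst disagreed with the model's flag.
            "analyst_override": label != int(item["model_flagged"]),
            "reason": item.get("reason", ""),
        })
    return rows, pending


feedback = [
    {"message_id": "m1", "tag": "true phish", "model_flagged": True},
    {"message_id": "m2", "tag": "benign", "model_flagged": True, "reason": "vendor invoice template"},
    {"message_id": "m3", "tag": "needs review", "model_flagged": False},
]
rows, pending = feedback_to_training_rows(feedback)
print(rows, pending)
```

Keeping the override flag and reason alongside each label makes the false-positive reasons from Step 5 queryable later.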

SOC handoff table

| Stage | Model Output | SOC Action |
|---|---|---|
| Pre-Triage | Risk score + explanation summary | Queue prioritization |
| Triage | Feature-driven rationale + message artifacts | Validate and classify |
| Escalation | Confirmed phish indicators + campaign linkage | Contain and notify users |
| Post-Case | Analyst decision and correction tags | Improve future model iterations |

9) Limitations you must document honestly

Avoid overclaiming model capabilities.

Core limitations

- Dataset quality and representativeness constraints

- Campaign drift and attacker adaptation over time

- Class imbalance and label noise in real SOC data

- Privacy constraints limiting some content analysis features

- Dependence on analyst review for ambiguous cases

Limitation-to-control mapping

| Limitation | Mitigation Pattern |
|---|---|
| Data drift | Scheduled retraining and drift monitoring |
| Label inconsistency | Analyst labeling guide + QA sampling |
| Privacy restrictions | Metadata-focused features and controlled content handling |
| Model overconfidence | Confidence banding + human review rules |
| Campaign novelty | Hybrid model + heuristics and threat intelligence (TI) enrichment |

10) Common implementation mistakes

- Optimizing only for offline benchmark score

- Ignoring analyst workflow and explanation usability

- Using static thresholds across all business contexts

- Skipping drift monitoring after initial deployment

- Treating model output as final truth without human validation

- Failing to version datasets, features, and model artifacts

Fast guardrails

- No deployment without explanation output

- No retraining without labeled quality checks

- No threshold change without precision/recall impact review

- No SOC rollout without defined escalation playbook
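The guardrails can be enforced as an automated release gate rather than a reminder. In this minimal sketch the `ReleaseCandidate` fields and the playbook identifier are hypothetical; each check mirrors one guardrail above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReleaseCandidate:
    version: str
    emits_explanations: bool
    label_qa_passed: bool
    threshold_changed: bool
    threshold_impact_reviewed: bool
    escalation_playbook: Optional[str]  # hypothetical playbook ID, None if undefined


def release_blockers(rc):
    """Return the guardrails a candidate violates; an empty list means it may proceed."""
    blockers = []
    if not rc.emits_explanations:
        blockers.append("no explanation output")
    if not rc.label_qa_passed:
        blockers.append("labeled-data quality checks not passed")
    if rc.threshold_changed and not rc.threshold_impact_reviewed:
        blockers.append("threshold change without precision/recall impact review")
    if not rc.escalation_playbook:
        blockers.append("no escalation playbook defined")
    return blockers


rc = ReleaseCandidate("rf-v2", True, True, True, False, "PB-PHISH-01")
print(release_blockers(rc))  # ['threshold change without precision/recall impact review']
```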

11) Research-to-SOC roadmap (practical)

Phase 1: Baseline research setup (Weeks 1–2)

- Build labeled dataset with feature schema

- Train first Random Forest baseline

- Produce initial feature-importance analysis

Output: baseline model card + metric snapshot

Phase 2: Explainability and analyst fit (Weeks 3–4)

- Design per-message explanation format

- Pilot with analyst review group

- Adjust features and thresholds based on feedback

Output: analyst-ready explanation template + tuning notes

Phase 3: Controlled SOC pilot (Weeks 5–6)

- Deploy in advisory mode (no auto-block)

- Measure acceptance, precision, and review time impact

- Compare model findings with existing email controls

Output: pilot effectiveness report

Phase 4: Operational hardening (Weeks 7–8)

- Integrate feedback loop and retraining schedule

- Define governance for threshold updates and model versions

- Expand coverage gradually by business segment

Output: production readiness decision pack

12) Maturity metrics for ongoing program health

| Metric | What It Signals | Desired Trend |
|---|---|---|
| Precision at operating threshold | Analyst workload efficiency | Up |
| Recall on validated phishing sets | Protective effectiveness | Up |
| Analyst acceptance of model decisions | Explainability quality | Up |
| Time to triage model-flagged messages | Operational efficiency | Down |
| Drift detection frequency | Environmental change awareness | Stable/Actionable |
| Retraining cycle completion rate | Program discipline | Up |

Interpretable phishing detection works best when model output is treated as analyst intelligence, not an autonomous authority: clear features, honest limitations, consistent feedback loops, and operations-first governance.

Model operations worksheet for SOC integration

| Workstream | Owner | First Action | Validation Signal |
|---|---|---|---|
| Data quality governance | Data/security analyst | Define label and feature quality checks | Lower noise and retraining instability |
| Explainability quality | Detection engineer | Standardize top-signal explanation format | Higher analyst trust and adoption |
| Threshold management | SOC lead | Calibrate thresholds by risk and workload | Better precision/recall operational balance |
| Feedback pipeline | Detection + SOC | Capture analyst overrides and reasons | Faster model improvement cycles |

Weekly operating checklist

- Review false positives with explanation context

- Validate drift indicators against recent email campaigns

- Track analyst acceptance of flagged messages

- Document threshold changes with rationale and impact

Model handoff and governance pack

| Artifact | Minimum Content | Consumer |
|---|---|---|
| Model card | Data window, features, metrics, limitations | Security leadership + analysts |
| Explainability template | Top contributors and recommended triage action | SOC analysts |
| Drift report | Feature/behavior shifts and confidence impact | Detection engineers |
| Retraining log | Version changes and outcome comparison | Governance and audit stakeholders |

Quality checks

- Are explanations actionable for real analyst workflows?

- Are model updates tied to measurable performance changes?

- Are limitations communicated clearly to decision-makers?

90-day research-to-operations cadence

Days 1–30

- Stabilize dataset schema and labeling standards

- Baseline model explanations with analyst feedback

- Establish initial model governance metrics

Days 31–60

- Tune threshold policy by business unit/use-case risk

- Improve drift monitoring and retraining triggers

- Integrate model outputs with SOC triage queue workflows

Days 61–90

- Run operational review of precision/recall and analyst acceptance

- Refine feature set from campaign behavior changes

- Publish next-cycle roadmap for model and process improvements

| KPI | Why It Matters |
|---|---|
| Analyst acceptance rate | Indicates explainability and trust quality |
| False-positive trend | Measures triage burden impact |
| Drift detection turnaround | Reflects model resilience |
| Retraining effectiveness delta | Confirms updates provide real gains |
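A retraining effectiveness delta only means something if both model versions are scored on the same recent labeled holdout. This minimal sketch uses one possible promotion rule, an assumption rather than a prescribed policy: no metric may regress beyond a tolerance, and at least one must improve by a minimum gain. The metric values are made up.

```python
def retraining_delta(current, candidate, tolerance=0.0, min_gain=0.01):
    """Compare two model versions scored on the same holdout; return (delta, promote)."""
    delta = {m: round(candidate[m] - current[m], 4) for m in ("precision", "recall")}
    no_regression = all(d >= -tolerance for d in delta.values())
    real_gain = any(d >= min_gain for d in delta.values())
    return delta, no_regression and real_gain


current = {"precision": 0.80, "recall": 0.70}
print(retraining_delta(current, {"precision": 0.83, "recall": 0.70}))  # precision gain, no regression
print(retraining_delta(current, {"precision": 0.85, "recall": 0.65}))  # recall regressed: do not promote
```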

Interpretable models become operationally valuable when data discipline, analyst usability, and governance cadence are treated as equal priorities.

Model monitoring and explainability report (what to operationalize)

If this model is going to be trusted in a real security workflow, you need two things: stable performance over time and consistent explanations that analysts can use.

Monitoring checks (monthly)

| Check | What you look for |
|---|---|
| Data drift | Feature distributions shift (new campaign language patterns) |
| Performance drift | Precision/recall changes on recent labeled samples |
| Label quality | Rising disagreement between analysts and model |
| False-positive clusters | Repeated benign templates triggering alerts |
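For the data-drift check, one widely used per-feature statistic is the Population Stability Index (PSI), which compares binned distributions of a baseline window and a recent window. A minimal standard-library sketch; the bin edges and samples are hypothetical, and the 0.25 alert level is a commonly quoted rule of thumb rather than a standard.

```python
import math
from bisect import bisect_right


def psi(baseline, recent, cut_points, eps=1e-6):
    """Population Stability Index of one feature between a baseline and a recent sample.

    `cut_points` are sorted interior bin edges, so every value lands in one of
    len(cut_points) + 1 bins; `eps` avoids log(0) for empty bins.
    """
    def shares(values):
        counts = [0] * (len(cut_points) + 1)
        for v in values:
            counts[bisect_right(cut_points, v)] += 1
        return [max(c / len(values), eps) for c in counts]

    b, r = shares(baseline), shares(recent)
    return sum((ri - bi) * math.log(ri / bi) for bi, ri in zip(b, r))


baseline_urls = [0, 0, 1, 1, 1, 2, 2, 3]
recent_urls = [3, 4, 4, 5, 5, 6, 6, 7]  # hypothetical campaign with many more links
score = psi(baseline_urls, recent_urls, cut_points=[1, 2, 4])
print(f"PSI={score:.2f}", "investigate" if score > 0.25 else "stable")
```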

Explainability report template (per release)

- What features are most influential overall (top 10).

- What features dominate in false positives (where to tune).

- Example explanations for 3–5 alerts (what an analyst sees).

- Known limitations (languages, short messages, formatting variants).
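The "features that dominate in false positives" item can be computed directly from reviewed alerts by counting which top signals recur in alerts analysts marked benign. A minimal sketch with hypothetical alert records:

```python
from collections import Counter


def fp_signal_report(alerts, k=3):
    """Most frequent top signals among alerts that analysts labeled benign."""
    counter = Counter(
        signal
        for alert in alerts
        if alert["analyst_label"] == "benign"
        for signal in alert["top_signals"]
    )
    return counter.most_common(k)


alerts = [
    {"analyst_label": "benign", "top_signals": ["url_count", "sender_24h_volume"]},
    {"analyst_label": "benign", "top_signals": ["url_count", "urgency_hits"]},
    {"analyst_label": "true phish", "top_signals": ["reply_to_mismatch", "url_count"]},
    {"analyst_label": "benign", "top_signals": ["url_count"]},
]
print(fp_signal_report(alerts, k=1))  # [('url_count', 3)]
```

A signal that tops this list release after release (here, link count on benign newsletters, for instance) is a candidate for feature tuning or a sender-specific baseline.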

Governance basics

- A named owner approves model releases.

- Changes are versioned and reversible.

- The model never replaces human judgment for irreversible actions.

This is how interpretable ML stays professional in security: monitored drift, documented explanation behavior, and controlled releases.