Web attacks are no longer only an application security concern. In day-to-day operations, SOC teams see credential abuse, path probing, suspicious API behavior, and bot-driven noise before anyone opens a ticket in engineering. Detection quality determines whether these signals become useful response actions or endless alert fatigue.

This guide focuses on practical detection engineering in Splunk using safe, defensive methodology. Query examples stay high-level and pseudocode-like so teams can adapt logic without copying brittle patterns.

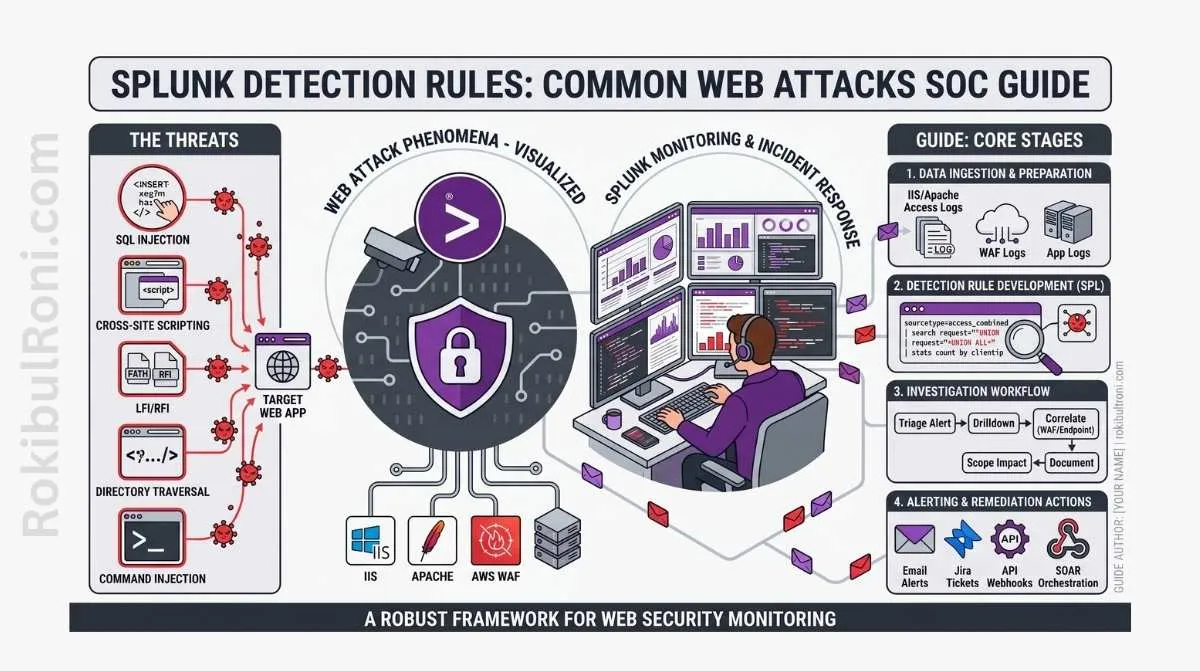

Splunk detection rules for common web attacks

Use this as a field workflow for building reliable detections that analysts can triage fast.

1) Why web attack detection belongs in the SOC

- Public apps and APIs are continuous attack surfaces, not periodic test targets.

- SOC visibility connects endpoint, identity, network, and app context in one timeline.

- Early web signal detection reduces incident scope before deeper compromise occurs.

- Detection metrics provide proof of security improvement over time.

If web telemetry is missing from SOC operations, most attack narratives remain incomplete.

2) Required log sources before writing rules

Detection rules are only as good as the telemetry pipeline behind them.

Core log sources

- Web server logs (nginx, apache, ingress controllers)

- WAF logs (managed or self-hosted)

- Application authentication logs

- Reverse proxy/load balancer logs

- API gateway logs

- Endpoint telemetry for web servers

- Firewall/network security logs

Minimum field checklist

| Log Domain | Minimum Fields Needed | Why It Matters |

|---|---|---|

| HTTP/Web | timestamp, src_ip, method, uri_path, status, user_agent | Core behavior and anomaly correlation |

| Auth | user, auth_result, src_ip, session_id, target_app | Brute force and account abuse analysis |

| WAF | rule_id, action, matched_pattern, host, uri | Defensive control context and trend quality |

| API Gateway | api_route, client_id, latency, response_code, rate_limit_signal | API abuse and performance-linked detection |

| Endpoint | host, process, network_connection, destination | Validation when web-layer events escalate |

If these fields are inconsistent, normalize first. Detection tuning before field normalization usually wastes time.
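As a rough illustration of "normalize first", the sketch below maps vendor-specific field names onto the checklist names and reports which required fields are missing. The alias lists are hypothetical examples, not the field names any particular product emits, and in Splunk this work would normally live in field aliases or data model mappings rather than in Python.

```python
from typing import Any

# Hypothetical aliases: canonical checklist name -> names seen in raw logs.
FIELD_ALIASES = {
    "src_ip": ("src_ip", "clientip", "remote_addr"),
    "method": ("method", "http_method", "request_method"),
    "uri_path": ("uri_path", "path", "request_uri"),
    "status": ("status", "status_code", "response_code"),
    "user_agent": ("user_agent", "http_user_agent", "useragent"),
    "timestamp": ("timestamp", "time", "_time"),
}


def normalize_event(raw: dict[str, Any]) -> tuple[dict[str, Any], list[str]]:
    """Return (normalized event, names of checklist fields that are missing)."""
    normalized: dict[str, Any] = {}
    missing: list[str] = []
    for canonical, aliases in FIELD_ALIASES.items():
        # Take the first alias that is present and non-empty.
        value = next((raw[a] for a in aliases if raw.get(a) not in (None, "")), None)
        if value is None:
            missing.append(canonical)
        else:
            normalized[canonical] = value
    # Status codes often arrive as strings; coerce so numeric comparisons work.
    if "status" in normalized:
        try:
            normalized["status"] = int(normalized["status"])
        except (TypeError, ValueError):
            del normalized["status"]
            missing.append("status")
    return normalized, missing
```

Running this over a sample of each log source and counting the `missing` entries gives a quick field quality report before any detection tuning starts.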

3) Detection use cases worth implementing first

Start with high-signal patterns that analysts can triage quickly.

Priority web attack detections

- SQL injection indicators in request patterns and WAF outcomes

- XSS indicators in request parameters and reflected response trends

- Path traversal-like access attempts to restricted routes

- Authentication brute-force or password spraying behavior

- Suspicious or randomized user-agent activity

- High 404 rate anomalies targeting sensitive paths

- Unusual HTTP methods for known application routes

- API abuse patterns (bursting, endpoint misuse, token anomalies)

Detection engineering table

| Use Case | Log Source | Fields Needed | Triage Question | Tuning Notes |

|---|---|---|---|---|

| SQLi Indicators | WAF + Web logs | uri, query_string, rule_id, status | Is this blocked probing, or is there evidence of backend impact? | Add baseline by app path and expected parameter formats |

| XSS Indicators | Web + WAF + App logs | uri, param_key, status, action | Did payload reach app logic or get blocked at edge? | Suppress known safe test routes and QA traffic |

| Path Traversal Attempts | Web + Reverse proxy logs | uri_path, status, src_ip, host | Are restricted file paths being targeted repeatedly? | Threshold by source + target sensitivity |

| Auth Brute Force | Auth + WAF + Identity logs | user, auth_result, src_ip, session | Is this user-lockout noise or a coordinated credential attack? | Tune by tenant/user behavior baseline and MFA context |

| Suspicious User-Agent | Web logs | user_agent, src_ip, uri_path, request_rate | Is agent behavior consistent with approved scanners/monitors? | Maintain allowlist for legitimate monitoring systems |

| High 404 Recon Signal | Web + CDN logs | status, uri_path, src_ip, host | Is this normal broken-link traffic or endpoint discovery activity? | Exclude known crawler ranges where appropriate |

| Unusual HTTP Methods | Web + API gateway | method, route, status, client_id | Is method valid for this route in production behavior? | Route-method allowlist based on API specs |

| API Abuse Signal | API gateway + Auth logs | client_id, token_id, route, latency, code | Is this legitimate burst traffic or an abuse pattern? | Tune per client tier and documented rate policies |
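To make one row of the table concrete, here is a minimal sketch of the auth brute-force triage question: it separates many failures against few accounts (brute force) from failures spread across many accounts (password spraying). The thresholds and label names are illustrative assumptions, and the events are assumed to be already filtered to a single time window.

```python
from collections import defaultdict


def classify_auth_failures(events, min_failures=10, spray_user_count=5):
    """Label each source IP by its failed-login pattern within one time window.

    min_failures and spray_user_count are placeholder thresholds; derive real
    values from your own baseline rather than copying these.
    """
    failed_users_by_src = defaultdict(list)
    for event in events:
        if event["auth_result"] == "failure":
            failed_users_by_src[event["src_ip"]].append(event["user"])

    labels = {}
    for src_ip, users in failed_users_by_src.items():
        if len(users) < min_failures:
            continue  # below threshold: likely ordinary lockout noise
        distinct_users = len(set(users))
        if distinct_users >= spray_user_count:
            labels[src_ip] = "password_spraying"
        else:
            labels[src_ip] = "brute_force"
    return labels
```

The same shape (group, count, compare distinct targets) carries over to a search that groups failures by source and counts distinct users per window.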

4) MITRE ATT&CK mapping for SOC context

Mapping helps detection coverage reporting and incident communication.

| Detection Theme | Example ATT&CK Tactic | Example ATT&CK Technique (High-Level) |

|---|---|---|

| Credential abuse patterns | Credential Access | Brute Force |

| Web path and endpoint probing | Reconnaissance | Active Scanning (for example, wordlist scanning of paths) |

| Web command-like input abuse signals | Initial Access | Exploit Public-Facing Application |

| Data access anomaly through API | Collection | Data from Information Repositories context |

Use ATT&CK mapping as communication metadata, not as proof that an attack stage is complete.

5) Splunk rule design approach (high-level)

Good rules answer one analyst question clearly.

Rule design checklist

- Define exact behavior hypothesis

- Identify required fields and their quality status

- Set time window aligned to attack pattern speed

- Establish threshold based on baseline, not guesswork

- Define severity and escalation criteria

- Add enrichment fields (asset criticality, owner, environment)

- Attach triage playbook link in alert metadata

Pseudocode-style SPL thinking

- Filter to scoped apps and relevant status/method patterns

- Group by source + target + time bucket

- Compare current volume to baseline percentile

- Attach context (asset_criticality, owner_team, environment)

- Trigger only when threshold + context conditions are met

This keeps alert logic explainable for both SOC analysts and engineering teams.
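The steps above can be sketched in plain Python to show the logic independent of any query syntax. This is an illustration under stated assumptions, not a Splunk search: the five-minute bucket, the nearest-rank percentile, the "prod only" trigger condition, and the shape of `asset_context` are all hypothetical choices.

```python
from collections import Counter
from datetime import datetime, timezone

BUCKET_SECONDS = 300  # assumed 5-minute window; align to expected attack speed


def bucket_counts(events):
    """Count events per (src_ip, uri_path, time bucket)."""
    counts = Counter()
    for event in events:
        bucket = int(event["timestamp"].timestamp()) // BUCKET_SECONDS
        counts[(event["src_ip"], event["uri_path"], bucket)] += 1
    return counts


def nearest_rank_percentile(values, pct):
    """Nearest-rank percentile for integer pct in 1..100 over a non-empty list."""
    ordered = sorted(values)
    rank = max(1, (pct * len(ordered) + 99) // 100)  # integer ceiling
    return ordered[rank - 1]


def evaluate(events, baseline_counts, asset_context, pct=95):
    """Return alerts for buckets above the baseline percentile on prod assets."""
    # Step 1: filter to scoped paths (those we hold asset context for).
    scoped = [e for e in events if e["uri_path"] in asset_context]
    threshold = nearest_rank_percentile(baseline_counts, pct)
    alerts = []
    # Step 2: group by source + target + time bucket.
    for (src_ip, uri_path, bucket), count in bucket_counts(scoped).items():
        context = asset_context[uri_path]
        # Steps 3 to 5: compare to baseline, attach context, apply both conditions.
        if count > threshold and context.get("environment") == "prod":
            alerts.append({
                "src_ip": src_ip,
                "uri_path": uri_path,
                "bucket_start": datetime.fromtimestamp(
                    bucket * BUCKET_SECONDS, tz=timezone.utc
                ),
                "count": count,
                "threshold": threshold,
                **context,
            })
    return alerts
```

Because the alert carries both `count` and `threshold`, an analyst can see why it fired without re-running the query.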

6) Reducing false positives without losing visibility

Over-tuned suppression hides real attacks; under-tuned rules burn analyst time.

Practical tuning controls

- Dynamic thresholds by application behavior profile

- Allowlists for approved scanners and monitoring tools

- Route sensitivity weighting (admin/auth endpoints higher priority)

- Asset criticality overlays for severity adjustments

- Feedback loop with developers on expected route behavior
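A minimal sketch of how allowlists, route sensitivity weighting, and asset criticality overlays might combine into a severity decision. The network range, route prefixes, weights, and score cut-offs are hypothetical placeholders chosen for illustration.

```python
import ipaddress

# Hypothetical values: replace with your approved scanner ranges and routes.
APPROVED_SCANNER_NETS = [ipaddress.ip_network("192.0.2.0/28")]
ROUTE_WEIGHTS = {"/admin": 3, "/login": 3, "/api": 2}  # path prefix -> weight
CRITICALITY_WEIGHTS = {"low": 1, "medium": 2, "high": 3}


def is_allowlisted(src_ip: str) -> bool:
    """True when the source falls inside an approved scanner range."""
    addr = ipaddress.ip_address(src_ip)
    return any(addr in net for net in APPROVED_SCANNER_NETS)


def route_weight(uri_path: str) -> int:
    """Highest weight among matching prefixes; unknown routes weigh 1."""
    return max(
        (w for prefix, w in ROUTE_WEIGHTS.items() if uri_path.startswith(prefix)),
        default=1,
    )


def severity(src_ip: str, uri_path: str, asset_criticality: str) -> str:
    """Combine allowlist, route sensitivity, and asset criticality."""
    if is_allowlisted(src_ip):
        return "suppressed"
    score = route_weight(uri_path) * CRITICALITY_WEIGHTS.get(asset_criticality, 1)
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"
```

Returning `"suppressed"` instead of silently dropping the event keeps allowlisted traffic countable, which helps avoid the over-tuned suppression problem described above.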

Tuning review cadence

| Tuning Step | Frequency | Owner |

|---|---|---|

| Alert quality review | Weekly | SOC detection engineer |

| False-positive root cause analysis | Weekly | SOC + AppSec |

| Allowlist and baseline refresh | Every two weeks | SOC platform owner |

| Rule logic and threshold review | Monthly | Detection engineering lead |

| Coverage gap assessment | Quarterly | SOC manager + security architecture |

7) Dashboard ideas that support real triage

Dashboards should drive action, not vanity metrics.

Useful SOC dashboard widgets

- Top attacked endpoints by count and trend

- HTTP status code spikes by application/environment

- Authentication failure heatmap by user/source

- WAF block vs allow trend by rule category

- Suspicious parameter pattern frequency

- Source geography and ASN clustering for attack campaigns

- API route abuse rate by client identity

Pair each dashboard widget with an associated triage question so analysts know what to do next.

8) Incident response handoff model

A good alert is complete only when IR can act on it quickly.

Handoff packet contents

- Incident summary in one sentence

- Detection rule name and trigger rationale

- Timeline with key timestamps

- Affected endpoint(s), host(s), and environment

- Source context (src_ip, user/session/client identity)

- Related WAF/auth/endpoint correlations

- Suggested containment options (high-level)

- Open questions requiring engineering input
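One way to keep handoff packets consistent is to give them a fixed structure and check it before escalation. The sketch below is an assumption about how that could look; the class name, field names, and the specific checks are illustrative, not a standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class HandoffPacket:
    summary: str
    rule_name: str
    trigger_rationale: str
    timeline: list[tuple[datetime, str]]  # (timestamp, what happened)
    affected: dict[str, str]              # endpoint, host, environment
    source_context: dict[str, str]        # src_ip, user/session/client identity
    correlations: list[str] = field(default_factory=list)
    containment_options: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)

    def gaps(self) -> list[str]:
        """List the reasons this packet is not ready for IR (empty when ready)."""
        problems = []
        if not self.summary.strip():
            problems.append("summary is empty")
        if not self.timeline:
            problems.append("timeline is empty")
        elif any(ts.utcoffset() != timedelta(0) for ts, _ in self.timeline):
            # Naive datetimes have utcoffset() None, so they are flagged too.
            problems.append("timeline timestamps must be timezone-aware UTC")
        elif [ts for ts, _ in self.timeline] != sorted(ts for ts, _ in self.timeline):
            problems.append("timeline is not in chronological order")
        for key in ("endpoint", "host", "environment"):
            if not self.affected.get(key):
                problems.append(f"affected scope missing {key}")
        if not self.containment_options:
            problems.append("no containment options suggested")
        return problems
```

A packet whose `gaps()` list is non-empty goes back to the analyst rather than forward to IR.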

Handoff quality table

| Handoff Item | Good Example | Weak Example |

|---|---|---|

| Timeline | Ordered events with exact UTC timestamps | “Several events happened today” |

| Affected Scope | Specific endpoint + host + environment | “Web app might be affected” |

| Evidence | Linked log excerpts with identifiers | Screenshot without query context |

| Containment Advice | Disable token / block source / protect route (as appropriate) | “Please investigate” only |

9) Common detection engineering mistakes

- Ingesting logs without parsing and field normalization

- Alerting on every noisy pattern with static thresholds

- Ignoring API gateway telemetry while monitoring only web servers

- Building detections without ownership metadata

- Treating WAF block counts as complete attack visibility

- Skipping post-incident tuning after false positives or misses

- Not documenting why a rule exists and what question it answers

10) Practical 30-day web detection improvement plan

| Week | Focus | Output |

|---|---|---|

| Week 1 | Validate telemetry and field normalization | Field quality report + missing source list |

| Week 2 | Deploy baseline high-signal use cases | Initial rule set for auth abuse, 404 anomalies, method misuse |

| Week 3 | Triage-driven tuning and enrichment | Reduced false positives + owner/criticality context |

| Week 4 | IR handoff hardening and dashboard rollout | Standard handoff template + SOC web detection dashboard |

Metrics to track through the 30 days

- Alert volume vs actionable alert ratio

- False-positive rate per rule

- Mean time to triage web alerts

- Incident conversion rate from detections

- Coverage of critical apps and API routes
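The metrics in this list reduce to simple ratios once each triaged alert has a disposition recorded. Here is a small sketch, assuming a hypothetical alert record with `rule`, `disposition`, `actionable`, `became_incident`, and `triage_minutes` fields; your case management export will likely use different names.

```python
from collections import defaultdict
from statistics import mean


def detection_metrics(alerts):
    """Summarize triaged alerts; returns None when there is nothing to measure."""
    if not alerts:
        return None
    per_rule_total = defaultdict(int)
    per_rule_fp = defaultdict(int)
    for alert in alerts:
        per_rule_total[alert["rule"]] += 1
        if alert["disposition"] == "false_positive":
            per_rule_fp[alert["rule"]] += 1
    total = len(alerts)
    return {
        "alert_volume": total,
        "actionable_ratio": sum(a["actionable"] for a in alerts) / total,
        "false_positive_rate_by_rule": {
            rule: per_rule_fp[rule] / count for rule, count in per_rule_total.items()
        },
        "mean_minutes_to_triage": mean(a["triage_minutes"] for a in alerts),
        "incident_conversion_rate": sum(a["became_incident"] for a in alerts) / total,
    }


def coverage(critical_routes, covered_routes):
    """Fraction of critical routes that have at least one detection."""
    critical = set(critical_routes)
    if not critical:
        return 0.0
    return len(critical & set(covered_routes)) / len(critical)
```

Computing these weekly from the same export makes the four-week trend comparable from one review to the next.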

A SOC that treats web detection as an engineering discipline, not a one-time query sprint, gets faster triage, better containment, and stronger collaboration with application teams.

Detection operations worksheet for SOC teams

| Workstream | Owner | First Action | Validation Signal |

|---|---|---|---|

| Data quality | SIEM engineer | Validate required fields by log source | Reduced null/parse failure rate |

| Use-case ownership | Detection lead | Assign owner to each detection use case | Clear escalation point for tuning updates |

| Triage readiness | SOC lead | Add triage questions to alert metadata | Faster, more consistent analyst decisions |

| Tuning governance | Detection engineer | Schedule weekly false-positive review | Alert quality improves without blind spots |

SOC execution checklist

- Ensure every rule answers one clear investigative question

- Avoid deploying high-noise rules without baseline references

- Track suppression changes with owner and expiration

- Validate detection behavior after major app changes
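Tracking suppressions with an owner and an expiration can be as simple as a record with a date check. The sketch below is one possible shape; the `Suppression` fields and helper names are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Suppression:
    rule_name: str
    match_value: str  # e.g. a source IP or route this suppression covers
    owner: str
    reason: str
    expires: date     # last day the suppression is valid


def is_suppressed(rule_name, value, suppressions, today):
    """True only if a matching, unexpired suppression exists."""
    return any(
        s.rule_name == rule_name and s.match_value == value and s.expires >= today
        for s in suppressions
    )


def expired(suppressions, today):
    """Suppressions past their expiry date, for the weekly review."""
    return [s for s in suppressions if s.expires < today]
```

Because expired entries stop matching automatically, a forgotten suppression fails open (alerts resume) instead of hiding activity indefinitely.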

Handoff package standard for incident teams

| Artifact | Minimum Content | Consumer |

|---|---|---|

| Alert context pack | Rule name, trigger logic summary, key fields | Tier-1/Tier-2 analysts |

| Correlation snapshot | Related auth/WAF/endpoint events | Incident responders |

| Scope summary | Affected app/route/session context | App owners + response team |

| Containment options | High-level recommended response actions | Incident commander |

Quality gates

- Can an analyst decide escalation from alert content alone?

- Are correlated signals sufficient to reduce false escalation?

- Is affected scope specific enough for engineering response?

90-day detection engineering cadence

Days 1–30

- Normalize critical web/app/API log fields

- Launch baseline high-signal web detections

- Create triage runbook snippets per rule category

Days 31–60

- Tune thresholds with asset context and app-owner feedback

- Add dashboard KPIs for alert quality and triage speed

- Reduce recurring false positives by pattern class

Days 61–90

- Expand coverage to additional business-critical endpoints

- Audit rule ownership and stale detection logic

- Publish quarterly detection maturity report

| KPI | Why It Matters |

|---|---|

| Actionable alert ratio | Core measure of detection usefulness |

| Mean time to triage | Reflects SOC operational efficiency |

| False-positive rate by rule | Shows tuning quality and rule health |

| Incident conversion from detections | Measures practical security value |

Detection programs scale best when telemetry quality, rule ownership, and triage execution are managed as one operational system.

Detection engineering lifecycle (Splunk) without the chaos

Rules stay effective when they have an owner, a test method, and a controlled release process.

Rule “definition of done”

| Item | Minimum standard |

|---|---|

| Purpose | Clear threat/problem statement |

| Data sources | Required indexes/sourcetypes listed |

| Triage steps | 3–5 deterministic checks an analyst can follow |

| False-positive controls | Filters/suppressions documented with rationale |

| Owner | Named team/person responsible for tuning |

| Test data | Sample events or replay method documented |
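The table above can be enforced mechanically before a rule is promoted. A rough sketch follows, assuming rule metadata is kept as a dictionary with hypothetical keys that mirror the table rows.

```python
def definition_of_done_gaps(rule):
    """Return the unmet definition-of-done items for one rule's metadata."""
    gaps = []
    for name in ("purpose", "owner", "test_data"):
        if not str(rule.get(name) or "").strip():
            gaps.append(f"{name} is missing")
    if not rule.get("data_sources"):
        gaps.append("data_sources must list required indexes/sourcetypes")
    steps = rule.get("triage_steps") or []
    if not 3 <= len(steps) <= 5:
        gaps.append("triage_steps must contain 3 to 5 checks")
    for control in rule.get("false_positive_controls") or []:
        if not str(control.get("rationale") or "").strip():
            gaps.append(f"suppression '{control.get('name', '?')}' lacks a rationale")
    return gaps
```

An empty list means the rule meets the minimum standard; anything else is a concrete to-do for the rule owner.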

Testing approach (practical)

- Unit test: query returns expected fields and does not error.

- Signal test: rule fires on known-bad simulated events or replayed incidents.

- Noise test: run against a typical week and record baseline alert volume.
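To show how the three test types differ, here is a sketch built around a toy "unusual HTTP method" rule written in Python. The route-method allowlist and event fields are hypothetical; in practice the rule would be a saved search and the tests would run it against replayed or sample data.

```python
# Hypothetical route -> allowed methods, ideally generated from API specs.
ROUTE_METHODS = {"/api/orders": {"GET", "POST"}, "/health": {"GET"}}
EXPECTED_FIELDS = {"route", "method", "src_ip"}


def unusual_method_rule(events):
    """Flag requests whose method is not allowed for a known route."""
    hits = []
    for event in events:
        allowed = ROUTE_METHODS.get(event["route"])
        if allowed is not None and event["method"] not in allowed:
            hits.append({k: event[k] for k in EXPECTED_FIELDS})
    return hits


KNOWN_BAD = [{"route": "/api/orders", "method": "DELETE", "src_ip": "203.0.113.9"}]


def unit_test():
    """Rule runs without error and returns the expected fields."""
    hits = unusual_method_rule(KNOWN_BAD)
    return all(set(hit) == EXPECTED_FIELDS for hit in hits)


def signal_test():
    """Rule fires on known-bad simulated events."""
    return len(unusual_method_rule(KNOWN_BAD)) == len(KNOWN_BAD)


def noise_test(typical_events):
    """Baseline alert volume over a representative sample (e.g. a typical week)."""
    return len(unusual_method_rule(typical_events))
```

The noise test returns a number rather than pass/fail on purpose: the value is recorded as the baseline that later tuning is compared against.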

Release controls

- Promote rules through dev → stage → prod with a consistent checklist.

- Time-box high-risk changes and have rollback ready.

- Keep a changelog: what changed, why, and what metric improved.

Metrics that actually help

| Metric | Use |

|---|---|

| Alerts/day per rule | Identifies noisy or failing logic |

| True-positive rate | Validates detection value |

| Median triage time | Shows operational workload |

| Suppression count | Flags drift and environment changes |

This keeps Splunk detections professional-grade: tested, owned, and measurable over time.