Small teams do not fail incident response because they lack enterprise tooling. They fail because responsibilities are unclear, communication is delayed, and evidence is lost during the first high-pressure hour.

A written playbook solves that. It turns panic into sequence: who leads, what gets contained, what gets preserved, who gets informed, and how recovery is verified.

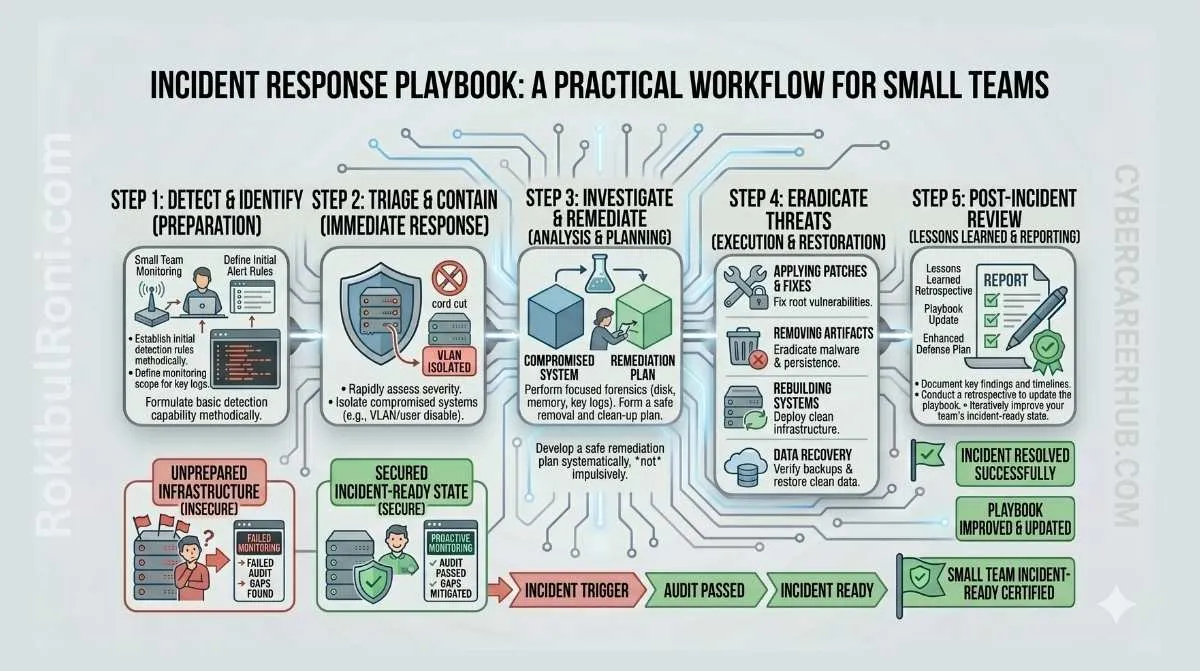

Incident response playbook for small teams

Use this as a minimum viable response system for lean IT and security teams.

1) Why small teams need a written IR playbook

- Incidents escalate faster than ad-hoc decision-making can respond

- Staff overlap creates role confusion without pre-assigned ownership

- Evidence quality drops when actions are improvised

- Communication mistakes create legal, trust, and operational damage

- Recovery is slower when priorities are not predefined

A short, usable playbook is better than a perfect document no one uses.

2) Minimum viable incident response plan

If capacity is limited, start with the essentials that directly affect containment and recovery.

Core plan components

- Incident definition and trigger criteria

- Severity classification and escalation thresholds

- Role assignments with backup owners

- Contact matrix (internal and external)

- Evidence handling and storage process

- Containment decision flow

- Recovery validation checklist

- Post-incident review requirements

Minimum viable IR artifacts

| Artifact | Purpose | Owner |

|---|---|---|

| One-page response flow | Rapid action sequence under pressure | Incident lead |

| Severity matrix | Consistent triage and escalation decisions | Security/IT owner |

| Contact matrix | Fast communication routing | Operations manager |

| Evidence log template | Chain-of-custody and traceability | Technical responder |

| Executive update template | Consistent leadership communication | Incident lead |

3) Roles and responsibilities for lean teams

Small organizations may not have dedicated people for each role. Assign roles anyway, then map one person to multiple roles if needed.

| Role | Primary Responsibility | Backup Responsibility |

|---|---|---|

| Incident Lead | Owns timeline, decisions, and coordination | Acts as executive update owner |

| IT Owner | Infrastructure containment and recovery execution | Supports evidence collection logistics |

| Executive Contact | Business decision authority and prioritization | Approves operational risk tradeoffs |

| Legal/Privacy Contact | Regulatory/privacy obligations and disclosure guidance | Reviews external communications |

| Communications Owner | Internal and external messaging consistency | Customer/stakeholder update management |

| Vendor Contact | Third-party support escalation (cloud, ISP, MSSP) | Incident technical context relay |

RACI-style action map

| Action | Incident Lead | IT Owner | Executive | Legal/Privacy | Communications | Vendor Contact |

|---|---|---|---|---|---|---|

| Severity declaration | A | C | I | I | I | I |

| Host isolation decision | A | R | C | I | I | C |

| Evidence preservation | C | R | I | C | I | I |

| Breach disclosure decision | C | I | A | R | R | I |

| Recovery go-live approval | C | R | A | C | I | C |

R = Responsible, A = Accountable, C = Consulted, I = Informed
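
A RACI map is easy to leave inconsistent as rows are added. The following is a minimal sketch, assuming the map is kept as a plain Python dictionary; the names `ROLES`, `RACI`, and `check_raci` are illustrative and not part of the playbook. It checks that every action has exactly one Accountable role and at least one Responsible role.

```python
# Illustrative encoding of the RACI-style action map above.
# Column order follows the table header.
ROLES = ["Incident Lead", "IT Owner", "Executive",
         "Legal/Privacy", "Communications", "Vendor Contact"]

RACI = {
    "Severity declaration":       "A C I I I I",
    "Host isolation decision":    "A R C I I C",
    "Evidence preservation":      "C R I C I I",
    "Breach disclosure decision": "C I A R R I",
    "Recovery go-live approval":  "C R A C I C",
}

def check_raci(raci, roles):
    """Return a list of human-readable problems found in the map."""
    problems = []
    for action, row in raci.items():
        codes = row.split()
        if len(codes) != len(roles):
            problems.append(f"{action}: expected {len(roles)} codes, got {len(codes)}")
            continue
        accountable = codes.count("A")
        if accountable != 1:
            problems.append(f"{action}: {accountable} accountable roles (expected 1)")
        if "R" not in codes:
            problems.append(f"{action}: no responsible role assigned")
    return problems

for problem in check_raci(RACI, ROLES):
    print(problem)
```

Run against the map as printed above, this sketch flags "Severity declaration" (no R) and "Evidence preservation" (no A). Whether the Accountable role is also treated as Responsible by default is a team decision; the check only makes the gap visible.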

4) Preparation checklist before any incident happens

Preparation is where small teams win. Most response failures are preparation failures.

Readiness checklist

- Current asset inventory (servers, endpoints, SaaS, cloud workloads)

- Backup coverage with restore test records

- MFA enabled for privileged and remote access accounts

- Logging enabled on critical systems and identity providers

- Endpoint protection installed and reporting to central console

- Password reset and forced credential rotation process documented

- Vendor escalation contacts validated quarterly

- Cyber insurance contacts and policy constraints documented (if applicable)

- Secure evidence storage location defined and access-controlled

Preparation table

| Control Area | Minimum Standard | Validation Method |

|---|---|---|

| Asset Inventory | Critical assets and owners documented | Monthly ownership review |

| Backups | Daily backups + periodic restore test | Restore drill evidence |

| Identity Security | MFA on admin and high-risk users | IAM policy audit |

| Logging | Auth, endpoint, firewall, cloud audit logs retained | SIEM/log platform check |

| Endpoint Protection | Coverage on all production endpoints | Agent inventory report |

| Evidence Storage | Encrypted, access-controlled repository | Access review + test upload |

5) Incident phases with practical actions

Use this sequence for operational consistency.

Phase 1: Prepare

- Confirm playbook owners and on-call structure

- Validate logging health and alerting pathways

- Run tabletop exercises for top scenarios

Phase 2: Identify

- Gather alert context and affected scope

- Assign severity using predefined matrix

- Open formal incident record and timeline

Phase 3: Contain

- Isolate affected hosts/accounts/services as needed

- Prevent lateral spread and preserve critical business services

- Document every containment action with timestamp

Phase 4: Preserve evidence

- Capture volatile and persistent evidence where possible

- Protect originals; work from copies for analysis

- Record chain-of-custody for each artifact
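
One common way to support "protect originals; work from copies" is to hash each artifact at collection time and re-check the hash on every working copy. The sketch below assumes SHA-256 and a simple dictionary as the custody record; the field names and the helpers `custody_entry` and `verify_copy` are hypothetical, not a prescribed format.

```python
import hashlib
import tempfile
from datetime import datetime, timezone
from pathlib import Path

def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large images do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def custody_entry(path: Path, collected_by: str, note: str) -> dict:
    """Build one chain-of-custody record (field names are assumptions)."""
    return {
        "artifact": path.name,
        "sha256": sha256_of(path),
        "collected_by": collected_by,
        "collected_at_utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
        "note": note,
    }

def verify_copy(original_entry: dict, working_copy: Path) -> bool:
    """True if the working copy still matches the hash recorded at collection."""
    return sha256_of(working_copy) == original_entry["sha256"]

# Demo with a throwaway file standing in for a collected log.
with tempfile.TemporaryDirectory() as tmp:
    original = Path(tmp) / "auth.log"
    original.write_bytes(b"example log line\n")
    entry = custody_entry(original, "technical.responder", "copied from host-01")
    copy = Path(tmp) / "auth-copy.log"
    copy.write_bytes(original.read_bytes())
    print(entry["artifact"], entry["sha256"], verify_copy(entry, copy))
```

Storing the hash in the evidence log lets a later reviewer confirm that analysis was done on an unmodified copy.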

Phase 5: Eradicate

- Remove malicious artifacts or unauthorized persistence

- Patch exploited weaknesses and rotate impacted credentials

- Validate cleanup through technical checks

Phase 6: Recover

- Restore systems in prioritized order

- Monitor closely for recurrence indicators

- Validate service integrity before full business reopen


Phase 7: Communicate

- Provide structured updates by stakeholder type

- Keep statements factual and timestamped

- Coordinate legal/privacy review for external messaging

Phase 8: Learn

- Run post-incident review within defined window

- Document root causes and control gaps

- Assign tracked remediation actions with owners and due dates

6) Ransomware readiness for small teams (high-level guidance)

Ransomware response depends on preparation and containment speed.

Practical readiness controls

- Offline/immutable backup strategy for critical systems

- Segmentation between user endpoints and critical servers

- Fast privileged account disablement process

- Known-good recovery images and rebuild playbooks

- Contact plan for legal, insurer, and incident support partners

Ransomware triage priorities

- Stop spread (isolate affected assets)

- Preserve evidence before widespread rebuilding

- Confirm scope (what is encrypted, what is exposed, what is still clean)

- Protect backups and recovery infrastructure

- Align legal/communications response quickly

7) Contact matrix template (required)

| Contact Type | Name/Role | Primary Channel | Backup Channel | Availability | Escalation Trigger |

|---|---|---|---|---|---|

| Incident Lead | | | | 24/7 or business hours | Severity High/Critical |

| IT Operations | | | | | Host/service containment required |

| Executive Sponsor | | | | | Business-impacting outage/data risk |

| Legal/Privacy | | | | | Possible regulated data impact |

| Communications | | | | | Internal or customer statement needed |

| Cloud Provider/MSSP | | | | | Platform-level escalation required |

| Backup/DR Vendor | | | | | Restore workflow initiated |

Keep this matrix current and test contact reachability quarterly.
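
The quarterly reachability test is easier to enforce if each contact carries a "last verified" date. Here is a minimal sketch, assuming a hypothetical `last_verified` field added to the matrix and a 90-day threshold standing in for "quarterly"; the dates are made-up example data.

```python
from dataclasses import dataclass
from datetime import date, timedelta

@dataclass
class Contact:
    contact_type: str
    escalation_trigger: str
    last_verified: date  # hypothetical field: when reachability was last tested

# Made-up example entries based on rows of the matrix above.
CONTACTS = [
    Contact("Incident Lead", "Severity High/Critical", date(2024, 1, 15)),
    Contact("Legal/Privacy", "Possible regulated data impact", date(2023, 9, 1)),
    Contact("Backup/DR Vendor", "Restore workflow initiated", date(2024, 2, 20)),
]

def stale_contacts(contacts, today, max_age_days=90):
    """Return contact types whose last reachability test is older than the limit."""
    cutoff = today - timedelta(days=max_age_days)
    return [c.contact_type for c in contacts if c.last_verified < cutoff]

print(stale_contacts(CONTACTS, today=date(2024, 3, 1)))
```

With the example dates, only the Legal/Privacy contact is reported as overdue for a reachability check.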

8) Severity table for small teams (required)

Use simple levels with clear action triggers.

| Severity | Typical Criteria | Required Response Time | Escalation |

|---|---|---|---|

| Low | Isolated suspicious activity with no confirmed impact | Same business day | IT owner informed |

| Medium | Confirmed compromise on limited asset scope | Within 4 hours | Incident lead + IT owner active |

| High | Multi-system impact, credential abuse, or business disruption | Within 1 hour | Executive + legal/privacy notified |

| Critical | Widespread outage, likely data breach, or core business interruption | Immediate | Full incident team activation |

Severity decision guardrails

- Default upward when uncertainty affects high-value systems

- Reclassify as new evidence arrives

- Document reasons for every severity change
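
The severity table and the "default upward" guardrail can be sketched as a small decision function. This is one possible encoding, not a definitive rule set: the boolean inputs (such as `credential_abuse` or `uncertain_high_value`) are hypothetical names chosen to mirror the criteria in the table.

```python
from enum import IntEnum

class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4

# Response time and escalation copied from the severity table above.
RESPONSE = {
    Severity.LOW: ("Same business day", "IT owner informed"),
    Severity.MEDIUM: ("Within 4 hours", "Incident lead + IT owner active"),
    Severity.HIGH: ("Within 1 hour", "Executive + legal/privacy notified"),
    Severity.CRITICAL: ("Immediate", "Full incident team activation"),
}

def classify(*, confirmed_compromise=False, multi_system=False,
             credential_abuse=False, business_disruption=False,
             widespread_outage=False, likely_data_breach=False,
             uncertain_high_value=False):
    """Map observed signals to a severity level (inputs are assumptions)."""
    if widespread_outage or likely_data_breach:
        level = Severity.CRITICAL
    elif multi_system or credential_abuse or business_disruption:
        level = Severity.HIGH
    elif confirmed_compromise:
        level = Severity.MEDIUM
    else:
        level = Severity.LOW
    # Guardrail: default upward when uncertainty affects high-value systems.
    if uncertain_high_value and level < Severity.CRITICAL:
        level = Severity(level + 1)
    return level

level = classify(confirmed_compromise=True, uncertain_high_value=True)
print(level.name, RESPONSE[level])
```

Because the function is pure, re-running it as new evidence arrives gives a reclassification that can be logged alongside the reason for the change.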

9) Tooling stack for practical small-team IR

Tools should support workflow, not replace it.

| Tool / Platform | Practical IR Use |

|---|---|

| Wireshark | Packet-level triage and suspicious flow validation |

| Autopsy | Disk and artifact analysis for forensic review |

| Wazuh | Endpoint and security event monitoring |

| Splunk / ELK (concept) | Centralized log correlation and timeline construction |

| Backup systems | Verified recovery and rollback operations |

| Ticketing platform | Action tracking, ownership, and audit trail |

| Secure documentation workspace | Timeline, decisions, and evidence index management |

Tooling principles

- Standardize formats for evidence and timeline entries

- Prefer integrations that reduce manual copy/paste errors

- Keep one source of truth for incident status

10) Common mistakes that hurt small-team response

- Backups exist but restores were never tested

- No communication plan for executives, staff, or customers

- Panic-driven changes made without timeline logging

- Evidence overwritten during rushed cleanup

- Decision authority unclear during high-severity events

- Incident closed without corrective-action ownership

Practical prevention controls

- Run restore drills quarterly and record outcomes

- Pre-approve messaging templates by stakeholder type

- Enforce incident timeline note-taking from first alert

- Gate cleanup actions behind evidence-preservation check

- Document accountable owner for each post-incident action

11) 30-day IR readiness plan for lean teams

Week 1: Build the minimum operating kit

- Assign roles and backups

- Publish severity matrix and one-page response flow

- Build first version of contact matrix

Output: version 1 IR playbook package

Week 2: Validate telemetry and evidence process

- Confirm logging coverage for critical assets

- Test evidence storage and chain-of-custody template

- Validate endpoint and network visibility paths

Output: telemetry + evidence readiness report

Week 3: Run scenario tabletop and fix gaps

- Simulate one ransomware-like and one credential-abuse scenario

- Measure time to role activation and escalation

- Update playbook with discovered friction points

Output: tabletop findings and playbook revision log

Week 4: Execute technical drills and leadership reporting

- Run backup restore drill for critical workload

- Test emergency contact reachability

- Deliver readiness summary and next-quarter roadmap

Output: signed 30-day readiness status report

12) Practical templates to keep with the playbook

Incident timeline template

| Time (UTC) | Event | Actor | System/Asset | Action Taken | Evidence Ref | Decision Owner |

|---|---|---|---|---|---|---|
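
For teams that keep the timeline in a file rather than a document, the template columns map directly onto CSV fields. The sketch below is illustrative: `timeline_row` is a hypothetical helper, and the example entry is made up. It normalizes any timezone-aware timestamp to UTC so that entries from different responders sort consistently.

```python
import csv
import io
from datetime import datetime, timedelta, timezone

# Column names taken from the timeline template above.
FIELDS = ["Time (UTC)", "Event", "Actor", "System/Asset",
          "Action Taken", "Evidence Ref", "Decision Owner"]

def timeline_row(event, actor, asset, action, evidence_ref, decision_owner, when=None):
    """Build one timeline row; `when` must be timezone-aware and is stored as UTC."""
    when = when or datetime.now(timezone.utc)
    if when.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    stamp = when.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    values = [stamp, event, actor, asset, action, evidence_ref, decision_owner]
    return dict(zip(FIELDS, values))

# Made-up example entry; a local time at UTC+2 is normalized to UTC.
local_time = datetime(2024, 3, 1, 14, 5, tzinfo=timezone(timedelta(hours=2)))
row = timeline_row("EDR alert on file server", "IT owner", "fs-01",
                   "Host isolated from network", "EV-001", "Incident lead",
                   when=local_time)

buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
writer.writerow(row)
print(buffer.getvalue())
```

Rejecting naive timestamps is a deliberate choice here: a time without a zone cannot be reliably placed on a shared UTC timeline.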

Executive update template

| Section | Content Prompt |

|---|---|

| Current status | What is confirmed right now? |

| Business impact | Which services/users are affected? |

| Actions underway | What containment/recovery steps are active? |

| Next milestone | What decision or validation is next? |

| Support needed | What approvals/resources are required? |

Post-incident action tracker

| Action | Owner | Priority | Due Date | Status | Validation Method |

|---|---|---|---|---|---|
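
A tracker like this is most useful when it can answer two questions quickly: how much is closed, and what is overdue. Here is a minimal sketch under simple assumptions: actions are dictionaries, status is either "done" or "open", and all entries are made-up examples.

```python
from datetime import date

# Made-up example actions; keys loosely follow the tracker columns above.
ACTIONS = [
    {"action": "Enforce MFA on admin accounts", "owner": "IT owner",
     "due": date(2024, 3, 10), "status": "done"},
    {"action": "Test restore of file server", "owner": "IT owner",
     "due": date(2024, 3, 15), "status": "open"},
    {"action": "Update contact matrix", "owner": "Incident lead",
     "due": date(2024, 4, 1), "status": "open"},
    {"action": "Pre-approve customer notice", "owner": "Comms owner",
     "due": date(2024, 3, 5), "status": "done"},
]

def closure_rate(actions):
    """Fraction of actions marked done (0.0 for an empty tracker)."""
    if not actions:
        return 0.0
    done = sum(1 for a in actions if a["status"] == "done")
    return done / len(actions)

def overdue(actions, today):
    """Names of actions that are not done and past their due date."""
    return [a["action"] for a in actions
            if a["status"] != "done" and a["due"] < today]

today = date(2024, 3, 20)
print(f"closure rate: {closure_rate(ACTIONS):.0%}")
print("overdue:", overdue(ACTIONS, today))
```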

Small teams do not need an enterprise-sized program to respond well. They need clarity, repetition, and ownership: a practical playbook, trained roles, tested recovery paths, and disciplined follow-through after every incident.

IR operations worksheet for small teams

| Workstream | Owner | First Action | Validation Signal |

|---|---|---|---|

| Role clarity | Incident lead | Confirm primary + backup assignments | Faster activation during incidents |

| Evidence discipline | Technical responder | Standardize evidence log and custody form | Better post-incident audit confidence |

| Communication flow | Comms owner | Pre-approve stakeholder update templates | Reduced confusion during escalation |

| Recovery readiness | IT owner | Test restore path for critical systems | Measurable recovery-time improvement |

Weekly execution checklist

- Verify contact matrix accuracy and reachability

- Check backup health and restore test scheduling

- Review open incident action items and ownership

- Update severity model based on recent cases

Case handoff and closure package

| Artifact | Minimum Content | Consumer |

|---|---|---|

| Incident timeline | UTC events, actors, decisions, actions | Leadership + audit teams |

| Technical evidence pack | References to logs, captures, and system artifacts | Security + responders |

| Communication log | Internal/external messages and approvals | Legal/privacy/comms |

| Corrective action tracker | Owner, due date, validation method | Operations + management |

Closure quality checks

- Were all critical decisions timestamped and owned?

- Did containment actions preserve enough evidence for review?

- Are corrective actions assigned and scheduled for validation?

90-day small-team IR hardening cadence

Days 1–30

- Finalize one-page activation flow and role matrix

- Run one tabletop on credential abuse scenario

- Validate evidence handling and storage workflow

Days 31–60

- Run restore and recovery drills for key business systems

- Improve communication templates with legal/comms input

- Track mean time to respond and mean time to contain

Days 61–90

- Execute second tabletop on ransomware-style disruption scenario

- Audit corrective action completion from prior incidents/drills

- Publish quarterly IR readiness report with next-step priorities

| KPI | Why It Matters |

|---|---|

| Time to team activation | Shows readiness under pressure |

| Time to containment | Indicates operational response efficiency |

| Recovery validation success rate | Measures business continuity reliability |

| Corrective action closure rate | Confirms sustained program improvement |

Small-team incident response becomes resilient when preparation, evidence handling, communication, and recovery testing are maintained as a continuous operating rhythm.

Readiness drill package (small-team friendly)

The biggest improvement lever for a small team is repetition with lightweight documentation.

Monthly tabletop (60 minutes)

- Pick one scenario (phishing → mailbox takeover, ransomware alert, suspicious outbound traffic).

- Walk through: detection → triage → containment → communication → recovery.

- Capture gaps as action items with owners and deadlines.

Incident communication templates (keep them short)

| Template | Used for | Must include |

|---|---|---|

| Initial notice | “We’re investigating” update | Impact, owners, next update time |

| Containment notice | “We blocked/isolated” update | What changed, risk, rollback notes |

| Closure summary | “Resolved” update | Root cause, fixes, follow-ups |

Post-incident review checklist

- What signal worked and what was missed?

- Which access controls failed (or were absent)?

- Which step took too long and why (permissions, tooling, communication)?

- What would prevent recurrence (control, process, training)?

Metrics that keep you honest

| Metric | Why |

|---|---|

| Time to acknowledge | Measures detection/triage responsiveness |

| Time to contain | Measures operational capability |

| Evidence completeness | Ensures decisions are traceable |

| Follow-up closure rate | Ensures lessons actually ship |
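
The two timing metrics can be derived from timestamps the incident timeline already records. The sketch below assumes each incident has `detected`, `acknowledged`, and `contained` timestamps in UTC; those key names and the sample incidents are hypothetical.

```python
from datetime import datetime, timezone
from statistics import mean

def utc(year, month, day, hour, minute):
    """Shorthand for a timezone-aware UTC timestamp."""
    return datetime(year, month, day, hour, minute, tzinfo=timezone.utc)

def minutes_between(start, end):
    return (end - start).total_seconds() / 60

# Made-up example incidents.
INCIDENTS = [
    {"detected": utc(2024, 3, 1, 9, 0), "acknowledged": utc(2024, 3, 1, 9, 10),
     "contained": utc(2024, 3, 1, 10, 0)},
    {"detected": utc(2024, 3, 8, 14, 0), "acknowledged": utc(2024, 3, 8, 14, 30),
     "contained": utc(2024, 3, 8, 16, 0)},
]

def response_metrics(incidents):
    """Mean minutes from detection to acknowledgement and to containment."""
    return {
        "mean_minutes_to_acknowledge": mean(
            minutes_between(i["detected"], i["acknowledged"]) for i in incidents),
        "mean_minutes_to_contain": mean(
            minutes_between(i["detected"], i["contained"]) for i in incidents),
    }

print(response_metrics(INCIDENTS))
```

Tracking the same two numbers after every incident and drill shows whether tabletop practice is actually shortening response times.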

This keeps a small-team IR playbook professional: practiced regularly, communicated clearly, and improved with measurable actions.