A personal cybersecurity lab is the fastest way to turn learning into evidence. It gives you a place to test ideas safely, write real reports, and build repeatable workflows across offensive and defensive security.

The goal is not to build the biggest lab. The goal is to build a controlled lab that is legal, stable, and useful for long-term skill growth.

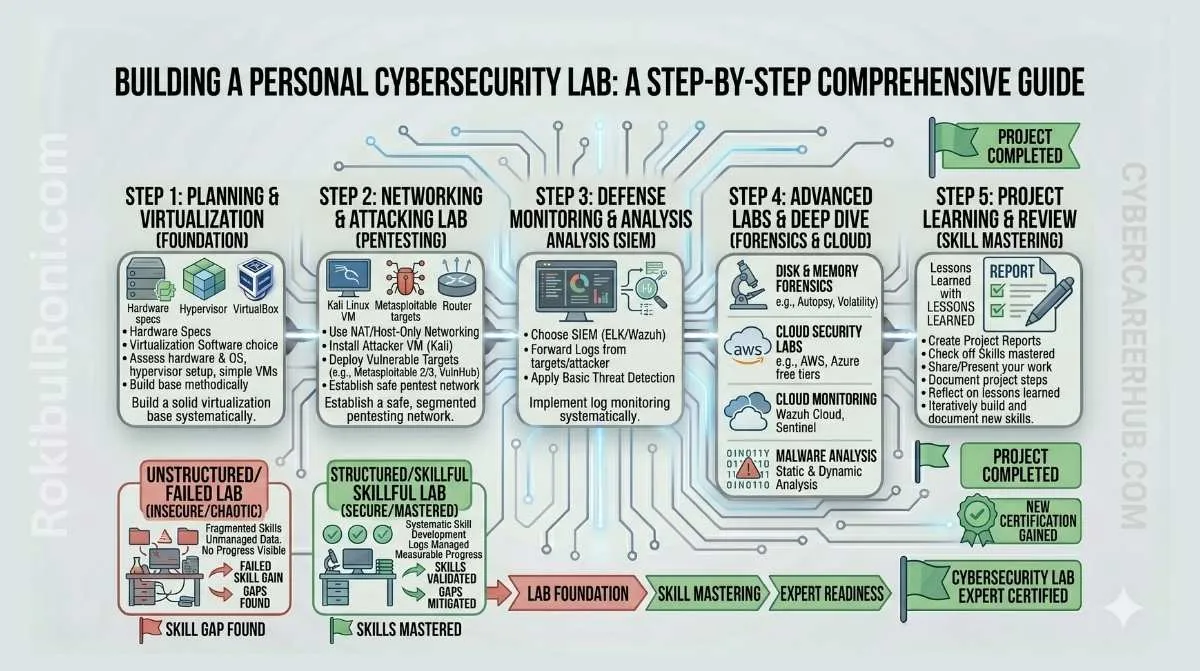

Building a personal cybersecurity lab

Use this framework to create a safe, practical lab for pentesting, SIEM, forensics, and cloud security practice.

1) Why a personal lab matters more than passive learning

- Converts theory into repeatable technical skills

- Builds portfolio artifacts employers can evaluate

- Improves troubleshooting confidence across tools and systems

- Creates a safe environment for experimentation and failure

- Trains reporting, documentation, and remediation thinking

A lab turns “I watched a tutorial” into “I can execute and explain the workflow.”

2) Lab safety rules you should enforce from day one

Safety is non-negotiable. Most beginner lab problems come from weak boundaries, not advanced attacks.

Core lab safety rules

- Use isolated lab networks, separate from daily personal/work systems

- Test only on systems you own or are explicitly authorized to use

- Never target live third-party systems from lab activities

- Use VM/container snapshots before major testing changes

- Keep backups of important configs, scripts, and notes

- Apply resource limits so lab experiments do not destabilize host systems

Safety baseline table

| Rule | Why It Matters | Practical Check |
|---|---|---|
| Isolated networking | Prevents accidental spillover to real environments | Verify lab subnet and routing boundaries |
| Owned/authorized targets only | Avoids legal and ethical violations | Maintain target ownership list |
| Snapshot-first workflow | Enables safe rollback after mistakes | Snapshot before each scenario change |
| Backup discipline | Prevents loss of lab build and evidence | Weekly backup verification |
| Resource controls | Prevents host overload and service crashes | Set CPU/memory limits per workload |
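
The "Maintain target ownership list" check works best when your tooling enforces it before anything runs. Below is a minimal Python sketch. It assumes your authorized scope is stored as a list of lab CIDR ranges. The ranges and the names `AUTHORIZED_SCOPE`, `is_in_scope`, and `require_scope` are hypothetical.

```python
import ipaddress

# Hypothetical scope register: only lab subnets you own or are authorized to test.
AUTHORIZED_SCOPE = [
    ipaddress.ip_network("10.10.10.0/24"),  # example pentest segment
    ipaddress.ip_network("10.10.20.0/24"),  # example SIEM segment
]


def is_in_scope(target: str) -> bool:
    """Return True only if target is an IP address inside an authorized lab subnet."""
    try:
        addr = ipaddress.ip_address(target)
    except ValueError:
        # Hostnames and malformed input are rejected; resolve first, then re-check the IP.
        return False
    # An IPv6 address tested against an IPv4 network is simply "not in" it.
    return any(addr in net for net in AUTHORIZED_SCOPE)


def require_scope(target: str) -> None:
    """Raise before any scan or exploit step if the target is out of scope."""
    if not is_in_scope(target):
        raise PermissionError(f"{target} is not in the authorized lab scope")


if __name__ == "__main__":
    for t in ["10.10.10.5", "8.8.8.8", "juice-shop.local"]:
        print(t, "in scope" if is_in_scope(t) else "BLOCKED")
```

Call `require_scope()` at the top of every scan or exploit script. That turns the ownership list into a hard stop rather than a reminder.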

3) Lab architecture tracks (modular design)

Build your lab as separate tracks so learning remains focused and safe.

Track A: Pentesting lab

Core components

- Kali/Parrot-style attacker VM

- OWASP Juice Shop / DVWA-style vulnerable web targets

- Metasploitable-style training hosts in isolated segment

- Burp Suite + Nmap toolchain

Learning objectives

- Recon and scoped enumeration

- Web/API validation workflows

- Safe evidence capture and reporting

Track B: SIEM lab

Core components

- Wazuh or ELK (or Splunk under a free or developer license)

- Windows/Linux log-forwarding endpoints

- Central log storage and dashboarding

Learning objectives

- Detection rule building and tuning

- Alert triage and false-positive reduction

- Incident timeline creation
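
Wazuh and ELK each express detection rules in their own syntax. The logic underneath is often a threshold over a time window. Below is a platform-neutral Python sketch of a failed-login rule. It assumes authentication events have already been parsed into timestamp, source IP, and outcome. `AuthEvent` and `brute_force_alerts` are illustrative names, not a SIEM API.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class AuthEvent:          # hypothetical parsed log record
    ts: float             # epoch seconds
    src_ip: str
    success: bool


def brute_force_alerts(events, threshold=5, window_s=60.0, allowlist=frozenset()):
    """Alert when one source has >= threshold failed logins within window_s seconds.

    Returns (src_ip, first_failure_ts, triggering_ts, count) tuples. The allowlist
    holds known-noisy sources, such as a scanner you run on purpose; tuning it is
    one way to cut false positives.
    """
    recent = defaultdict(deque)   # src_ip -> timestamps of failures still in the window
    alerted = set()
    alerts = []
    for ev in sorted(events, key=lambda e: e.ts):
        if ev.success or ev.src_ip in allowlist:
            continue
        q = recent[ev.src_ip]
        q.append(ev.ts)
        # Drop failures that have slid out of the time window.
        while q and ev.ts - q[0] > window_s:
            q.popleft()
        if len(q) >= threshold and ev.src_ip not in alerted:
            alerts.append((ev.src_ip, q[0], ev.ts, len(q)))
            alerted.add(ev.src_ip)  # one alert per source keeps triage manageable
    return alerts
```

Tuning means adjusting `threshold`, `window_s`, and the allowlist against real lab traffic, then recording each change. That is the false-positive reduction work listed above.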

Track C: Network lab

Core components

- Wireshark analysis node

- Snort/Suricata IDS sensor (optional next step)

- Sample PCAP repository

Learning objectives

- Packet triage workflow

- Protocol-level anomaly review

- Network evidence reporting
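
Packet triage often starts with "who is talking to whom." The minimal sketch below reads a classic libpcap file using only the standard library and counts IPv4 source/destination pairs. It assumes the Ethernet link type. It ignores pcapng, nanosecond-resolution files, VLAN tags, and IPv6, all of which Wireshark handles properly. The function names are hypothetical.

```python
import ipaddress
import struct
from collections import Counter

LINKTYPE_ETHERNET = 1


def read_pcap(data: bytes):
    """Yield (linktype, ts_sec, packet_bytes) from a classic pcap file (not pcapng)."""
    magic = struct.unpack("<I", data[:4])[0]
    if magic == 0xA1B2C3D4:
        endian = "<"          # file was written little-endian
    elif magic == 0xD4C3B2A1:
        endian = ">"          # file was written big-endian
    else:
        raise ValueError("not a classic microsecond-resolution pcap file")
    linktype = struct.unpack(endian + "I", data[20:24])[0]
    offset = 24               # the global header is 24 bytes
    while offset + 16 <= len(data):   # each record header is 16 bytes
        ts_sec, _ts_usec, incl_len, _orig_len = struct.unpack(
            endian + "IIII", data[offset:offset + 16])
        offset += 16
        yield linktype, ts_sec, data[offset:offset + incl_len]
        offset += incl_len


def top_talkers(data: bytes, n: int = 5):
    """Count IPv4 (src, dst) pairs in Ethernet frames and return the n most common."""
    pairs = Counter()
    for linktype, _ts, pkt in read_pcap(data):
        if linktype != LINKTYPE_ETHERNET or len(pkt) < 34:
            continue
        if struct.unpack("!H", pkt[12:14])[0] != 0x0800:   # EtherType must be IPv4
            continue
        # The IPv4 header starts at byte 14; source is at +12, destination at +16.
        src = str(ipaddress.IPv4Address(pkt[26:30]))
        dst = str(ipaddress.IPv4Address(pkt[30:34]))
        pairs[(src, dst)] += 1
    return pairs.most_common(n)
```

Read a file from your sample PCAP repository with `open(path, "rb").read()` and pass the bytes to `top_talkers`. Then open the pairs that look unusual in Wireshark.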

Track D: Forensics lab

Core components

- Autopsy workstation

- Known-safe disk images and artifact datasets

- Timeline and browser artifact practice materials

Learning objectives

- Chain-of-custody mindset

- Artifact extraction and timeline reconstruction

- Investigation summary writing
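
A chain-of-custody mindset starts with proving the evidence did not change. Below is a minimal sketch that assumes evidence images are plain files on the forensics workstation. It hashes an image with SHA-256 and builds a custody log entry. The function names and log fields are hypothetical; this is not an Autopsy feature.

```python
import hashlib
from datetime import datetime, timezone


def sha256_file(path, chunk_size=1 << 20):
    """Hash a file in 1 MiB chunks so large disk images need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def custody_entry(path, action, analyst):
    """Hypothetical log record: who did what to which file, and the hash seen then."""
    return {
        "time_utc": datetime.now(timezone.utc).isoformat(),
        "file": str(path),
        "sha256": sha256_file(path),
        "action": action,
        "analyst": analyst,
    }


def verify_evidence(path, recorded_sha256):
    """True if the file still matches the hash recorded at acquisition."""
    return sha256_file(path) == recorded_sha256.lower()
```

Hash each image at acquisition and again before and after every analysis session. A mismatch means the working copy can no longer be trusted.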

Track E: Cloud lab

Core components

- AWS/GCP free-tier learning projects (safe baseline focus)

- IAM baseline checks and logging validation

- Cloudflare-style edge-control experiments for a public test app

Learning objectives

- Identity and permission hardening

- Cloud logging and visibility controls

- Public exposure risk reduction
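
An IAM baseline check can start with the most common over-permission pattern: Allow statements with wildcard actions or resources. Below is a deliberately small sketch over an AWS-style JSON policy document loaded into a dict. It ignores `Condition` blocks, Deny interactions, and resource-based policies. Treat it as a learning aid, not a replacement for the provider's own policy analysis tools. The function names are hypothetical.

```python
def _as_list(value):
    """Statement, Action, and Resource may each be a single value or a list."""
    return value if isinstance(value, list) else [value]


def risky_statements(policy: dict):
    """Return (statement_index, issue) pairs for overly broad Allow statements."""
    findings = []
    for i, stmt in enumerate(_as_list(policy.get("Statement", []))):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action", []))
        resources = _as_list(stmt.get("Resource", []))
        if "*" in actions:
            findings.append((i, "Action is '*' (all actions)"))
        elif any(a.endswith(":*") for a in actions):
            findings.append((i, "service-wide wildcard action"))
        if "*" in resources:
            findings.append((i, "Resource is '*'"))
        if "NotAction" in stmt:
            findings.append((i, "Allow with NotAction (broad by construction)"))
    return findings
```

Load exported policies with `json.load` and record each finding in the baseline checklist, together with the least-privilege replacement you chose.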

4) Hardware and software options by budget/complexity

Start small and scale only when your workflow justifies it.

| Option | Best For | Pros | Constraints |
|---|---|---|---|
| Single laptop | Beginners and students | Low cost, simple setup | Limited CPU/RAM for multi-track labs |
| Mini PC + laptop | Intermediate learners | Better isolation and always-on lab options | Extra hardware management |
| Cloud VM-assisted hybrid | Cloud/security focus and remote access practice | Flexible scaling, cloud-native experience | Ongoing cost and access control needs |
| Full homelab server | Advanced multi-service simulations | High capacity and persistent environment | Power, complexity, and maintenance overhead |

Software stack options

- VirtualBox / VMware for VM segmentation

- Docker and Docker Compose for fast service labs

- Git/GitHub for versioning configs and scripts

- Markdown-based notes for case-study style documentation

5) Practical lab architecture table

| Lab Segment | Purpose | Example Components | Isolation Boundary | Typical Output |
|---|---|---|---|---|
| Pentest Segment | Web/API and host security testing practice | Kali + Juice Shop + DVWA + Burp | Isolated attack subnet | Findings report with evidence |
| SIEM Segment | Detection and monitoring practice | Wazuh/ELK + log sources | Internal logging network | Detection rule + triage notes |
| Network Segment | Packet analysis and IDS fundamentals | Wireshark + sample PCAP + IDS sensor | Controlled capture zone | Traffic analysis summary |
| Forensics Segment | Artifact and timeline analysis | Autopsy + disk images | Read-only evidence workflow | Case timeline and artifact report |
| Cloud Segment | IAM/logging/baseline hardening | AWS/GCP sandbox + Cloudflare edge controls | Separate cloud project/accounts | Baseline checklist and gap register |

Use this table as your lab design blueprint before deploying tools.
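
Before you deploy anything, you can check the blueprint's isolation boundaries mechanically: segment subnets should not overlap, and they should stay in private address space. Below is a minimal sketch using Python's standard `ipaddress` module. The segment names follow the table, but the CIDR ranges are hypothetical placeholders. The cloud segment is left out because separate accounts isolate it, not a local subnet.

```python
import ipaddress
from itertools import combinations

# Hypothetical CIDR plan for the local lab segments.
SEGMENTS = {
    "pentest": "10.10.10.0/24",
    "siem": "10.10.20.0/24",
    "network": "10.10.30.0/24",
    "forensics": "10.10.40.0/24",
}


def overlapping_segments(segments):
    """Return pairs of segment names whose subnets overlap (they should be disjoint)."""
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in segments.items()}
    return [(a, b) for a, b in combinations(nets, 2) if nets[a].overlaps(nets[b])]


def public_segments(segments):
    """Return segments whose subnets fall outside private address space."""
    return [name for name, cidr in segments.items()
            if not ipaddress.ip_network(cidr).is_private]
```

Rerun both checks whenever you add a segment. Both lists should come back empty.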

6) How to connect tracks without breaking containment

Cross-track integration creates realistic workflows, but only if boundaries stay controlled.

Safe integration patterns

- Forward logs from pentest targets into SIEM segment

- Export PCAP snapshots from network segment for forensics review

- Feed cloud audit events into centralized log analysis workflow

- Keep management interfaces reachable only from admin workstation

Boundary controls

- Use separate subnets/networks per track

- Restrict bridge points to documented ports and protocols

- Remove temporary bridge rules after exercises

- Validate no route exists from lab to unauthorized external targets
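
Documented bridge points are easier to audit when the register itself is data. Below is a minimal default-deny sketch. It assumes each bridge is recorded with source segment, destination segment, port, protocol, and an optional expiry for temporary exercise rules. `BridgeRule`, `is_allowed`, and `expired_rules` are hypothetical names. The register documents intent; it does not configure a firewall.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class BridgeRule:                        # hypothetical register entry
    src: str                             # source segment
    dst: str                             # destination segment
    port: int
    proto: str                           # "tcp" or "udp"
    reason: str                          # why the bridge exists
    expires: Optional[datetime] = None   # None = permanent documented bridge


def is_allowed(rules, src, dst, port, proto, now):
    """Default deny: a flow is allowed only if a matching, unexpired rule exists.

    Use timezone-aware datetimes consistently; mixing naive and aware ones raises.
    """
    return any(
        r.src == src and r.dst == dst and r.port == port and r.proto == proto
        and (r.expires is None or now < r.expires)
        for r in rules
    )


def expired_rules(rules, now):
    """Temporary bridges past expiry; remove them from the real firewall as well."""
    return [r for r in rules if r.expires is not None and now >= r.expires]
```

Reviewing `expired_rules()` output after each exercise is one way to make sure temporary bridges actually get removed.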

7) Project ideas for portfolio-ready outputs

Each lab project should create a visible artifact.

| Project Idea | Track | Portfolio Artifact |
|---|---|---|
| API authorization review in test app | Pentest | Structured pentest report + remediation notes |
| Detection tuning for web attack patterns | SIEM | Rule tuning changelog + dashboard screenshot pack |
| Suspicious outbound traffic triage | Network + SIEM | Packet + log correlation case note |
| Browser artifact timeline reconstruction | Forensics | Investigation report with timeline table |
| Cloud IAM baseline review | Cloud | Baseline checklist + prioritized remediation plan |
| End-to-end mini incident simulation | Multi-track | Incident timeline + containment and lessons learned |

Portfolio quality grows when projects include method, evidence, and reflection.

8) Common mistakes in personal lab building

- Unsafe networking that exposes vulnerable services externally

- Deploying too many tools before mastering fundamentals

- Weak documentation and no repeatable runbooks

- No cleanup strategy, leading to unstable lab state

- Ignoring report writing after technical exercises

- Mixing sensitive personal data into lab workflows

Fast guardrails

- At most one new major tool per month

- One complete writeup per project

- One cleanup and snapshot review cycle per week

- One architecture diagram update per major lab change

9) Documentation and operational discipline

A lab becomes powerful when it is reproducible and explainable.

Minimum documentation set

- Lab architecture diagram (updated regularly)

- Asset and target inventory

- Network map with boundaries and bridge points

- Standard runbook: start, stop, reset, backup, restore

- Evidence template for findings and incident notes

- Lessons-learned log after each project
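
An asset and target inventory is easier to keep current when you generate it from structured data kept in version control. Below is a minimal sketch that renders a Markdown inventory table and flags duplicate IP assignments. The `Asset` fields and the function names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Asset:            # hypothetical inventory record
    name: str
    kind: str           # e.g. "VM" or "container"
    segment: str        # lab segment the asset lives in
    ip: str
    purpose: str


def _cell(text: str) -> str:
    """Escape pipes so a value cannot break the Markdown table."""
    return text.replace("|", "\\|")


def inventory_markdown(assets):
    """Render assets, sorted by name, as a Markdown table."""
    rows = ["| Name | Kind | Segment | IP | Purpose |", "|---|---|---|---|---|"]
    for a in sorted(assets, key=lambda a: a.name):
        cells = (a.name, a.kind, a.segment, a.ip, a.purpose)
        rows.append("| " + " | ".join(_cell(c) for c in cells) + " |")
    return "\n".join(rows) + "\n"


def duplicate_ips(assets):
    """IPs assigned to more than one asset, usually a sign of a stale inventory."""
    counts = Counter(a.ip for a in assets)
    return sorted(ip for ip, n in counts.items() if n > 1)
```

Committing the generated table next to the data keeps the inventory page and the architecture diagram in step.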

Operational checklist

| Task | Frequency | Owner |
|---|---|---|
| Snapshot critical systems | Before major changes | You |
| Backup configs and notes | Weekly | You |
| Review exposed services | Weekly | You |
| Prune stale containers/VMs | Every 1–2 weeks | You |
| Validate restore process | Monthly | You |
| Update architecture docs | Monthly or after major changes | You |
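
"Validate restore process" is the row people skip most often. Below is a minimal sketch that archives a config directory with `tarfile`, restores it into a scratch directory, and compares SHA-256 hashes file by file. The function names are hypothetical. The archive must live outside the directory being backed up.

```python
import hashlib
import tarfile
import tempfile
from pathlib import Path


def tree_hashes(root: Path) -> dict:
    """Map each file's path relative to root to its SHA-256 digest."""
    return {
        p.relative_to(root).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def backup(src: Path, archive: Path) -> None:
    """Write every file under src into a gzip-compressed tar archive."""
    with tarfile.open(archive, "w:gz") as tar:
        for p in sorted(src.rglob("*")):
            if p.is_file():
                tar.add(p, arcname=p.relative_to(src).as_posix())


def validate_restore(src: Path, archive: Path) -> bool:
    """Restore the archive into a scratch directory and compare it with src."""
    with tempfile.TemporaryDirectory() as scratch:
        with tarfile.open(archive, "r:gz") as tar:
            if hasattr(tarfile, "data_filter"):
                tar.extractall(scratch, filter="data")  # safer extraction where supported
            else:
                tar.extractall(scratch)
        return tree_hashes(Path(scratch)) == tree_hashes(src)
```

A `False` result right after a backup means the archive is incomplete. A `False` result weeks later may simply mean the configs changed, which is itself a cue to take a fresh backup.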

10) 4-week beginner starter lab roadmap

Week 1: Foundation and safe setup

- Build base virtualization/container host

- Create isolated network segments for at least two tracks

- Deploy first vulnerable web target and one logging endpoint

Output: baseline architecture diagram + setup checklist

Week 2: Add SIEM and visibility

- Deploy SIEM stack (or lightweight equivalent)

- Ingest logs from web target and host systems

- Create first dashboard and basic alert rule

Output: log ingestion validation + first detection artifact
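
Log ingestion validation can be as simple as confirming that every expected source has reported recently. Below is a minimal sketch, assuming you can export (source, timestamp) pairs from a SIEM search. The function names, source names, and the 15-minute gap are hypothetical defaults to tune for your lab.

```python
from datetime import timedelta


def last_seen_by_source(events):
    """events: iterable of (source_name, timestamp) pairs, e.g. exported from a SIEM search."""
    seen = {}
    for source, ts in events:
        if source not in seen or ts > seen[source]:
            seen[source] = ts
    return seen


def silent_sources(events, expected_sources, now, max_gap=timedelta(minutes=15)):
    """Expected sources with no event within max_gap of now, or no event at all."""
    seen = last_seen_by_source(events)
    return [s for s in expected_sources if s not in seen or now - seen[s] > max_gap]
```

An empty result for every expected source is a reasonable "log ingestion validation" artifact for Week 2.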

Week 3: Practice workflow execution

- Run one scoped pentest exercise on lab target

- Capture evidence and produce short report

- Correlate at least one event in SIEM timeline

Output: mini pentest report + correlation note
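
Correlation here means lining up what you did during the pentest with what the SIEM recorded. Below is a minimal sketch that assumes both your pentest action log and the exported SIEM alerts carry a timestamp and a target host. The `Event` fields, the function names, and the two-minute window are hypothetical choices.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:            # hypothetical normalized record
    ts: datetime
    host: str           # target host the event concerns
    source: str         # e.g. "pentest-log" or "siem"
    description: str


def correlate(actions, alerts, window=timedelta(minutes=2)):
    """Pair each pentest action with SIEM alerts on the same host within +/- window."""
    return [
        (act, [al for al in alerts if al.host == act.host and abs(al.ts - act.ts) <= window])
        for act in actions
    ]


def merged_timeline(*streams):
    """Interleave several event streams into one time-ordered timeline."""
    return sorted((e for stream in streams for e in stream), key=lambda e: e.ts)
```

Actions with no matching alert are candidate detection gaps. They feed straight into the next round of rule tuning.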

Week 4: Forensics and cloud baseline extension

- Perform one artifact/timeline exercise in forensics track

- Create simple cloud baseline checklist in sandbox project

- Document full lab runbook and cleanup process

Output: end-of-month lab playbook + portfolio artifact bundle

11) Maturity levels for personal lab growth

| Maturity Level | Characteristics | Next Goal |
|---|---|---|
| Level 1: Starter | One or two tracks, basic isolation, manual workflows | Stabilize and document repeatable operations |
| Level 2: Structured | Multi-track with logging and evidence discipline | Improve automation and detection tuning |
| Level 3: Integrated | Cross-track correlation and incident simulation | Build role-specific specialization projects |
| Level 4: Portfolio-Ready | Consistent case studies with professional reporting quality | Align artifacts to target career role |

Progress comes from consistency, not complexity.

A personal cybersecurity lab becomes career-changing when it stays safe, modular, and documented: ethical boundaries first, reproducible workflows second, and portfolio-quality outputs every month.

Lab operations worksheet for long-term growth

| Workstream | Owner | First Action | Validation Signal |
|---|---|---|---|
| Safety governance | You | Maintain isolation and target-ownership register | Zero unauthorized testing behavior |
| Track integration | You | Define controlled bridges between lab segments | Better cross-track investigation capability |
| Documentation quality | You | Update runbooks and diagrams after each major change | Faster rebuild and onboarding consistency |
| Portfolio output | You | Convert each lab project into publishable artifact | Career evidence grows steadily |

Weekly operations checklist

- Verify exposed services and network boundaries

- Snapshot critical systems before major lab changes

- Back up configs, scripts, and notes

- Close stale tasks from prior lab exercises
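
You can partly automate the exposed-services check with a TCP connect test against your own lab hosts. Run it from the network you want to protect, for example your personal device segment. Below is a minimal sketch using only the standard `socket` module. The function names are hypothetical, and the host and port list you pass in come from your own inventory. Nmap gives far more complete results.

```python
import socket


def is_open(host, port, timeout=0.5):
    """True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:            # refused, unreachable, or timed out
        return False


def unexpected_exposure(host, ports, expected):
    """Ports that answer on host but are not in the documented (expected) set."""
    allowed = set(expected)
    return sorted(p for p in ports if p not in allowed and is_open(host, p))
```

Run it only against hosts listed in your inventory. An empty result is the "no unintended exposure" signal for that host.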

Lab artifact and handoff pack

| Artifact | Minimum Content | Consumer |
|---|---|---|
| Architecture doc | Segments, hosts, tools, trust boundaries | You/collaborators |
| Scenario workbook | Objective, setup, execution, evidence | Portfolio and learning review |
| Incident/finding report | Timeline, impact, remediation, retest notes | Career evidence set |
| Improvement backlog | Known lab gaps with priority and owner | Next-cycle planning |
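
A consistent finding report is easier to produce from a template than from a blank page. Below is a minimal sketch that renders the minimum report content (timeline, impact, remediation, retest notes) as Markdown. The `Finding` fields and the function names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Finding:                                   # hypothetical report record
    title: str
    target: str                                  # a lab asset from your inventory
    impact: str
    remediation: str
    timeline: List[Tuple[str, str]] = field(default_factory=list)  # (time, event)
    evidence: List[str] = field(default_factory=list)              # screenshot/PCAP/log files
    retest: str = "Not yet retested"


def render_report(f: Finding) -> str:
    """Render one finding as a Markdown section."""
    lines = [f"## {f.title}", "", f"**Target:** {f.target}", "",
             "### Timeline", "", "| Time | Event |", "|---|---|"]
    lines += [f"| {t} | {e} |" for t, e in f.timeline]
    lines += ["", "### Impact", "", f.impact, "", "### Evidence", ""]
    lines += [f"- {e}" for e in f.evidence] or ["- (none attached)"]
    lines += ["", "### Remediation", "", f.remediation, "", "### Retest", "", f.retest]
    return "\n".join(lines) + "\n"
```

Filling in `retest` after remediation closes the loop. The result is a report a third party can review without extra context.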

Quality checks

- Can you rerun a scenario from documentation only?

- Are evidence artifacts clear enough for third-party review?

- Are lessons learned converted into concrete lab improvements?

90-day lab capability cadence

Days 1–30

- Harden baseline safety controls across all active tracks

- Complete one end-to-end scenario with documented outputs

- Publish architecture and runbook v1

Days 31–60

- Add cross-track correlation (pentest signal to SIEM to forensics)

- Improve repeatability through templates and automation

- Produce one portfolio-ready case study

Days 61–90

- Run mini incident simulation using multiple tracks

- Measure response/reporting quality and refine workflows

- Publish next-quarter lab roadmap by specialization goal

| KPI | Why It Matters |
|---|---|
| Reproducible scenario rate | Indicates documentation and setup quality |
| Time to rebuild lab state | Operational resilience measure |
| Portfolio artifact completion rate | Tracks career-output consistency |
| Safety incident count | Confirms containment discipline |
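
Once each scenario run is recorded, these KPIs are simple ratios and counts. Below is a minimal sketch that assumes you log one record per run. The record fields, the function names, and the choice of median rebuild time are all hypothetical.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class ScenarioRun:                        # hypothetical per-run record
    name: str
    reproduced_from_docs: bool            # rerun succeeded using documentation only
    rebuild_minutes: Optional[float]      # None if the lab was not rebuilt this run
    artifact_published: bool
    safety_incidents: int = 0


def lab_kpis(runs):
    """Compute the four KPIs from a non-empty list of runs."""
    if not runs:
        raise ValueError("no scenario runs recorded")
    rebuilds = [r.rebuild_minutes for r in runs if r.rebuild_minutes is not None]
    return {
        "reproducible_rate": sum(r.reproduced_from_docs for r in runs) / len(runs),
        "median_rebuild_minutes": median(rebuilds) if rebuilds else None,
        "artifact_completion_rate": sum(r.artifact_published for r in runs) / len(runs),
        "safety_incident_count": sum(r.safety_incidents for r in runs),
    }
```

The median keeps one unusually slow rebuild from hiding an otherwise steady trend.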

A personal lab reaches advanced maturity when safety, reproducibility, and evidence quality are maintained as continuous habits rather than occasional cleanup tasks.

Lab operations (maintenance, safety, and reproducibility)

The difference between a “fun lab” and a professional lab is operational discipline: you can rebuild it, explain it, and keep it safe.

Maintenance cadence

| Task | Cadence | Output |
|---|---|---|
| Patch host OS + hypervisor | Monthly | Change note + reboot window |
| Update tools/images | Monthly | Version snapshot |
| Review exposed services | Weekly | “No unintended exposure” check |
| Backup critical configs | Weekly | Restorable backup archive |

Safety checklist

- Keep the lab segmented from personal devices.

- Avoid exposing lab services to the public internet.

- Use test accounts and synthetic data.

- Document what’s running and why (reduce forgotten services).

Reproducibility standards

- Maintain a single “lab inventory” page: VMs/containers, roles, IP ranges, purpose.

- Store configs and notes in version control.

- Write short runbooks: start/stop, reset, and restore procedures.

This keeps the lab professional and relevant: predictable maintenance, safe defaults, and evidence that you can reproduce work like a real engagement.