An IDS that alerts on everything teaches analysts to ignore everything. SNORT tuning is not about silencing alerts. It is about increasing signal quality so the SOC can detect meaningful threats without drowning in repetitive noise.

Good tuning balances two goals that often conflict: reduce false positives and preserve visibility on high-risk activity.

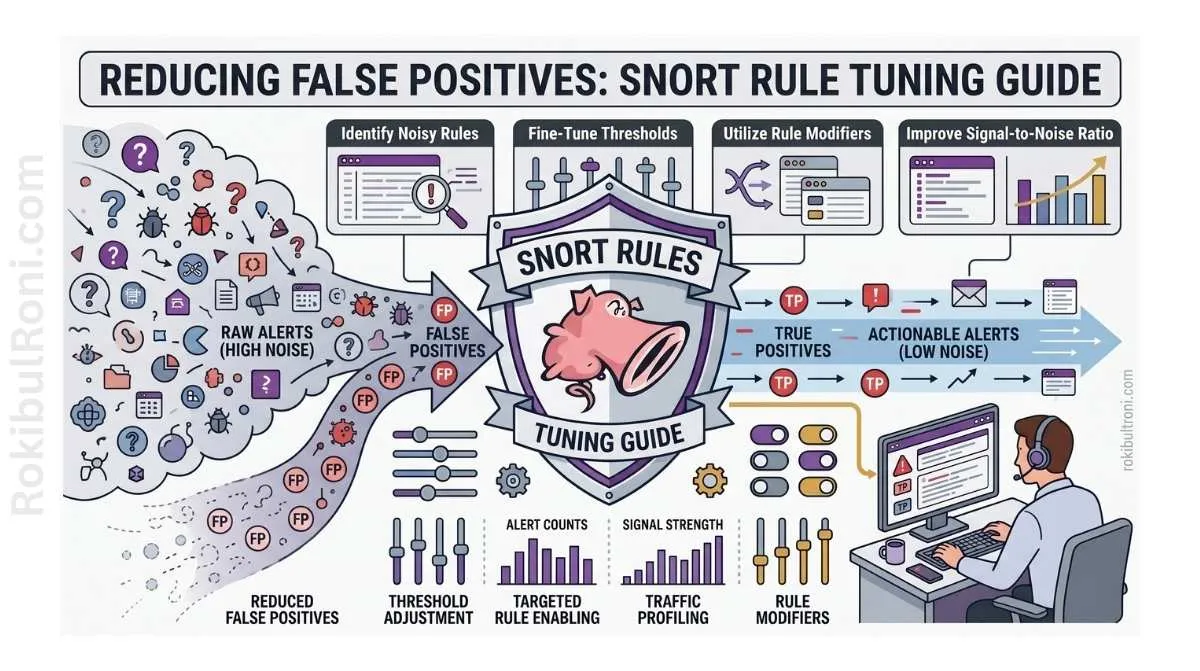

SNORT rule tuning guide

Use this workflow to tune SNORT responsibly across enterprise and lab networks.

1) Why IDS tuning matters

- Reduces analyst fatigue from low-value alert storms

- Improves triage speed for true security events

- Increases confidence in escalation decisions

- Preserves visibility where it matters most

- Makes detection engineering measurable and repeatable

Without tuning, even strong IDS signatures become operationally weak.

2) Noise reduction vs visibility loss

Disabling noisy alerts can solve short-term pain but create long-term blind spots.

| Tuning Choice | Short-Term Effect | Long-Term Risk | Better Alternative |
|---|---|---|---|
| Disable entire rule category | Immediate alert drop | Loss of attack-surface coverage | Threshold tuning + context filtering |
| Broad suppression by source range | Fewer repeated alerts | Masks compromised internal hosts | Narrow suppression with expiration |
| Ignore low severity globally | Reduces queue size | Misses attack chains that escalate from low- to high-severity events | Use risk-based correlation and escalation rules |
| Keep all defaults unchanged | Preserves raw visibility | Analyst burnout and missed true positives | Structured baseline and phased tuning |

The objective is not “fewer alerts.” The objective is “better alerts.”

3) Required context before tuning begins

Tuning without environment context causes accidental over-suppression.

Context checklist

- Network zones and trust boundaries

- Critical assets and crown-jewel services

- Normal protocol/service profiles by zone

- Business-hour and maintenance-window patterns

- Known scanners and vulnerability assessment schedules

- Change-control calendar for infra/app updates

- Existing SOC escalation thresholds

Context mapping table

| Context Area | What to Capture | Why It Matters |
|---|---|---|
| Zone Model | Internal, DMZ, cloud, partner links | Same alert has different risk by zone |
| Asset Criticality | Business impact and service ownership | Drives prioritization and response urgency |
| Normal Traffic Patterns | Baseline ports/protocols/volumes | Distinguishes routine traffic from anomalies |
| Approved Scanners | Source ranges and windows | Prevents scanner noise from polluting queue |
| Change Events | Deployments, migrations, patch windows | Reduces false spikes during planned activity |
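
The zone model and approved-scanner rows above can be captured as data that triage tooling looks up. The following is a minimal sketch, not a definitive implementation: the zone names, address ranges, scanner window, and the helpers `zone_for` and `is_approved_scan` are all hypothetical, and it assumes scan windows are expressed in UTC and do not cross midnight.

```python
from dataclasses import dataclass
from datetime import datetime, time
from ipaddress import ip_address, ip_network

# Hypothetical zone model; the ranges are placeholders, not real inventory.
ZONES = {
    "dmz": ip_network("192.0.2.0/24"),
    "internal": ip_network("10.0.0.0/8"),
}


@dataclass(frozen=True)
class ScannerWindow:
    """An approved scanner source range and its daily scan window (UTC)."""
    source: str  # CIDR notation
    start: time
    end: time


# Hypothetical approved scanner: a /28 that scans between 01:00 and 03:00.
APPROVED_SCANNERS = [ScannerWindow("10.20.30.0/28", time(1, 0), time(3, 0))]


def zone_for(ip: str) -> str:
    """Return the first zone whose range contains ip, else 'external'."""
    addr = ip_address(ip)
    for name, network in ZONES.items():
        if addr in network:
            return name
    return "external"


def is_approved_scan(src_ip: str, seen_at: datetime) -> bool:
    """True only if the source is an approved scanner inside its window."""
    addr = ip_address(src_ip)
    return any(
        addr in ip_network(w.source) and w.start <= seen_at.time() <= w.end
        for w in APPROVED_SCANNERS
    )
```

Checking both the source range and the time window keeps scanner handling narrow: the same scanner address alerting outside its window is still treated as unexplained traffic.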

4) Practical SNORT tuning workflow

Treat tuning as an engineering cycle, not a one-time cleanup.

Step-by-step process

- Baseline current alerts
  - Capture 2–4 weeks of alert trends by rule, source, destination, and zone.

- Classify noise vs potential signal
  - Split recurring alerts into benign repetitive patterns, unknowns, and actionable candidates.

- Validate likely true positives
  - Correlate with firewall, endpoint, and server logs before tuning decisions.

- Adjust thresholds and detection context
  - Tune rate-based triggers and add context-based filtering where safe.

- Document suppressions with ownership and expiry
  - Every suppression needs reason, approver, and review date.

- Review rule categories and coverage impact
  - Confirm tuning does not remove detection in critical zones.

- Test changes in controlled rollout
  - Validate impact in staging or phased production segment.

- Monitor and iterate
  - Reassess alert quality and missed-detection indicators weekly.
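
The first two steps (baseline, then classify) can be sketched in a few lines. This is an illustrative sketch under stated assumptions: the alert dictionaries, their field names, and the functions `baseline`, `top_noisy_sids`, and `classify` are hypothetical, and real input would come from your own alert log or SIEM export.

```python
from collections import Counter

# Hypothetical parsed alerts; field names are assumptions for illustration.
ALERTS = [
    {"sid": 1000001, "src": "10.0.0.5", "dst": "10.0.1.10", "zone": "internal"},
    {"sid": 1000001, "src": "10.0.0.5", "dst": "10.0.1.10", "zone": "internal"},
    {"sid": 1000001, "src": "10.0.0.9", "dst": "10.0.1.10", "zone": "internal"},
    {"sid": 1000002, "src": "203.0.113.7", "dst": "192.0.2.20", "zone": "dmz"},
]


def baseline(alerts, keys=("sid", "src", "dst", "zone")):
    """Count alerts grouped by the chosen fields (rule, source, destination, zone)."""
    return Counter(tuple(alert[k] for k in keys) for alert in alerts)


def top_noisy_sids(alerts, n=20):
    """Return the n most frequent SIDs as (sid, count) pairs."""
    return Counter(alert["sid"] for alert in alerts).most_common(n)


def classify(sid, benign_sids, actionable_sids):
    """Bucket a SID using analyst-maintained sets; anything unreviewed is 'unknown'."""
    if sid in actionable_sids:
        return "actionable candidate"
    if sid in benign_sids:
        return "benign repetitive"
    return "unknown"
```

Checking the actionable set first means a SID that appears in both sets is never silently treated as benign.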

5) SNORT rule lifecycle table

| Lifecycle Stage | Purpose | Owner | Output |
|---|---|---|---|
| New Rule | Introduce signature/rule into monitoring set | Detection engineer | Rule metadata + deployment note |
| Observe | Watch alert behavior in real traffic | SOC analyst | Baseline alert profile |
| Tune | Adjust threshold/suppression/context | Detection engineer + SOC | Tuning change record |
| Validate | Confirm true-positive preservation and FP reduction | SOC lead | Validation report |
| Deploy | Promote tuned rule to production baseline | Platform owner | Approved release entry |
| Review | Periodic performance and relevance check | SOC + threat team | Rule performance scorecard |
| Retire | Remove obsolete or redundant rule | Detection governance owner | Retirement note and coverage mapping |

This lifecycle prevents “set and forget” detection decay.
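
One way to enforce the lifecycle is a small state machine that rejects skipped stages. The sketch below is an assumption-laden illustration: the stage names come from the table, but the allowed transitions (for example, Validate back to Tune, or Review to either Tune or Retire) are an interpretation rather than something the table specifies, and `Stage`, `ALLOWED`, and `advance` are hypothetical names.

```python
from enum import Enum


class Stage(Enum):
    NEW = "New Rule"
    OBSERVE = "Observe"
    TUNE = "Tune"
    VALIDATE = "Validate"
    DEPLOY = "Deploy"
    REVIEW = "Review"
    RETIRE = "Retire"


# Assumed transition map: linear by default, with loops back to Tune
# when validation fails or a periodic review finds the rule has drifted.
ALLOWED = {
    Stage.NEW: {Stage.OBSERVE},
    Stage.OBSERVE: {Stage.TUNE},
    Stage.TUNE: {Stage.VALIDATE},
    Stage.VALIDATE: {Stage.DEPLOY, Stage.TUNE},
    Stage.DEPLOY: {Stage.REVIEW},
    Stage.REVIEW: {Stage.TUNE, Stage.RETIRE},
    Stage.RETIRE: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Return target if the transition is allowed, otherwise raise ValueError."""
    if target not in ALLOWED[current]:
        raise ValueError(
            f"{current.value} -> {target.value} is not an allowed transition"
        )
    return target
```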

6) Alert fields analysts should always capture

SOC quality improves when every alert review captures consistent fields.

| Field | Why It Matters |
|---|---|
| Rule SID / Signature name | Identifies rule behavior and tuning lineage |
| Timestamp (UTC) | Enables cross-system timeline alignment |
| Source IP / Port | Supports source profiling and campaign tracking |
| Destination IP / Port | Links to asset criticality and service context |
| Protocol | Distinguishes expected vs suspicious communication patterns |
| Zone / Sensor location | Adds trust-boundary context |
| Alert count / rate | Helps identify burst anomalies and noisy patterns |
| Action taken | Documents triage path and containment relevance |
| Correlated telemetry references | Supports confidence scoring and escalation quality |

Minimum triage note format

- Rule SID and alert summary

- Asset criticality and owner

- Correlated logs reviewed

- Confidence level (low/medium/high)

- Escalation or closure rationale
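
A structured record makes the field list and the triage note format enforceable rather than aspirational. This is a minimal sketch: the class `AlertReview`, its field names, and the `triage_note` layout are hypothetical choices, not a standard schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

CONFIDENCE_LEVELS = ("low", "medium", "high")


@dataclass
class AlertReview:
    rule_id: str            # e.g. "gid:sid"
    signature: str
    timestamp: datetime     # must be timezone-aware UTC
    src: str                # "ip:port"
    dst: str                # "ip:port"
    protocol: str
    zone: str
    alert_count: int
    action_taken: str
    asset_criticality: str
    asset_owner: str
    confidence: str
    rationale: str
    correlated_refs: list = field(default_factory=list)

    def __post_init__(self):
        # Naive datetimes return None from utcoffset(), so they are rejected too.
        if self.timestamp.utcoffset() != timedelta(0):
            raise ValueError("timestamp must be timezone-aware UTC")
        if self.confidence not in CONFIDENCE_LEVELS:
            raise ValueError(f"confidence must be one of {CONFIDENCE_LEVELS}")


def triage_note(r: AlertReview) -> str:
    """Render the minimum triage note as one line per required item."""
    logs = ", ".join(r.correlated_refs) or "none reviewed"
    return "\n".join([
        f"Rule: {r.rule_id} ({r.signature})",
        f"Asset: {r.asset_criticality} criticality, owner {r.asset_owner}",
        f"Correlated logs: {logs}",
        f"Confidence: {r.confidence}",
        f"Rationale: {r.rationale}",
    ])
```

Rejecting non-UTC timestamps at construction time avoids timeline misalignment surfacing later during cross-system correlation.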

7) Integrating SNORT with Splunk, ELK, and Wazuh

SNORT is strongest when enriched by SIEM context and endpoint telemetry.

Integration goals

- Centralized alert visibility and deduplication

- Correlation with auth, endpoint, and firewall logs

- Risk scoring based on asset criticality and business context

- Faster incident handoff with linked evidence

Integration pattern table

| Platform | Practical Use | Output Benefit |
|---|---|---|
| Splunk | Alert aggregation, correlation searches, dashboards | Faster triage and trend visibility |
| ELK | Flexible enrichment pipelines and hunt pivots | Better investigation context and retention control |
| Wazuh | Endpoint + IDS alignment for host/network correlation | Higher confidence in escalation decisions |

Correlation examples (defensive)

- SNORT high-frequency auth-probe alerts + identity failed-login spikes

- SNORT web exploit pattern alert + WAF block/allow behavior changes

- SNORT suspicious outbound traffic alert + endpoint process anomaly
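
The first correlation example (auth-probe alerts plus failed-login spikes) reduces to a time-window join. The sketch below shows the idea in plain Python rather than in any SIEM query language; the function name `correlate_auth_probes`, the `(timestamp, host)` tuple shape, and the default window and threshold are all assumptions to adjust for your environment.

```python
from datetime import datetime, timedelta


def correlate_auth_probes(ids_alerts, failed_logins,
                          window=timedelta(minutes=5), min_failures=10):
    """Return IDS alerts corroborated by a failed-login spike on the same host.

    Both inputs are lists of (timestamp, host) tuples. An alert is kept when
    the host saw at least min_failures failed logins within +/- window of it.
    """
    corroborated = []
    for alert_time, host in ids_alerts:
        failures = sum(
            1
            for login_time, login_host in failed_logins
            if login_host == host and abs(login_time - alert_time) <= window
        )
        if failures >= min_failures:
            corroborated.append((alert_time, host, failures))
    return corroborated
```

The nested scan is quadratic, which is fine for a sketch; at production volume this join would normally run inside the SIEM.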

8) Metrics that show tuning quality

If tuning is not measured, it is mostly guesswork.

| Metric | Definition | Target Direction |
|---|---|---|
| Alert Volume | Total IDS alerts in period | Decrease with caution |
| True-Positive Rate | Confirmed incidents ÷ investigated alerts | Increase |
| False-Positive Rate | Non-actionable alerts ÷ investigated alerts | Decrease |
| Mean Time to Triage (MTTT) | Average analyst time to classify alert | Decrease |
| Coverage by Asset | % critical assets with meaningful IDS visibility | Increase |
| Suppression Review Compliance | % suppressions reviewed before expiry | Increase |

Track these monthly and tie major changes to specific tuning actions.
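
The definitions in the metrics table translate directly into arithmetic. This sketch uses the table's definitions as written (both rates are divided by investigated alerts); the function names, parameter names, and the choice to return `None` for an empty denominator are assumptions.

```python
from statistics import mean


def ratio(numerator, denominator):
    """Return numerator / denominator, or None when there is nothing to divide by."""
    return numerator / denominator if denominator else None


def tuning_metrics(total_alerts, investigated, confirmed, non_actionable,
                   triage_minutes, critical_assets, covered_assets,
                   suppressions_due, suppressions_reviewed):
    """Compute the monthly tuning metrics as fractions between 0 and 1."""
    return {
        "alert_volume": total_alerts,
        "true_positive_rate": ratio(confirmed, investigated),
        "false_positive_rate": ratio(non_actionable, investigated),
        "mean_time_to_triage_min": mean(triage_minutes) if triage_minutes else None,
        "coverage_by_asset": ratio(covered_assets, critical_assets),
        "suppression_review_compliance": ratio(suppressions_reviewed, suppressions_due),
    }
```

For example, 30 confirmed incidents out of 200 investigated alerts gives a true-positive rate of 0.15, and 150 non-actionable alerts out of the same 200 gives a false-positive rate of 0.75.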

9) Common mistakes in SNORT tuning

- Suppressing too broadly to reduce queue pressure

- Keeping suppressions without owner or expiration date

- Ignoring asset criticality when tuning thresholds

- Failing to retest rules after infrastructure changes

- Tuning in production without staged validation

- Treating scanner noise as permanent baseline behavior

- Not documenting why rule changes were made

Fast guardrails

- No suppression without reason + owner + review date

- No category-wide disable without coverage impact review

- No threshold change without before/after metric snapshot
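
The suppression guardrail can be checked automatically before a change is approved. The following is a sketch only: the `Suppression` record, the `guardrail_violations` helper, and the `/24` minimum prefix length are hypothetical policy choices (and the prefix threshold assumes IPv4 ranges).

```python
from dataclasses import dataclass
from datetime import date
from ipaddress import ip_network
from typing import Optional

# Assumed policy: IPv4 suppressions may not cover more than a /24.
MIN_PREFIX_LEN = 24


@dataclass
class Suppression:
    sid: int
    source: str                  # CIDR range the suppression applies to
    reason: str
    owner: str
    review_date: Optional[date]


def guardrail_violations(s: Suppression, today: date) -> list:
    """Return a list of guardrail problems; an empty list means the record passes."""
    problems = []
    if not s.reason.strip():
        problems.append("missing reason")
    if not s.owner.strip():
        problems.append("missing owner")
    if s.review_date is None:
        problems.append("missing review date")
    elif s.review_date < today:
        problems.append("review date has passed")
    if ip_network(s.source, strict=False).prefixlen < MIN_PREFIX_LEN:
        problems.append("source range broader than policy allows")
    return problems
```

Returning every violation at once, rather than failing on the first, gives the requester a complete fix list in a single review pass.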

10) Monthly SNORT tuning checklist

| Weekly Cycle | Action | Deliverable |
|---|---|---|
| Week 1 | Baseline and trend review by top noisy SIDs | Top-noise report + criticality map |
| Week 2 | Validate suspected false positives with log correlation | FP validation log |
| Week 3 | Apply controlled threshold/suppression adjustments | Tuning change set + approvals |
| Week 4 | Measure impact and review missed-signal risk | Monthly tuning scorecard |

Monthly governance checklist

- Top 20 noisy rules reviewed

- All active suppressions have owner and expiry

- Critical asset coverage verified after changes

- True-positive and false-positive rates updated

- Incident lessons fed back into rule improvements

- Next month tuning priorities documented

A mature SNORT program does not chase silence. It continuously improves alert quality so analysts can act faster, miss less, and maintain visibility where operational risk is highest.

11) Alert acceptance criteria for tuned rules

Tuning decisions should be judged against consistent acceptance criteria, not analyst intuition alone.

| Acceptance Check | Pass Condition |
|---|---|
| Signal Quality | Alert explains behavior clearly enough for first-pass triage |
| Correlation Readiness | Alert contains fields that can link to SIEM/endpoint data |
| Noise Tolerance | False-positive rate stays within team-defined threshold |
| Critical Coverage | No loss of visibility on critical assets/zones |
| Documentation | Rule purpose, tuning reason, and owner are recorded |

If a tuned rule fails two or more acceptance checks, roll back or re-tune before broad deployment.
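
The two-or-more rule above is simple enough to encode so that it is applied the same way every time. In this sketch the check identifiers and the function `acceptance_decision` are hypothetical, and treating a missing check result as a failure is an assumption.

```python
# Identifiers for the five acceptance checks in the table (names are assumed).
CHECKS = (
    "signal_quality",
    "correlation_readiness",
    "noise_tolerance",
    "critical_coverage",
    "documentation",
)


def acceptance_decision(results: dict):
    """Return (decision, failed_checks) for a tuned rule.

    results maps check name to True/False. A check with no recorded
    result counts as failed (an assumption, chosen to be conservative).
    """
    failed = [name for name in CHECKS if not results.get(name, False)]
    decision = "roll back or re-tune" if len(failed) >= 2 else "proceed"
    return decision, failed
```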

12) Quarterly SNORT governance cycle

Monthly tuning improves day-to-day quality; quarterly governance prevents long-term detection drift.

Quarterly governance actions

- Revalidate rule relevance against current threat trends

- Review suppressions that have exceeded intended lifetime

- Reassess sensor placement vs infrastructure changes

- Compare IDS coverage against vulnerability and incident trends

- Update ownership for rules tied to retired services

Governance scorecard

| Domain | Key Question |
|---|---|
| Coverage | Are critical assets still mapped to effective IDS visibility? |
| Quality | Are top noisy rules improving or recurring unchanged? |
| Ownership | Does every high-impact rule have an accountable owner? |
| Responsiveness | Are incident lessons converted into rule updates quickly? |

A tuned SNORT environment stays healthy when rule engineering, analyst feedback, and governance review operate as one loop.

Tuning operations worksheet for IDS teams

| Workstream | Owner | First Action | Validation Signal |
|---|---|---|---|
| Baseline discipline | Detection lead | Capture top noisy SIDs with context tags | Consistent baseline trend data available |
| Suppression governance | SOC manager | Enforce owner + expiry for every suppression | Suppression debt reduces over time |
| Correlation integration | SIEM engineer | Map SNORT alerts to auth/endpoint context | Higher triage confidence in escalations |
| Coverage assurance | Security architect | Review tuned rules against critical assets | No high-risk blind spots introduced |

Execution checklist

- Review top noisy alerts weekly with root-cause tagging

- Document all threshold changes with reason and impact notes

- Revalidate tuned rules after major infra/app changes

- Track missed-detection indicators alongside noise reduction

Alert review handoff bundle

| Artifact | Minimum Content | Consumer |
|---|---|---|
| Rule change log | SID, change type, owner, date, rationale | Detection governance |
| Tuning impact note | Before/after volume and quality snapshot | SOC leadership |
| Coverage map | Asset/zone visibility after tuning | Security architecture |
| Follow-up queue | Rules requiring additional validation | Detection engineering |

Quality checks

- Did alert volume drop without losing critical-signal coverage?

- Are changed rules reproducible and peer-reviewable?

- Are suppressions still justified by current environment behavior?

90-day IDS tuning cadence

Days 1–30

- Build and validate baseline by rule family and zone

- Apply narrow high-impact tuning fixes

- Publish first monthly quality scorecard

Days 31–60

- Expand correlation with SIEM and endpoint telemetry

- Remove stale suppressions and reset ownership where missing

- Track true-positive lift after tuning changes

Days 61–90

- Run quarterly governance review and rule relevance audit

- Update tuning standards from incident lessons learned

- Publish next-quarter rule improvement priorities

| KPI | Why It Matters |
|---|---|
| False-positive reduction rate | Measures tuning effectiveness |
| True-positive confirmation rate | Ensures detection value remains strong |
| Suppressions with valid owner/expiry (%) | Reflects governance maturity |
| Coverage against critical assets | Guards against blind spots |

IDS tuning matures when performance metrics, ownership discipline, and threat-informed rule evolution stay tightly connected.

Tuning workflow with change control (how mature teams do it)

False-positive reduction should never be random edits to production rules. Treat tuning like engineering.

Rule change record (minimal)

| Field | Example |
|---|---|
| Rule SID | 1:2024210 |
| Change type | threshold / suppress / content update |
| Reason | “High-volume benign scanner from approved subnet” |
| Evidence | Alert samples, packet extracts, timeframe |
| Risk | What might be missed after the change |
| Reviewer | Name/team |
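
A change record can also generate the configuration line it describes, which keeps the record and the deployed change from drifting apart. The sketch below assumes the Snort 2.9-style `suppress` and `event_filter` directive syntax; Snort 3 configures event filtering differently, so verify the output against the manual for your version. The helper names are hypothetical, and the rule ID is read as `gid:sid`, matching the example in the table.

```python
TRACK_MODES = ("by_src", "by_dst")
FILTER_TYPES = ("limit", "threshold", "both")


def parse_rule_id(rule_id: str):
    """Split a 'gid:sid' string such as '1:2024210' into two integers."""
    gid, sid = rule_id.split(":")
    return int(gid), int(sid)


def render_suppress(rule_id: str, track: str, ip: str) -> str:
    """Render a Snort 2.9-style suppress line scoped to one address or range."""
    if track not in TRACK_MODES:
        raise ValueError(f"track must be one of {TRACK_MODES}")
    gid, sid = parse_rule_id(rule_id)
    return f"suppress gen_id {gid}, sig_id {sid}, track {track}, ip {ip}"


def render_event_filter(rule_id: str, kind: str, track: str,
                        count: int, seconds: int) -> str:
    """Render a Snort 2.9-style event_filter line for rate-based tuning."""
    if kind not in FILTER_TYPES:
        raise ValueError(f"type must be one of {FILTER_TYPES}")
    if track not in TRACK_MODES:
        raise ValueError(f"track must be one of {TRACK_MODES}")
    gid, sid = parse_rule_id(rule_id)
    return (
        f"event_filter gen_id {gid}, sig_id {sid}, type {kind}, "
        f"track {track}, count {count}, seconds {seconds}"
    )
```

Requiring an explicit `ip` argument for suppressions makes a global, unscoped suppression impossible to generate by accident.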

Test harness approach

- Maintain a small set of PCAPs that cover “must-detect” attack scenarios, plus benign traffic captures.

- For high-risk changes, replay test traffic in a staging sensor.

- Validate that you still catch “must-detect” scenarios after tuning.
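
The comparison step of such a harness can be sketched independently of how traffic is replayed. This example assumes you have already replayed each capture through a staging sensor and collected the set of SIDs that fired; the capture names, the dictionary shapes, and the function `regression_report` are hypothetical.

```python
def regression_report(must_detect, benign_captures, observed):
    """Compare observed alerts against expectations after a tuning change.

    must_detect:     {capture name: set of SIDs that must still fire}
    benign_captures: set of capture names expected to produce no alerts
    observed:        {capture name: set of SIDs that fired on the staging sensor}
    """
    missed = {}
    for capture, expected in must_detect.items():
        gap = expected - observed.get(capture, set())
        if gap:
            missed[capture] = gap

    unexpected = {
        capture: observed[capture]
        for capture in benign_captures
        if observed.get(capture)
    }
    return {
        "missed": missed,          # lost detections: block the change
        "unexpected": unexpected,  # benign traffic still alerting: residual noise
        "passed": not missed and not unexpected,
    }
```

A non-empty `missed` entry is the signal that tuning removed a must-detect scenario and the change should not be promoted.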

Performance and stability metrics

| Metric | Use |
|---|---|
| Alerts/1k packets | Tracks noise relative to traffic |
| CPU/memory per sensor | Prevents performance regressions |
| Top noisy signatures | Focuses tuning where it matters |
| Suppression count | Signals drift and overfitting |
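
Normalizing alert counts by traffic volume is what makes before/after comparisons fair when traffic itself changes. A minimal sketch of that arithmetic, with hypothetical function names:

```python
def alerts_per_1k_packets(alerts: int, packets: int) -> float:
    """Return alert density: alerts per 1,000 packets inspected."""
    if packets <= 0:
        raise ValueError("packets must be positive")
    return alerts * 1000 / packets


def percent_change(before: float, after: float) -> float:
    """Return the relative change from before to after, in percent."""
    if before == 0:
        raise ValueError("before must be non-zero")
    return (after - before) / before * 100
```

For example, 250 alerts over 1,000,000 packets is a density of 0.25 alerts per 1k packets; if the pre-tuning density was 0.5, that is a 50% reduction.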

When to tune vs when to fix telemetry

- Tune only after confirming the traffic is expected and documented.

- If the alert exists because of misconfigured logging or broken parsing, fix ingestion first.

- If the detection logic is conceptually wrong, rewrite rather than suppress.

This keeps Snort tuning professional: documented changes, testable behavior, and measurable outcomes.