Severity labels are easy to assign and easy to misuse. The hard part is writing findings that engineers can fix, managers can prioritize, and executives can understand without losing technical truth.

CVSS helps when used as a scoring framework, not as a shortcut for thinking. A high-quality report ties CVSS to evidence, business context, remediation ownership, and retest outcomes.

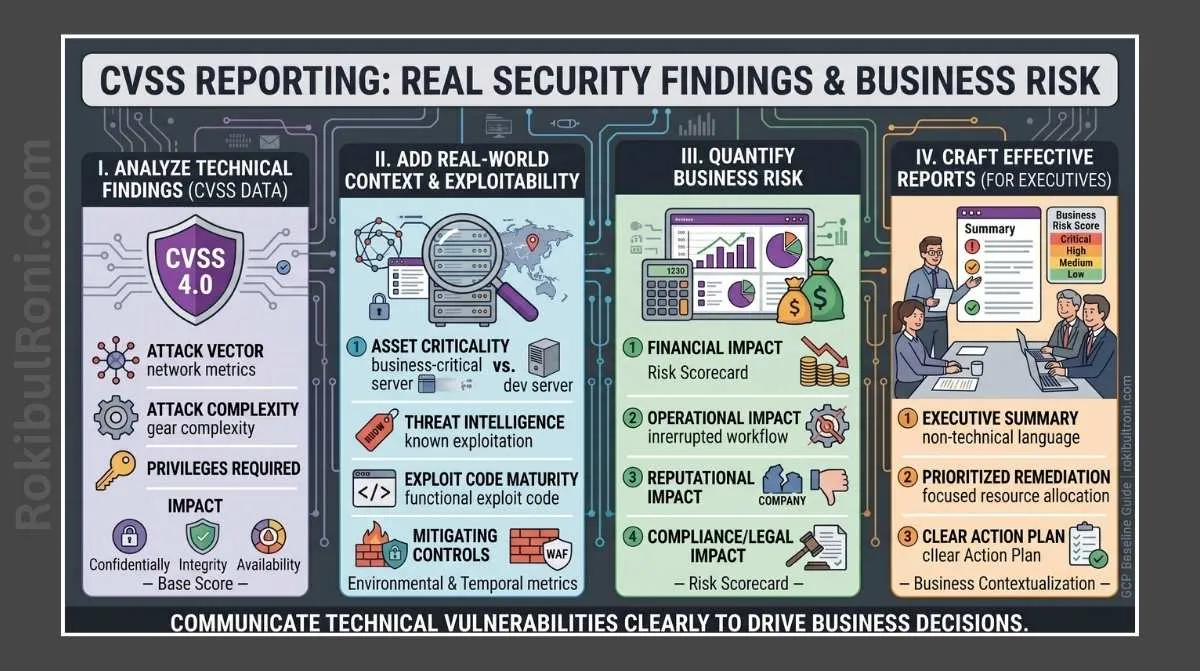

CVSS reporting for real security findings

Use this workflow to produce findings that are accurate, credible, and operationally useful.

1) Why severity is not just a scanner label

Scanner output can suggest priority, but it rarely captures architecture context, compensating controls, business impact, or exploitability constraints in your environment.

Practical severity reality check

- Automated severity is a starting signal, not final truth

- CVSS base score reflects technical characteristics, not business priority

- Environmental context can raise or lower practical urgency

- Evidence quality often matters as much as score precision

- Retest status shows whether the risk is still active

Technical vs business interpretation

| Dimension | What It Represents | Typical Mistake |
|---|---|---|
| CVSS Base Severity | Technical characteristics of the vulnerability | Treating score as the only priority factor |
| Exploitability Context | How reachable and realistic exploitation is in this environment | Ignoring access constraints and trust boundaries |
| Business Impact | Operational, legal, financial, or reputational consequence | Writing vague impact without business mapping |
| Remediation Priority | Sequencing based on risk + effort + service criticality | Prioritizing only by scanner rank |

2) What CVSS is, and what it is not

CVSS is a standardized way to express technical severity using defined metrics. It improves consistency across findings when analysts apply it carefully.

CVSS is not a full risk model. It does not automatically account for your regulatory exposure, customer impact, incident readiness, or compensating controls unless those are documented separately.

Use CVSS correctly

- Keep the score calculation transparent and reproducible

- Document the vector string with each finding

- Separate technical severity from business decision language

- State assumptions where evidence is incomplete
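Documenting the vector is only useful if the vector is well formed. A minimal sketch of a pre-publication check, assuming CVSS v3.1 base vectors only; the function name `validate_base_vector` is hypothetical, and the metric names and allowed values come from the v3.1 specification. A vector that also carries temporal or environmental metrics (which the specification allows) would be flagged as unexpected by this simplified version.

```python
# Base metrics listed in the CVSS v3.1 specification, with the
# single-letter values each one may take.
BASE_METRICS = {
    "AV": "NALP",
    "AC": "LH",
    "PR": "NLH",
    "UI": "NR",
    "S": "UC",
    "C": "HLN",
    "I": "HLN",
    "A": "HLN",
}


def validate_base_vector(vector: str) -> list[str]:
    """Return a list of problems; an empty list means the base vector is well formed."""
    problems = []
    parts = vector.strip().split("/")
    if parts[0] != "CVSS:3.1":
        problems.append("vector must start with 'CVSS:3.1'")
    seen = set()
    for part in parts[1:]:
        name, sep, value = part.partition(":")
        if not sep:
            problems.append(f"malformed component {part!r}")
            continue
        if name not in BASE_METRICS:
            problems.append(f"unexpected metric {name!r}")
            continue
        if name in seen:
            problems.append(f"metric {name} appears more than once")
            continue
        seen.add(name)
        # len() check stops an empty value from passing the substring test.
        if len(value) != 1 or value not in BASE_METRICS[name]:
            problems.append(f"invalid value {value!r} for {name}")
    missing = [m for m in BASE_METRICS if m not in seen]
    if missing:
        problems.append("missing base metrics: " + ", ".join(missing))
    return problems
```

Running this on every finding before review turns "score but no vector" and typo'd vectors into explicit, fixable problems instead of reviewer surprises.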

3) Finding structure that drives action

If a finding is missing owner context, endpoint/asset clarity, or remediation detail, it will stall no matter how accurate the score is.

Practical finding structure

- Title: the specific insecure behavior, not just the generic vulnerability family

- Affected asset: hostname/service/application/component

- Severity: CVSS score + vector + severity band

- Description: what was observed in controlled testing

- Evidence summary: concise proof with redacted artifacts

- Business impact: plain-language operational consequence

- Remediation: implementable steps with likely owner team

- References: relevant standard or internal control mapping

- Owner/SLA: accountable team and expected fix window

- Retest status: pending, passed, failed, or partial with date
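The severity band should always be derivable from the score, so a mismatch between the two is a reporting error. A minimal sketch, assuming CVSS v3.1 scores and the qualitative severity rating scale from the v3.1 specification (None 0.0, Low 0.1 to 3.9, Medium 4.0 to 6.9, High 7.0 to 8.9, Critical 9.0 to 10.0); the function names are hypothetical.

```python
def severity_band(score: float) -> str:
    """Map a CVSS v3.1 score to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS scores range from 0.0 to 10.0, got {score}")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


def band_matches(score: float, stated_band: str) -> bool:
    """True when the band written in the report agrees with the numeric score."""
    return severity_band(score).lower() == stated_band.strip().lower()
```

For example, `band_matches(6.5, "High")` is `False`, which is exactly the kind of inconsistency a reviewer should catch before publishing.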

Report-ready finding card table

| Field | Minimum Quality Bar |
|---|---|
| Title | Clearly describes insecure behavior and affected function |
| Affected Asset | Includes exact service/endpoint/environment |
| CVSS | Score and vector documented in report body |
| Evidence | Timestamped and reproducible in safe terms |
| Business Impact | States what can happen to operations/data/users |
| Remediation | Actionable and assigned to a logical owner |
| Retest | Explicit status with date and tester notes |
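The minimum quality bar can be enforced mechanically before a human reviewer spends time on wording. A minimal sketch, assuming findings are held as simple records; `FindingCard` and `quality_gaps` are hypothetical names, and the checks cover only the structural parts of the bar (a script cannot judge whether remediation is truly actionable).

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

RETEST_STATES = {"pending", "passed", "failed", "partial"}


@dataclass
class FindingCard:
    # Field names are illustrative; map them onto your own report schema.
    title: str
    affected_asset: str
    cvss_score: Optional[float]
    cvss_vector: str
    evidence: str
    business_impact: str
    remediation: str
    owner: str
    retest_status: str
    retest_date: Optional[date] = None


def quality_gaps(card: FindingCard) -> list[str]:
    """Return every structural way the card falls short of the minimum quality bar."""
    gaps = []
    for name in ("title", "affected_asset", "evidence", "business_impact", "remediation", "owner"):
        if not getattr(card, name).strip():
            gaps.append(f"{name} is empty")
    # A score without a vector cannot be reproduced or reviewed.
    if card.cvss_score is None or not card.cvss_vector.strip():
        gaps.append("CVSS needs both a score and a vector")
    if card.retest_status.strip().lower() not in RETEST_STATES:
        gaps.append("retest status must be pending, passed, failed, or partial")
    if card.retest_date is None:
        gaps.append("retest status needs a date")
    return gaps
```

An empty list means the card is structurally ready for review; anything else goes back to the author with a precise to-do list.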

4) Weak vs strong finding titles (before/after)

A title should explain risk behavior immediately. If readers need to open five sections to understand the issue, the title is too weak.

| Weak Title | Strong Title |
|---|---|
| "Broken Access Control" | "Invoice Export Endpoint Allows Cross-Tenant Data Access for Standard User Role" |
| "Sensitive Data Exposure" | "Account Profile API Returns Full National ID Field to Low-Privilege Session" |
| "Authentication Issue" | "Password Reset Token Remains Valid After Successful Password Change" |
| "Rate Limit Missing" | "Login API Accepts Sustained High-Volume Requests Without Backoff Controls" |

5) CVSS base metrics in plain language

Use this table in internal reporting guides so junior analysts score consistently.

| CVSS Base Metric | Plain-Language Meaning | Reporting Tip |
|---|---|---|
| Attack Vector (AV) | Where the attacker must be (network, adjacent, local, physical) | Explain practical reachability in your environment |
| Attack Complexity (AC) | How difficult conditions are for success | Note if unusual prerequisites reduce realism |
| Privileges Required (PR) | Access level needed before attack | Tie to role model and identity controls |
| User Interaction (UI) | Whether another user action is required | Describe realistic likelihood of that interaction |
| Scope (S) | Whether impact crosses security boundaries | Clarify system-to-system blast radius |
| Confidentiality (C) | Potential data exposure impact | Map to data classification where possible |
| Integrity (I) | Potential unauthorized modification impact | Link to transaction or workflow trust effects |
| Availability (A) | Potential service disruption impact | Describe user/business service effect clearly |
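Seeing how these eight metrics combine makes scoring disagreements easier to resolve. A minimal sketch of the CVSS v3.1 base-score equations in Python, assuming a complete, valid base vector (temporal and environmental metrics are ignored). The weights and the Roundup function follow the v3.1 specification; the function names are hypothetical, and results should be cross-checked against FIRST's official calculator before being relied on.

```python
import math

# Base metric weights from the CVSS v3.1 specification.
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},
    "AC": {"L": 0.77, "H": 0.44},
    "UI": {"N": 0.85, "R": 0.62},
    "CIA": {"H": 0.56, "L": 0.22, "N": 0.0},
}
# Privileges Required carries a higher weight when Scope is Changed.
PR_WEIGHTS = {
    "U": {"N": 0.85, "L": 0.62, "H": 0.27},
    "C": {"N": 0.85, "L": 0.68, "H": 0.5},
}


def roundup(value: float) -> float:
    """v3.1 Roundup: smallest one-decimal number >= value, robust to float error."""
    int_input = round(value * 100000)
    if int_input % 10000 == 0:
        return int_input / 100000.0
    return (math.floor(int_input / 10000) + 1) / 10.0


def base_score(vector: str) -> float:
    """Compute the CVSS v3.1 base score from a base vector string."""
    prefix, *parts = vector.split("/")
    if prefix != "CVSS:3.1":
        raise ValueError("expected a CVSS:3.1 vector")
    m = dict(part.split(":", 1) for part in parts)
    scope_changed = m["S"] == "C"

    # Impact sub-score (ISS) combines the three CIA impacts.
    iss = 1 - (
        (1 - WEIGHTS["CIA"][m["C"]])
        * (1 - WEIGHTS["CIA"][m["I"]])
        * (1 - WEIGHTS["CIA"][m["A"]])
    )
    if scope_changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    exploitability = (
        8.22
        * WEIGHTS["AV"][m["AV"]]
        * WEIGHTS["AC"][m["AC"]]
        * PR_WEIGHTS[m["S"]][m["PR"]]
        * WEIGHTS["UI"][m["UI"]]
    )
    if impact <= 0:
        return 0.0
    if scope_changed:
        return roundup(min(1.08 * (impact + exploitability), 10))
    return roundup(min(impact + exploitability, 10))
```

For instance, the decision-log vector used later in this article, `CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:N`, scores 9.1. Keeping the calculation in a script alongside the report makes every published score reproducible from its vector.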

6) Contextual adjustments that change priority decisions

Two findings with similar CVSS scores can have very different remediation priority once business context is applied.

Context factors to document explicitly

- Internet exposure vs internal-only segment

- Sensitive data class (customer records, regulated data, credentials)

- Asset criticality (revenue path, identity platform, operational dependency)

- Compensating controls (WAF, segmentation, strong monitoring, workflow constraints)

- Detection and response readiness (alert quality, containment speed)

Context adjustment table

| Context Signal | Priority Effect | How to Capture in Report |
|---|---|---|
| Internet-facing critical API | Increases urgency | Note exposure path and business dependency |
| Strong compensating control with validated efficacy | May lower immediate urgency | Document control validation evidence |
| Regulated data present | Increases compliance and legal impact | Map to policy/compliance obligations |
| Isolated low-criticality sandbox | May reduce operational urgency | Confirm no production data or trust linkage |
| Repeated finding category across teams | Increases program-level risk | Flag as systemic control gap |

7) Communicating with executives without hiding technical detail

Executive readers need decision-ready clarity: what matters, why it matters now, and what is being done.

Executive-friendly pattern

- Start with business effect, not tool output

- State technical risk in one plain-language sentence

- Show remediation ownership and expected timeline

- Include residual risk if not fully fixed yet

- Keep appendix for deep technical artifacts

One-paragraph leadership format

“A permission check weakness in the customer billing API allows unauthorized viewing of other tenant invoice metadata under specific authenticated conditions. The issue is scored high technically and affects externally exposed production services tied to finance workflows. Engineering ownership is assigned, remediation is in progress, and retest is scheduled for the next release gate.”

That format keeps decision-makers aligned without removing precision from technical sections.

8) Common reporting mistakes that reduce credibility

- Inflating severity without clear technical basis

- Writing impact claims that are dramatic but unsupported

- Omitting CVSS vectors while presenting only numeric score

- Pasting raw scanner output without analyst interpretation

- Leaving remediation vague or non-actionable

- Skipping owner assignment and SLA expectation

- Publishing findings without retest status updates

- Ignoring uncertainty and assumptions in complex environments

9) Reusable finding template for day-to-day reporting

Use this template as a default reporting block for vulnerability management and penetration testing outputs.

| Section | Template Prompt |
|---|---|
| Finding Title | What insecure behavior occurs, where, and under which role/context? |
| Affected Asset/Endpoint | Which exact system, endpoint, method, and environment are affected? |
| Severity (CVSS) | What is the score, vector, and rationale for each key metric choice? |
| Description | What was observed during authorized testing in concise technical terms? |
| Evidence Summary | Which redacted artifacts prove the issue reproducibly and safely? |
| Business Impact | What can this issue cause for operations, users, compliance, or trust? |
| Remediation Guidance | What concrete engineering steps should be implemented first? |
| Owner & SLA | Which team owns the fix, and what timeline is expected? |
| Retest Status | Is it pending, passed, failed, or partially fixed with date and notes? |

Fast quality gate before publishing

- Does the title communicate behavior and scope clearly?

- Is the CVSS vector present and internally consistent?

- Is business impact specific and evidence-backed?

- Can engineering execute remediation without extra clarification?

- Is the retest plan visible and accountable?

Consistent CVSS reporting is less about perfect wording and more about disciplined structure, honest evidence, and clear accountability across technical and business teams.

10) Practical SLA mapping without severity inflation

SLA decisions should combine technical severity and business context without distorting CVSS scoring.

| Finding Profile | Suggested SLA Direction | Coordination Priority |
|---|---|---|
| High severity + internet exposure + critical asset | Fast-track remediation window | Executive + engineering owner alignment |
| Medium severity + strong compensating controls | Standard remediation cycle | Engineering owner with periodic security check |
| Low severity + low criticality | Planned backlog remediation | Product/security governance review |
| Recurrent control weakness across teams | Program-level remediation initiative | Security architecture + platform leadership |

Use SLA language as prioritization governance, not as a substitute for evidence quality.
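The key property of this mapping is that context changes the SLA, never the CVSS score. A minimal sketch that encodes the table's four profiles, assuming context is captured as simple flags; `FindingContext` and `sla_direction` are hypothetical names, treating Critical like High is an assumption, and the fallback row for profiles the table does not cover is illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FindingContext:
    band: str                             # qualitative CVSS band, left unchanged
    internet_facing: bool
    critical_asset: bool
    validated_compensating_control: bool  # verified, not assumed
    recurrent_across_teams: bool


def sla_direction(ctx: FindingContext) -> tuple[str, str]:
    """Return (SLA direction, coordination priority); the CVSS score is never edited."""
    band = ctx.band.lower()
    # Systemic weaknesses are handled as a program, whatever the individual score.
    if ctx.recurrent_across_teams:
        return ("Program-level remediation initiative",
                "Security architecture + platform leadership")
    if band in {"high", "critical"} and ctx.internet_facing and ctx.critical_asset:
        return ("Fast-track remediation window",
                "Executive + engineering owner alignment")
    if band == "medium" and ctx.validated_compensating_control:
        return ("Standard remediation cycle",
                "Engineering owner with periodic security check")
    if band == "low" and not ctx.critical_asset:
        return ("Planned backlog remediation",
                "Product/security governance review")
    # Hypothetical fallback: profiles outside the table go to a human decision.
    return ("Manual triage required", "Report lead + risk owner")
```

Because the band is an input and never an output, nobody can "fix" an SLA by quietly editing the score.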

11) Closure states that improve audit and retest clarity

Many reports fail at closure because status labels are ambiguous. Standardize closure states early.

| Closure State | Meaning |
|---|---|
| Open | Risk confirmed and remediation not started |
| In Progress | Remediation underway, retest pending |
| Mitigated Pending Retest | Control change deployed, validation scheduled |
| Closed (Retest Passed) | Risk no longer reproducible in approved retest |
| Accepted Risk | Business owner accepts residual risk formally |

A disciplined closure model makes CVSS reporting more useful for security operations, audit teams, and leadership follow-up.
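Standardized states become enforceable once the allowed transitions are written down. A minimal sketch, assuming the five closure states above; the transition policy itself (for example, a failed retest returning to In Progress, or an expired acceptance reopening the finding) is a hypothetical choice to adapt, and `transition` is an illustrative name.

```python
from enum import Enum


class Closure(Enum):
    OPEN = "Open"
    IN_PROGRESS = "In Progress"
    MITIGATED_PENDING_RETEST = "Mitigated Pending Retest"
    CLOSED_RETEST_PASSED = "Closed (Retest Passed)"
    ACCEPTED_RISK = "Accepted Risk"


# Hypothetical policy: a finding closes only through a passed retest or a
# formal risk acceptance, never straight from Open.
ALLOWED = {
    Closure.OPEN: {Closure.IN_PROGRESS, Closure.ACCEPTED_RISK},
    Closure.IN_PROGRESS: {Closure.MITIGATED_PENDING_RETEST, Closure.ACCEPTED_RISK},
    Closure.MITIGATED_PENDING_RETEST: {Closure.CLOSED_RETEST_PASSED, Closure.IN_PROGRESS},
    Closure.CLOSED_RETEST_PASSED: set(),
    Closure.ACCEPTED_RISK: {Closure.OPEN},
}


def transition(current: Closure, target: Closure, evidence: str) -> Closure:
    """Move to a new closure state, requiring an allowed path and evidence."""
    if target not in ALLOWED[current]:
        raise ValueError(f"{current.value} -> {target.value} is not allowed")
    if not evidence.strip():
        raise ValueError("every status change needs evidence (retest notes, sign-off, ticket)")
    return target
```

Logging each successful call together with its evidence string gives auditors the closure history directly.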

Reporting operations worksheet

| Workstream | Owner | First Action | Validation Signal |
|---|---|---|---|
| Severity consistency | Report lead | Enforce CVSS vector in every finding | Zero findings with score but no vector |
| Business context mapping | Risk owner | Add data/asset criticality tags | Impact statements become decision-ready |
| Remediation quality | Engineering liaison | Require concrete implementation actions | Reduced clarification loops from dev teams |
| Closure governance | Security PM | Track status transitions with evidence | Audit-ready closure history for each issue |

Operating checklist

- No severity assignment without documented rationale

- No closure without retest or formal risk acceptance

- No executive summary without prioritized business impact themes

- No remediation item without owner and target timeline

Executive and engineering handoff model

| Handoff Artifact | Audience | Purpose |
|---|---|---|
| Risk snapshot page | Leadership | Prioritization and funding decisions |
| Technical findings package | Engineering | Implementation and retest execution |
| SLA tracker | Security governance | Follow-through and accountability |
| Closure report | Audit/risk teams | Evidence of risk reduction lifecycle |

Communication quality checks

- Are top risks clearly tied to business functions?

- Are technical details precise enough for implementation?

- Are residual risks explicit where full remediation is not complete?

90-day reporting maturity plan

Days 1–30

- Standardize finding template across all report types

- Align on CVSS methodology and hold a reviewer calibration session

- Build shared glossary for severity and business-impact wording

Days 31–60

- Introduce closure-state governance with retest evidence rules

- Improve executive summary format for decision clarity

- Track remediation response times by finding class

Days 61–90

- Run quarterly report quality review and peer audit

- Reduce repeat writing issues through template refinements

- Publish reporting KPI dashboard to leadership

| KPI | Why It Matters |
|---|---|
| Findings with complete CVSS vectors | Measures technical scoring discipline |
| Findings with actionable remediation | Indicates engineering usability |
| Retest-backed closure rate | Validates real risk reduction |
| Average remediation cycle by severity | Shows program response maturity |
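All four KPIs can be computed from a plain export of your findings tracker. A minimal sketch, assuming each finding exposes its band, vector, a reviewer's "actionable remediation" flag, and open/close dates; `FindingRecord` and `reporting_kpis` are hypothetical names, and "complete vector" here simply means all eight CVSS 3.x base metrics are named.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional

BASE_METRICS = {"AV", "AC", "PR", "UI", "S", "C", "I", "A"}


@dataclass
class FindingRecord:
    # Illustrative fields; adapt them to whatever your tracker exports.
    band: str
    cvss_vector: str
    remediation_actionable: bool
    opened: date
    closed: Optional[date] = None
    closed_by_retest: bool = False


def has_complete_vector(vector: str) -> bool:
    """True when a CVSS 3.x vector names all eight base metrics."""
    names = {part.split(":", 1)[0] for part in vector.split("/")[1:]}
    return vector.startswith("CVSS:3.") and BASE_METRICS <= names


def pct(part: int, whole: int) -> float:
    return round(100 * part / whole, 1) if whole else 0.0


def reporting_kpis(findings: list[FindingRecord]) -> dict:
    closed = [f for f in findings if f.closed is not None]
    cycle_days: dict[str, list[int]] = {}
    for f in closed:
        cycle_days.setdefault(f.band, []).append((f.closed - f.opened).days)
    return {
        "complete_vector_pct": pct(sum(has_complete_vector(f.cvss_vector) for f in findings), len(findings)),
        "actionable_remediation_pct": pct(sum(f.remediation_actionable for f in findings), len(findings)),
        # Share of closed findings whose closure was backed by a retest.
        "retest_backed_closure_pct": pct(sum(f.closed_by_retest for f in closed), len(closed)),
        "avg_cycle_days_by_band": {band: round(mean(days), 1) for band, days in cycle_days.items()},
    }
```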

CVSS reporting becomes high-value when scoring, communication, remediation, and closure are treated as one operational pipeline.

Scoring governance that executives and engineers both trust

CVSS becomes credible when your organization can explain how the score was derived, when it was adjusted, and why a decision was made.

1) Maintain a CVSS decision log

Record scoring decisions the same way you would record architectural decisions.

| Field | Example |
|---|---|
| Finding ID | WEB-012 |
| Vector | CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:N |
| Key assumptions | “Endpoint reachable only from corporate IP ranges” |
| Confirmed evidence | “ACL shows allowlist; request blocked off-VPN” |
| Adjustments | “Environmental score lowered due to network controls” |
| Owner/date | “Security Eng / 2026-02-10” |

2) Define when you allow overrides

Overrides should be rare and explicit:

- Environmental controls that are verified (not assumed).

- Asset criticality and data classification that are documented.

- Exposure changes (internal-only vs public) that are measured.

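If you can’t demonstrate the control with evidence, don’t adjust the score: report the base score as calculated and record the control as an unverified assumption. When evidence does support an adjustment, apply it to the environmental score and leave the base vector intact, as in the decision-log example above.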
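The "no evidence, no override" rule is easy to enforce if the decision log is structured data rather than free text. A minimal sketch, assuming decision-log entries are kept as records mirroring the table fields above; `ScoringDecision` and `record_adjustment` are hypothetical names, and the base vector is deliberately never modified by an adjustment.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ScoringDecision:
    # Mirrors the decision-log table; the structure itself is illustrative.
    finding_id: str
    vector: str  # base vector: never rewritten by an adjustment
    assumptions: list[str] = field(default_factory=list)
    confirmed_evidence: list[str] = field(default_factory=list)
    adjustments: list[str] = field(default_factory=list)
    owner: str = ""
    decided_on: Optional[date] = None


def record_adjustment(decision: ScoringDecision, adjustment: str,
                      evidence: str, owner: str, on: date) -> None:
    """Log an environmental adjustment only when verifying evidence is supplied."""
    if not evidence.strip():
        raise ValueError(
            f"{decision.finding_id}: no verified evidence; keep the base score "
            "and record the control as an assumption instead"
        )
    decision.confirmed_evidence.append(evidence)
    decision.adjustments.append(adjustment)
    decision.owner = owner
    decision.decided_on = on
```

Using the WEB-012 entry as input, the adjustment is accepted only because "ACL shows allowlist; request blocked off-VPN" is supplied as evidence; the same call with an empty evidence string is rejected.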

3) Communication matrix (who gets what)

| Audience | Needs | Deliverable |
|---|---|---|
| Engineering | Repro steps + fix guidance | Technical finding write-up |
| Product/Owners | Risk framing + timeline | One-paragraph risk summary |
| Executives | Trend + top drivers | Quarterly dashboard and narrative |
| Audit/Compliance | Traceability | Decision log + evidence links |

4) Report QA checklist

- Score matches the stated impact and evidence.

- Exploitability assumptions are stated, not implied.

- Remediation advice is feasible and prioritized.

- Retest criteria and closure definition are included.

This governance layer makes CVSS reporting professional: repeatable, explainable, and defensible across stakeholders.